The fifth meeting of our Phil Stat Forum*:

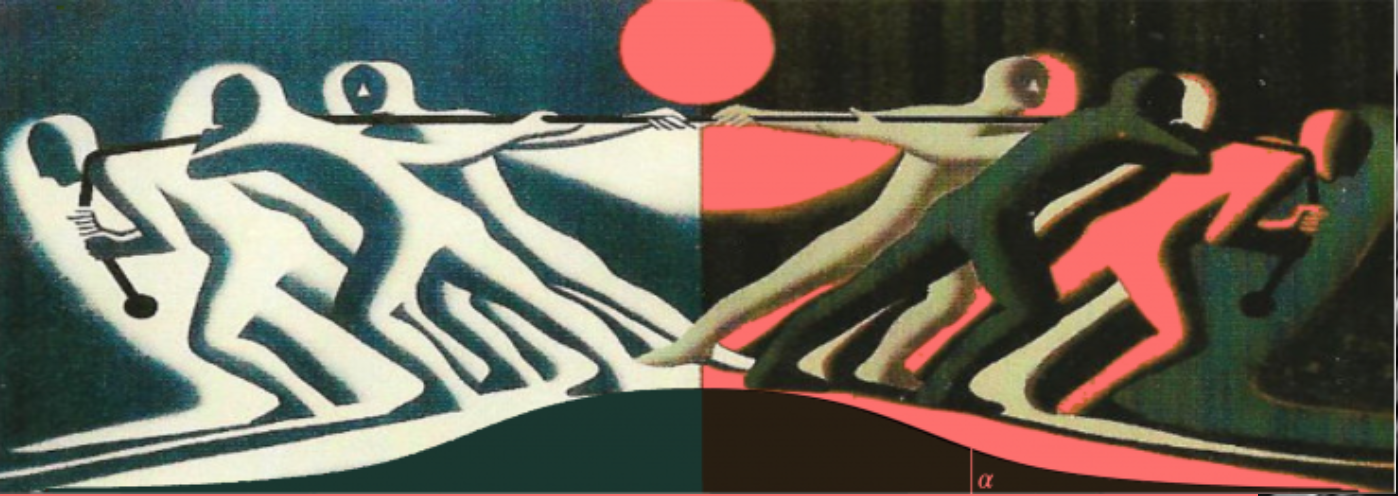

The Statistics Wars and Their Casualties

TIME: 15:00-16:45 (London); 10-11:45 a.m. (New York, EST)

“How can we improve replicability?”

Stephen Senn

Consultant Statistician

Edinburgh, Scotland

Although I have researched clinical trial design for many years, prior to the COVID-19 epidemic I had had nothing to do with vaccines. The only object of these amateur musings is to amuse amateurs by raising some issues I have pondered and found interesting.

A little over a year ago, the board of the American Statistical Association (ASA) appointed a new Task Force on Statistical Significance and Replicability (under then-president Karen Kafadar) to provide it with recommendations. [Its members are here (i).] You might remember my blogpost at the time, “Les Stats C’est Moi”. The Task Force worked quickly, despite the pandemic, giving its recommendations to the ASA Board early, in time for the Joint Statistical Meetings at the end of July 2020. But the ASA hasn’t revealed the Task Force’s recommendations, and I just learned yesterday that it has no plans to do so*. A panel session I was in at the JSM (P-values and ‘Statistical Significance’: Deconstructing the Arguments) grew out of this episode, and papers from the proceedings are now out. The introduction to my contribution gives you the background to my question, while revealing one of the recommendations (I only know of 2).

The fourth meeting of our New Phil Stat Forum*:

The Statistics Wars and Their Casualties

January 7, 16:00 – 17:30 (London time)

11 am-12:30 pm (New York, ET)**

**note time modification and date change

Putting the Brakes on the Breakthrough,

or “How I used simple logic to uncover a flaw in a controversial 60-year old ‘theorem’ in statistical foundations”

Deborah G. Mayo
