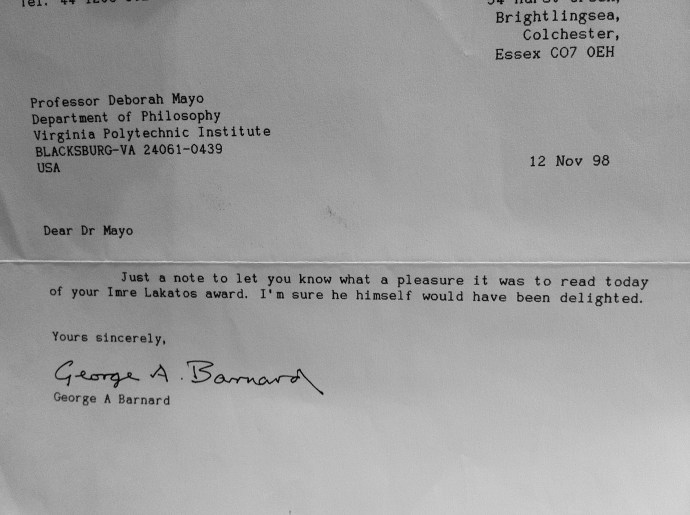

George Barnard sent me this note on hearing of my Lakatos Prize. He was to have been at my Lakatos dinner at the LSE (March 17, 1999)—winners are permitted to invite ~2-3 guests—but he called me at the LSE at the last minute to say he was too ill to come to London. Instead we had a long talk on the phone the next day, which I can discuss at some point.

Monthly Archives: September 2012

Levels of Inquiry

Many fallacious uses of statistical methods result from supposing that the statistical inference licenses a jump to a substantive claim that is ‘on a different level’ from a statistical one being probed. Given the familiar refrain that statistical significance is not substantive significance, it may seem surprising how often criticisms of significance tests depend on running the two together! But there are not just two levels: a great many need distinguishing, linking the collection, modeling, and analysis of data to a variety of substantive claims of inquiry (though for simplicity I often focus on the three depicted, described in various ways).

A question that continues to arise revolves around a blurring of levels, and is behind my recent ESP post. It goes roughly like this:

If we are prepared to take a statistically significant proportion of successes (greater than .5) in n Binomial trials as grounds for inferring a real (better than chance) effect (perhaps a difference between two teaching methods) but not as grounds for inferring Uri’s ESP (at guessing outcomes, say), then aren’t we implicitly invoking a difference in prior probabilities? The answer is no, but there are two very different points to be made:
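
The first-level question (is the success rate better than chance?) involves the same computation whatever the trials concern. A minimal sketch, assuming a one-sided exact binomial test of a null success rate of .5 and hypothetical counts of 62 successes in 100 trials (neither the test nor the numbers come from the post):

```python
from math import comb

def binomial_upper_p_value(successes: int, trials: int, p0: float = 0.5) -> float:
    """Exact one-sided p-value P(X >= successes) for X ~ Binomial(trials, p0)."""
    if not 0 <= successes <= trials:
        raise ValueError("successes must lie between 0 and trials")
    return sum(
        comb(trials, k) * p0**k * (1 - p0) ** (trials - k)
        for k in range(successes, trials + 1)
    )

# Hypothetical data: 62 successes in 100 trials.
# The same call applies whether the "successes" are students doing better
# under a new teaching method or correct guesses at hidden cards.
p_value = binomial_upper_p_value(62, 100)
print(f"one-sided p-value: {p_value:.4f}")
```

A small p-value here licenses at most "the success rate exceeds .5." The identical number could come from a teaching-method comparison or a card-guessing session, so by itself it cannot discriminate among explanations of a non-chance effect.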

First, merely finding evidence of a non-chance effect is at a different “level” from a subsequent question about the explanation or cause of a non-chance effect. To infer from the former to the latter is an example of a fallacy of rejection.[1] The nature and threats of error in the hypothesis about a specific cause of an effect are very different from those in merely inferring a real effect. There are distinct levels of inquiry and distinct errors at each given level. The severity analysis for the respective claims makes this explicit.[ii] Even a test that did a good job distinguishing and ruling out threats to a hypothesis of “mere chance” would not thereby have probed errors about specific causes or potential explanations. Nor does an “isolated record” of statistically significant results suffice. Recall Fisher: “In relation to the test of significance, we may say that a phenomenon is experimentally demonstrable when we know how to conduct an experiment which will rarely fail to give us a statistically significant result” (1935, 14). PSI researchers never managed to demonstrate this.
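
Fisher's criterion can be given a rough numerical gloss. The sketch below is only an illustration: the sample size of 200 and the "true" success rates of .5 and .6 are hypothetical. It computes how often a one-sided 5% binomial test rejects chance if the experiment is run again and again. Under a genuine effect of that size the experiment "rarely fails" to reach significance; under pure chance a significant result turns up at most 5% of the time, so an isolated record of one is weak grounds for a demonstrable phenomenon.

```python
from math import comb

def upper_tail(c: int, n: int, p: float) -> float:
    """P(X >= c) for X ~ Binomial(n, p)."""
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(c, n + 1))

def critical_value(n: int, p0: float = 0.5, alpha: float = 0.05) -> int:
    """Smallest count c whose upper-tail probability under p0 is <= alpha.
    c = n + 1 (an empty tail) always qualifies, so a value is always returned."""
    return next(c for c in range(n + 2) if upper_tail(c, n, p0) <= alpha)

def rejection_rate(n: int, true_p: float, p0: float = 0.5, alpha: float = 0.05) -> float:
    """Probability that a single run of the experiment is statistically significant."""
    return upper_tail(critical_value(n, p0, alpha), n, true_p)

for true_p in (0.5, 0.6):
    print(f"true rate {true_p}: significant in {rejection_rate(200, true_p):.1%} of runs")
```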

Insevere tests and pseudoscience

Against the PSI skeptics of this period (discussed in my last post), defenders of PSI would often contrive ways to count experimental results as success stories (e.g., if he failed to correctly predict the next card, maybe he was aiming at the second or third card). If the data could not be made to fit some ESP claim or other (e.g., through multiple end points), they might, as a last resort, be explained away as due to the negative energy of nonbelievers (or to being on the Carson show). Defenders managed to get their ESP hypothesis H to “pass,” but the “test” had little or no capability of finding (uncovering, admitting) the falsity of H, even if H is false. (This is the basis for my term “Gellerization.”) In such cases, I would deny that the results afford any evidence for H. They are terrible evidence for H. Now any domain will have some terrible tests, but a field that routinely passes off terrible tests as success stories I would deem pseudoscientific.
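
The “maybe he was aiming at the second or third card” move can be simulated. The setup below is hypothetical: 100 guesses at five equiprobable symbols, a one-sided 5% binomial test, and a guesser with no ability whatever. The "honest" analysis scores only the pre-specified target (the next card); the "Gellerized" analysis counts a pass if the next card, the one after, or the one after that yields a significant score. Admitting the extra end points substantially raises how often a pure-chance guesser "passes," so passing becomes far less capable of revealing that H is false.

```python
import random
from math import comb

def upper_tail(c: int, n: int, p: float) -> float:
    """P(X >= c) for X ~ Binomial(n, p)."""
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(c, n + 1))

def critical_value(n: int, p0: float, alpha: float = 0.05) -> int:
    """Smallest hit count whose chance upper-tail probability is <= alpha."""
    return next(c for c in range(n + 2) if upper_tail(c, n, p0) <= alpha)

def simulate(n_guesses=100, n_symbols=5, shifts=(0, 1, 2), n_sims=5_000, seed=1):
    """Return (honest pass rate, Gellerized pass rate) for a no-ESP guesser."""
    rng = random.Random(seed)
    c = critical_value(n_guesses, 1 / n_symbols)
    honest = gellerized = 0
    for _ in range(n_sims):
        # Pure chance: guesses and targets are independent uniform draws.
        targets = [rng.randrange(n_symbols) for _ in range(n_guesses + max(shifts))]
        guesses = [rng.randrange(n_symbols) for _ in range(n_guesses)]
        # Score each end point: hits on the next card, the one after, etc.
        scores = [sum(g == targets[i + s] for i, g in enumerate(guesses)) for s in shifts]
        honest += scores[0] >= c        # only the pre-specified end point counts
        gellerized += max(scores) >= c  # whichever end point "worked" counts
    return honest / n_sims, gellerized / n_sims

honest_rate, gellerized_rate = simulate()
print(f"chance guesser passes, one end point:   {honest_rate:.3f}")
print(f"chance guesser passes, best of three:   {gellerized_rate:.3f}")
```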

We get a kind of minimal requirement for a test result to afford any evidence of assertion H, however partial and approximate H may be: If a hypothesis H is assured of having* “passed” a test T, even if H is false, then test T is a terrible test or no test at all.**

Far from trying to reveal flaws, it masks them or prevents them from being uncovered. No one would be impressed to learn their bank had passed a “stress test” if it turns out that the test had little or no chance of giving a failing score to any bank, regardless of its ability to survive a stressed economy. (Would they?)

There are a million different ways to flesh out the idea, and I welcome hearing others. Now you might say that no one would disagree with this. Great. Because a core requirement for an adequate account of inquiry, as I see it, is that it be able to capture this rationale for deeming evidence pretty terrible and inquiry fairly pseudoscientific, and it should do so in a way that affords a starting point for not-so-awful tests and rather reliable learning.

* or very probably would have passed.

**QUESTION: I seek your input: which sounds better, or is more accurate: saying a test T passes a hypothesis H, or that a hypothesis H passes a test T? I’ve used both and want to settle on one.

Statistics and ESP research (Diaconis)

In the early ’80s, fresh out of graduate school, I persuaded Persi Diaconis, Jack Good, and Patrick Suppes to participate in a session I wanted to organize on ESP and statistics. It seems remarkable to me now, not only that they agreed to participate*, but the extent to which PSI research was taken seriously at the time. It wasn’t much later that all the recurring errors and loopholes, and the persistent cheating and self-delusion, despite earnest attempts to trigger and analyze the phenomena, would lead nearly everyone to label PSI research a “degenerating programme” (in the Popperian-Lakatosian sense).

(Though I’d have to check names and dates, I seem to recall that the last straw was when some of the Stanford researchers were found guilty of (unconscious) fraud. Jack Good continued to be interested in the area, but less so, I think. I do not know about the others.)

It is interesting to see how background information enters into inquiry here. So, even though it’s late on a Saturday night, here’s a snippet from one of the papers that caught my interest in graduate school: Diaconis’s (1978) “Statistical Problems in ESP Research,” in Science, along with some critical “letters.”

Summary. In search of repeatable ESP experiments, modern investigators are using more complex targets, richer and freer responses, feedback, and more naturalistic conditions. This makes tractable statistical models less applicable. Moreover, controls often are so loose that no valid statistical analysis is possible. Some common problems are multiple end points, subject cheating, and unconscious sensory cueing. Unfortunately, such problems are hard to recognize from published records of the experiments in which they occur; rather, these problems are often uncovered by reports of independent skilled observers who were present during the experiment. This suggests that magicians and psychologists be regularly used as observers. New statistical ideas have been developed for some of the new experiments. For example, many modern ESP studies provide subjects with feedback—partial information about previous guesses—to reward the subjects for correct guesses in hope of inducing ESP learning. Some feedback experiments can be analyzed with the use of skill-scoring, a statistical procedure that depends on the information available and the way the guessing subject uses this information. (p. 131)

Barnard, background info/ intentions

G.A. Barnard’s birthday is 9/23, so here’s a snippet of his discussion with Savage (1962) (link below [i]) that connects to our two recent issues: stopping rules, and background information (at least of one type).

Barnard: I have been made to think further about this issue of the stopping rule since I first suggested that the stopping rule was irrelevant (Barnard 1947a,b). This conclusion does not follow only from the subjective theory of probability; it seems to me that the stopping rule is irrelevant in certain circumstances. Since 1947 I have had the great benefit of a long correspondence—not many letters because they were not very frequent, but it went on over a long time—with Professor Bartlett, as a result of which I am considerably clearer than I was before. My feeling is that, as I indicated [on p. 42], we meet with two sorts of situation in applying statistics to data. One is where we want to have a single hypothesis with which to confront the data. Do they agree with this hypothesis or do they not? Now in that situation you cannot apply Bayes’s theorem because you have not got any alternatives to think about and specify—not yet. I do not say they are not specifiable—they are not specified yet. And in that situation it seems to me the stopping rule is relevant.

More on using background info

For the second* bit of background on the use of background info (for the new U-Phil for 9/25/12), I’ll reblog:

Background Knowledge: Not to Quantify, But To Avoid Being Misled By, Subjective Beliefs

…I am discovering that one of the biggest sources of confusion about the foundations of statistics has to do with what it means or should mean to use “background knowledge” and “judgment” in making statistical and scientific inferences. David Cox and I address this in our “Conversation” in RMM (2011)….

Insofar as humans conduct science and draw inferences, and insofar as learning about the world is not reducible to a priori deductions, it is obvious that “human judgments” are involved. True enough, but too trivial an observation to help us distinguish among the very different ways judgments should enter according to contrasting inferential accounts. When Bayesians claim that frequentists do not use or are barred from using background information, what they really mean is that frequentists do not use prior probabilities of hypotheses, at least when those hypotheses are regarded as correct or incorrect, if only approximately. So, for example, we would not assign relative frequencies to the truth of hypotheses such as (1) prion transmission is via protein folding without nucleic acid, or (2) the deflection of light is approximately 1.75″ (as if, as Peirce puts it, “universes were as plenty as blackberries”). How odd it would be to try to model these hypotheses as themselves having distributions: to us, statistical hypotheses assign probabilities to outcomes or values of a random variable.

U-Phil (9/25/12) How should “prior information” enter in statistical inference?

Andrew Gelman sent me an interesting note of his, “Ethics and the statistical use of prior information” [i]. In section 3 he comments on some of David Cox’s remarks in a conversation we recorded:

“A Statistical Scientist Meets a Philosopher of Science: A Conversation between Sir David Cox and Deborah Mayo,” published in Rationality, Markets and Morals [iii]. (Section 2 has some remarks on L. Wasserman.)

This was a part of a highly informal, frank, and entirely unscripted conversation, with minimal editing from the tape-recording [ii]. It was first posted on this blog on Oct. 19, 2011. A related, earlier discussion on Gelman’s blog is here.

I want to open this for your informal comments (“U-Phil,” ~750 words, by September 25) [iv]. (Send to error@vt.edu.)

Before I give my own “deconstruction” of Gelman on the relevant section, I will post a bit of background to the question of background. For starters, here’s the relevant portion of the conversation:

COX: Deborah, in some fields foundations do not seem very important, but we both think foundations of statistical inference are important; why do you think that is?

MAYO: I think because they ask about fundamental questions of evidence, inference, and probability. I don’t think that foundations of different fields are all alike; because in statistics we’re so intimately connected to the scientific interest in learning about the world, we invariably cross into philosophical questions about empirical knowledge and inductive inference.

COX: One aspect of it is that it forces us to say what it is that we really want to know when we analyze a situation statistically. Do we want to put in a lot of information external to the data, or as little as possible? It forces us to think about questions of that sort.

MAYO: But key questions, I think, are not so much a matter of putting in a lot or a little information. …What matters is the kind of information, and how to use it to learn. This gets to the question of how we manage to be so successful in learning about the world, despite knowledge gaps, uncertainties and errors. To me that’s one of the deepest questions and it’s the main one I care about. I don’t think a (deductive) Bayesian computation can adequately answer it.

Return to the comedy hour…(on significance tests)

These days, so many theater productions are updated revivals of older standards. Same with the comedy hours at the Bayesian retreat, and task force meetings of significance test reformers. So (on the 1-year anniversary of this blog) let’s listen in to one of the earliest routines (among the blog’s most-read), but with some new reflections (first considered in two earlier posts).

“Did you hear the one about the frequentist . . .

who claimed that observing ‘heads’ on a biased coin that lands heads with probability .05 is evidence of a statistically significant improvement over the standard treatment of diabetes, on the grounds that such an event occurs with low probability (.05)?”

The joke came from J. Kadane’s Principles of Uncertainty (2011, CRC Press*).

“Flip a biased coin that comes up heads with probability 0.95, and tails with probability 0.05. If the coin comes up tails reject the null hypothesis. Since the probability of rejecting the null hypothesis if it is true is 0.05, this is a valid 5% level test. It is also very robust against data errors; indeed it does not depend on the data at all. It is also nonsense, of course, but nonsense allowed by the rules of significance testing.” (439)

Much laughter.

___________________

But is it allowed? I say no. The null hypothesis in the joke can be in any field, perhaps it concerns mean transmission of Scrapie in mice (as in my early Kuru post). I know some people view significance tests as merely rules that rarely reject erroneously, but I claim this is mistaken. Both in significance tests and in scientific hypothesis testing more generally, data indicate inconsistency with H only by being counter to what would be expected under the assumption that H is correct (as regards a given aspect observed). Were someone to tell Prusiner that the testing methods he follows actually allow any old “improbable” event (a stock split in Apple?) to reject a hypothesis about prion transmission rates, Prusiner would say that person didn’t understand the requirements of hypothesis testing in science. Since the criticism would hold no water in the analogous case of Prusiner’s test, it must equally miss its mark in the case of significance tests**. That, recall, was Rule #1.
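
The point can be put in terms of what a rejection is sensitive to. A minimal sketch, assuming a one-sided z-test of H0: μ = 0 against μ > 0 with n = 25 observations of known σ = 1 (illustrative choices, not from Kadane or the post): both procedures reject a true null with probability .05, but only the z-test rejects more often as the data depart from what H0 predicts. The coin's rejection probability is .05 whatever μ is, so its "rejections" are never counter to what H0 would lead us to expect.

```python
from statistics import NormalDist

Z = NormalDist()                # standard Normal
ALPHA = 0.05
Z_CRIT = Z.inv_cdf(1 - ALPHA)   # one-sided cutoff, about 1.645

def coin_test_rejection_prob(mu: float) -> float:
    """Kadane's coin 'test': reject iff a coin with P(tails) = .05 lands tails.
    mu is deliberately unused: the data never enter, so the answer never changes."""
    return ALPHA

def z_test_rejection_prob(mu: float, n: int = 25, sigma: float = 1.0) -> float:
    """Probability that the one-sided z-test rejects H0: mu = 0, i.e. that
    sqrt(n) * xbar / sigma exceeds Z_CRIT, when the true mean is mu."""
    return 1 - Z.cdf(Z_CRIT - mu * n**0.5 / sigma)

for mu in (0.0, 0.2, 0.5):
    print(f"mu={mu:.1f}  coin: {coin_test_rejection_prob(mu):.3f}  "
          f"z-test: {z_test_rejection_prob(mu):.3f}")
```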

Stephen Senn: The nuisance parameter nuisance

Stephen Senn

Competence Centre for Methodology and Statistics

CRP Santé

Strassen, Luxembourg

“The nuisance parameter nuisance”

A great deal of statistical debate concerns ‘univariate’ error, or disturbance, terms in models. I put ‘univariate’ in inverted commas because as soon as one writes a model of the form (say) $Y_i = X_i\beta + \epsilon_i$, $i = 1, \dots, n$, and starts to raise questions about the distribution of the disturbance terms $\epsilon_i$, one is frequently led into multivariate speculations, such as ‘is the variance identical for every disturbance term?’ and ‘are the disturbance terms independent?’, and not just speculations such as ‘is the distribution of the disturbance terms Normal?’. Aris Spanos might also want me to put inverted commas around ‘disturbance’ (or ‘error’), since what I ought to be thinking about is the joint distribution of the outcomes $Y_i$ conditional on the predictors.

However, in my statistical world of planning and analysing clinical trials, the differences made to inferences according to whether one uses parametric versus non-parametric methods are often minor. Of course, using non-parametric methods does nothing to answer the problem of non-independent observations, but for experiments, as opposed to observational studies, you can frequently design in independence. That is a major potential pitfall avoided, but then there is still the issue of Normality. However, in my experience, this is rarely where the action is. Inferences rarely change dramatically on using ‘robust’ approaches (although one can always find examples with gross outliers where they do). However, there are other sorts of problem that can affect data which can make a very big difference.

After dinner Bayesian comedy hour….

Given it’s the first anniversary of this blog, which opened with the howlers in “Overheard at the comedy hour …” let’s listen in as a Bayesian holds forth on one of the most famous howlers of the lot: the mysterious role that psychological intentions are said to play in frequentist methods such as statistical significance tests. Here it is, essentially as I remember it (though shortened), in the comedy hour that unfolded at my dinner table at an academic conference:

Did you hear the one about the researcher who gets a phone call from the guy analyzing his data? First the guy congratulates him and says, “The results show a statistically significant difference at the .05 level—p-value .048.” But then, an hour later, the phone rings again. It’s the same guy, but now he’s apologizing. It turns out that the experimenter intended to keep sampling until the result was 1.96 standard deviations away from the 0 null—in either direction—so they had to reanalyze the data (n=169), and the results were no longer statistically significant at the .05 level.

Much laughter.

So the researcher is tearing his hair out when the same guy calls back again. “Congratulations!” the guy says. “I just found out that the experimenter actually had planned to take n=169 all along, so the results are statistically significant.”

Howls of laughter.

But then the guy calls back with the bad news . . .

It turns out that, having failed to score a sufficiently impressive effect after n′ trials, the experimenter went on to n″ trials, and so on and so forth until finally, say, on trial number 169, he obtained a result 1.96 standard deviations from the null.

It continues this way, and every time the guy calls in and reports a shift in the p-value, the table erupts in howls of laughter! From everyone except me, sitting in stunned silence, staring straight ahead. The hilarity ensues from the idea that the experimenter’s reported psychological intentions about when to stop sampling are altering the statistical results.
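
One way to see why the stopping rule need not be a matter of psychology: it changes the procedure's error probabilities. A minimal simulation sketch, assuming standard Normal observations under the null (μ = 0, σ = 1) and a two-sided 1.96 cutoff. The fixed-n procedure takes all 169 observations and tests once. The try-and-try-again procedure tests after every observation and stops at the first |z| ≥ 1.96 (or at n = 169). The seed and the number of replications are arbitrary choices.

```python
import random
from math import sqrt

def rejects_fixed_n(rng: random.Random, n: int = 169, z_crit: float = 1.96) -> bool:
    """Pre-specified n: take all n observations, then test once."""
    total = sum(rng.gauss(0.0, 1.0) for _ in range(n))
    return abs(total / sqrt(n)) >= z_crit

def rejects_trying_and_trying_again(rng: random.Random, max_n: int = 169,
                                    z_crit: float = 1.96) -> bool:
    """Optional stopping: test after every observation and stop as soon
    as the running z-statistic reaches +/- z_crit."""
    total = 0.0
    for n in range(1, max_n + 1):
        total += rng.gauss(0.0, 1.0)
        if abs(total / sqrt(n)) >= z_crit:
            return True
    return False

rng = random.Random(2012)
sims = 5_000
fixed = sum(rejects_fixed_n(rng) for _ in range(sims)) / sims
optional = sum(rejects_trying_and_trying_again(rng) for _ in range(sims)) / sims
print(f"fixed n = 169:      P(reject | null) ~ {fixed:.3f}")
print(f"try and try again:  P(reject | null) ~ {optional:.3f}")
```

Both procedures can end with the same final data and the same nominal z = 1.96, yet they differ in how often they would declare significance when the null is true.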