All: On July 30 (10am EST) I will give a practice run of my JSM presentation, delivered remotely like the one I will actually give on Aug 6 at the JSM. Co-panelist Stan Young may do so as well. One of our surprise guests tomorrow (not at the JSM) will be Yoav Benjamini! If you’re interested in attending our July 30 practice session,* please follow the directions here. Background items for this session are in the “readings” and “memos” of session 5.

*unless you’re already on our LSE Phil500 list

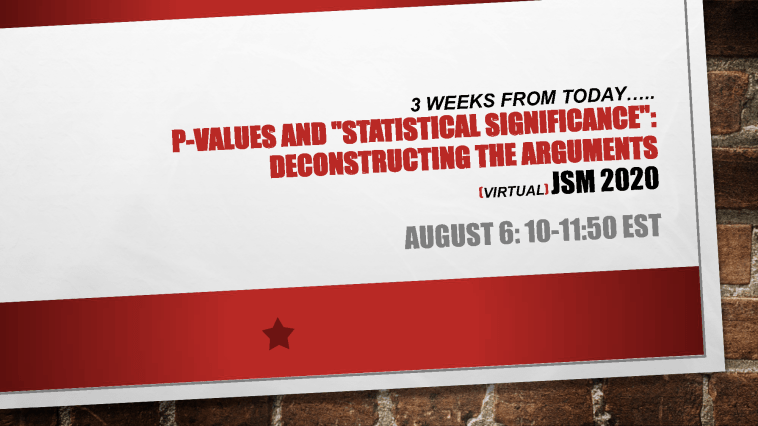

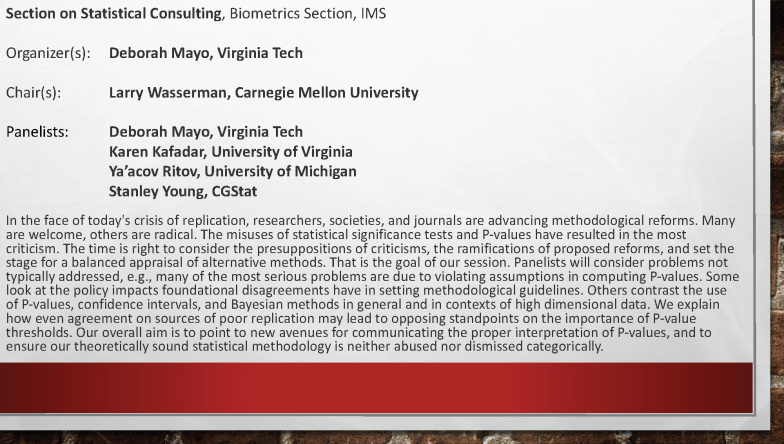

JSM 2020 Panel Flyer (PDF)

JSM online program (w/panel abstract & information)