For the second year in a row (unlike in the previous 9 years that I’ve been blogging), it’s not feasible to revisit that spot in the road, looking to get into a strange-looking taxi to head to “Midnight With Birnbaum”. Because of the extended pandemic, I am not going out this New Year’s Eve again, so the best I can hope for is a Zoom link, of the sort I received last year not long before midnight, that will link me to a hypothetical party with him. (The pic on the left is the only blurry image I have of the club I’m taken to.) I just keep watching my email to see if a Zoom link arrives. My book Statistical Inference as Severe Testing: How to Get Beyond the Statistics Wars (CUP, 2018) doesn’t include the argument from my article in Statistical Science (“On the Birnbaum Argument for the Strong Likelihood Principle”), which you can read at that link along with commentaries by A. P. Dawid, Michael Evans, Martin and Liu, D. A. S. Fraser (who sadly passed away in 2021), Jan Hannig, and Jan Bjørnstad. Still, there’s much in the book that I’d like to discuss with him. The (Strong) Likelihood Principle (LP or SLP), whether or not it is named, remains at the heart of many of the criticisms of Neyman-Pearson (N-P) statistics and statistical significance testing in general.

Birnbaum Brakes

Midnight With Birnbaum (Remote, Virtual Happy New Year 2021)!


Midnight With Birnbaum (Remote, Virtual Happy New Year 2020)!

Unlike in the past 9 years since I’ve been blogging, I can’t revisit that spot in the road outside the Elbar Room, looking to get into a strange-looking taxi to head to “Midnight With Birnbaum”. Because of the pandemic, I refuse to go out this New Year’s Eve, so the best I can hope for is a Zoom link that will take me to a hypothetical party with him. (The pic on the left is the only blurry image I have of the club I’m taken to.) I just keep watching my email to see if a Zoom link arrives. My book Statistical Inference as Severe Testing: How to Get Beyond the Statistics Wars (SIST 2018) doesn’t rehearse the argument from my Birnbaum article, but there’s much in it that I’d like to discuss with him. The (Strong) Likelihood Principle, whether or not it is named, remains at the heart of many of the criticisms of Neyman-Pearson (N-P) statistics and statistical significance testing in general. Let’s hope that in 2021 the American Statistical Association (ASA) will finally reveal the recommendations from the ASA Task Force on Statistical Significance and Replicability that the ASA Board itself created one year ago. The task force completed its recommendations early, back at the end of July 2020, but no response from the ASA has been forthcoming (to my knowledge). As Birnbaum insisted, the “confidence concept” is the “one rock in a shifting scene” of statistical foundations, insofar as there’s interest in controlling the frequency of erroneous interpretations of data. (See my rejoinder.) Birnbaum bemoaned the lack of an explicit evidential interpretation of N-P methods. I purport to give one in SIST 2018. Maybe it will come to fruition in 2021? Anyway, I was just sent an internet link, but it’s not Zoom, not Skype, not Webex, or anything I’ve ever seen before… no time to describe it now, but I’m recording, and the rest of the transcript is live; this year there are some new, relevant additions. Happy New Year!


A Perfect Time to Binge Read the (Strong) Likelihood Principle

An essential component of inference based on familiar frequentist notions (p-values, significance levels and confidence levels) is the relevant sampling distribution (hence the term sampling theory, or my preferred error statistics, as we get error probabilities from the sampling distribution). This feature results in violations of a principle known as the strong likelihood principle (SLP). Stated roughly, the SLP asserts that all the evidential import in the data (for parametric inference within a model) resides in the likelihoods. If accepted, it would render error probabilities irrelevant post-data.
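To make the SLP concrete, here is a minimal Python sketch of a standard textbook illustration that is not taken from this post: 9 heads and 3 tails, observed either under a design that fixes 12 tosses in advance (binomial) or under one that keeps tossing until the 3rd tail (negative binomial). The function names and the one-sided test of θ = 1/2 against θ > 1/2 are illustrative assumptions. The two likelihood functions are proportional, so the SLP treats the outcomes as evidentially equivalent, yet their p-values differ because each is computed from its own sampling distribution.

```python
from math import comb

HEADS, TAILS = 9, 3  # the observed data: 9 heads and 3 tails in 12 tosses

def lik_binomial(theta):
    """Design 1: toss exactly 12 times; X = number of heads ~ Binomial(12, theta)."""
    return comb(12, HEADS) * theta**HEADS * (1 - theta)**TAILS

def lik_negbin(theta):
    """Design 2: toss until the 3rd tail; Y = heads before it (negative binomial)."""
    return comb(HEADS + TAILS - 1, TAILS - 1) * theta**HEADS * (1 - theta)**TAILS

# The likelihoods differ only by the constant c = 220 / 55 = 4 at every theta,
# so under the SLP both outcomes carry the same evidence about theta.
ratios = {round(lik_binomial(t) / lik_negbin(t), 12) for t in (0.2, 0.5, 0.8)}

# One-sided p-values for H0: theta = 1/2 against theta > 1/2 (more heads).
def p_value_binomial():
    """P(X >= 9) when X ~ Binomial(12, 1/2)."""
    return sum(comb(12, k) for k in range(HEADS, 13)) / 2**12

def p_value_negbin():
    """P(Y >= 9) with theta = 1/2: Y >= 9 exactly when the first 11 tosses
    contain at most 2 tails."""
    m = HEADS + TAILS - 1
    return sum(comb(m, k) for k in range(TAILS)) / 2**m

p_fixed_n = p_value_binomial()
p_stop_at_3_tails = p_value_negbin()
print(ratios)                      # {4.0}
print(f"{p_fixed_n:.4f}")          # 0.0730
print(f"{p_stop_at_3_tails:.4f}")  # 0.0327
```

Under the SLP the gap between roughly 0.073 and 0.033 carries no evidential weight; for the error statistician it reflects a real difference in how often each procedure would yield so many heads when θ = 1/2.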

Next Phil Stat Forum: January 7: D. Mayo: Putting the Brakes on the Breakthrough (or “How I used simple logic to uncover a flaw in …..statistical foundations”)

The fourth meeting of our New Phil Stat Forum*:

The Statistics Wars

and Their Casualties

January 7, 16:00 – 17:30 (London time)

11 am-12:30 pm (New York, ET)**

**Note the time modification and date change.

Putting the Brakes on the Breakthrough,

or “How I used simple logic to uncover a flaw in a controversial 60-year-old ‘theorem’ in statistical foundations”

Deborah G. Mayo


HOW TO JOIN US: SEE THIS LINK

ABSTRACT: An essential component of inference based on familiar frequentist (error statistical) notions, such as p-values, statistical significance and confidence levels, is the relevant sampling distribution (hence the term sampling theory). This results in violations of a principle known as the strong likelihood principle (SLP), or just the likelihood principle (LP), which says, in effect, that outcomes other than those observed are irrelevant for inferences within a statistical model. Now, Allan Birnbaum was a frequentist (error statistician), but he found himself in a predicament: he seemed to have shown that the LP follows from uncontroversial frequentist principles! Bayesians, such as Savage, heralded his result as a “breakthrough in statistics”! But there’s a flaw in the “proof”, and that’s what I aim to show in my presentation by means of 3 simple examples:

- Example 1: Trying and Trying Again

- Example 2: Two instruments with different precisions (you shouldn’t get credit/blame for something you didn’t do)

- The Breakthrough: Don’t Birnbaumize that data my friend
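Example 1 (trying and trying again) can be sketched with a small Python simulation. It assumes a Normal model with known σ = 1, a true null μ = 0, and a nominal two-sided 0.05 test; the sample sizes, seed and helper names are hypothetical choices for illustration, not details from the talk. Because the decision to stop depends only on the data seen so far, the likelihood function for μ from a sequence that stops at n is the same as if n had been fixed in advance, so the SLP deems the stopping rule irrelevant. The procedure’s error probability is another matter.

```python
import random
from math import sqrt

Z_CRIT = 1.96  # nominal two-sided 0.05 cutoff

def reject_fixed_n(rng, n):
    """Draw n observations from N(0, 1), so H0: mu = 0 is true, and test once."""
    total = sum(rng.gauss(0.0, 1.0) for _ in range(n))
    return abs(total) / sqrt(n) >= Z_CRIT  # z = sqrt(n) * xbar, since sigma = 1

def reject_trying_again(rng, max_n):
    """Same model, but test after every new observation and stop (reject)
    the first time |z| >= Z_CRIT; give up after max_n observations."""
    total = 0.0
    for n in range(1, max_n + 1):
        total += rng.gauss(0.0, 1.0)
        if abs(total) / sqrt(n) >= Z_CRIT:
            return True
    return False

rng = random.Random(2021)  # fixed seed so the run is reproducible
TRIALS = 1000
rate_fixed = sum(reject_fixed_n(rng, 100) for _ in range(TRIALS)) / TRIALS
rate_trying = sum(reject_trying_again(rng, 500) for _ in range(TRIALS)) / TRIALS
print(f"fixed n = 100:             {rate_fixed:.3f}")   # should be near 0.05
print(f"trying again, n up to 500: {rate_trying:.3f}")  # far above 0.05
```

With repeated looks, the chance of eventually reaching nominal significance when the null is true keeps climbing as the maximum sample size grows; that is the error-probability information the SLP sets aside.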

As in the last 9 years, I will post an imaginary dialogue with Allan Birnbaum at the stroke of midnight, New Year’s Eve, and this will be relevant for the talk.

The Phil Stat Forum schedule is at the Phil-Stat-Wars.com blog

Midnight With Birnbaum (Happy New Year 2019)!

Just as in the past 8 years since I’ve been blogging, I revisit that spot in the road at 9 p.m., just outside the Elbar Room, and look to get into a strange-looking taxi to head to “Midnight With Birnbaum”. (The pic on the left is the only blurry image I have of the club I’m taken to.) I wonder if the car will come for me this year, as I wait out in the cold, now that Statistical Inference as Severe Testing: How to Get Beyond the Statistics Wars (SIST 2018) has been out over a year. SIST doesn’t rehearse the argument from my Birnbaum article, but there’s much in it that I’d like to discuss with him. The (Strong) Likelihood Principle, whether or not it is named, remains at the heart of many of the criticisms of Neyman-Pearson (N-P) statistics (and cognate methods). 2019 was the 61st birthday of Cox’s “weighing machine” example, which was the basis of Birnbaum’s attempted proof. Yet as Birnbaum insisted, the “confidence concept” is the “one rock in a shifting scene” of statistical foundations, insofar as there’s interest in controlling the frequency of erroneous interpretations of data. (See my rejoinder.) Birnbaum bemoaned the lack of an explicit evidential interpretation of N-P methods. Maybe in 2020? Anyway, the cab is finally here… the rest is live. Happy New Year!



Midnight With Birnbaum (Happy New Year 2018)

Just as in the past 7 years since I’ve been blogging, I revisit that spot in the road at 9 p.m., just outside the Elbar Room, and look to get into a strange-looking taxi to head to “Midnight With Birnbaum”. (The pic on the left is the only blurry image I have of the club I’m taken to.) I wonder if the car will come for me this year, as I wait out in the cold, now that Statistical Inference as Severe Testing: How to Get Beyond the Statistics Wars (SIST) is out. SIST doesn’t rehearse the argument from my Birnbaum article, but there’s much in it that I’d like to discuss with him. The (Strong) Likelihood Principle, whether or not it is named, remains at the heart of many of the criticisms of Neyman-Pearson (N-P) statistics (and cognate methods). 2018 was the 60th birthday of Cox’s “weighing machine” example, which was the basis of Birnbaum’s attempted proof. Yet as Birnbaum insisted, the “confidence concept” is the “one rock in a shifting scene” of statistical foundations, insofar as there’s interest in controlling the frequency of erroneous interpretations of data. (See my rejoinder.) Birnbaum bemoaned the lack of an explicit evidential interpretation of N-P methods. Maybe in 2019? Anyway, the cab is finally here… the rest is live. Happy New Year!


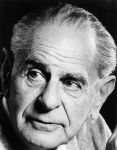

“Intentions (in your head)” is the code word for “error probabilities (of a procedure)”: Allan Birnbaum’s Birthday

Today is Allan Birnbaum’s birthday. Birnbaum’s (1962) classic “On the Foundations of Statistical Inference,” in Breakthroughs in Statistics (Volume I, 1993), concerns a principle that remains at the heart of today’s controversies in statistics, even if it isn’t obvious at first: the Likelihood Principle (LP) (also called the strong likelihood principle, SLP, to distinguish it from the weak LP [1]). According to the LP/SLP, given the statistical model, the information from the data is fully contained in the likelihood ratio. Thus, properties of the sampling distribution of the test statistic vanish (as I put it in my slides from this post)! But error probabilities are all properties of the sampling distribution. Thus, embracing the LP (SLP) blocks our error statistician’s direct ways of taking into account “biasing selection effects” (slide #10). [Posted earlier here.] Interestingly, as seen in a 2018 post on Neyman, Neyman did discuss this paper, but had an odd reaction that I’m not sure I understand. (Check it out.)

Midnight With Birnbaum (Happy New Year 2017)

Just as in the past 6 years since I’ve been blogging, I revisit that spot in the road at 11 p.m., just outside the Elbar Room, and look to get into a strange-looking taxi to head to “Midnight With Birnbaum”. (The pic on the left is the only blurry image I have of the club I’m taken to.) I wondered if the car would come for me this year, as I waited out in the cold, given that my Birnbaum article has been out since 2014. The (Strong) Likelihood Principle, whether or not it is named, remains at the heart of many of the criticisms of Neyman-Pearson (N-P) statistics (and cognate methods). 2018 will be the 60th birthday of Cox’s “weighing machine” example, which was the start of Birnbaum’s attempted proof. Yet as Birnbaum insisted, the “confidence concept” is the “one rock in a shifting scene” of statistical foundations, insofar as there’s interest in controlling the frequency of erroneous interpretations of data. (See my rejoinder.) Birnbaum bemoaned the lack of an explicit evidential interpretation of N-P methods. Maybe in 2018? Anyway, the cab is finally here… the rest is live. Happy New Year!


Midnight With Birnbaum (Happy New Year 2016)

Just as in the past 5 years since I’ve been blogging, I revisit that spot in the road at 11 p.m., just outside the Elbar Room, get into a strange-looking taxi, and head to “Midnight With Birnbaum”. (The pic on the left is the only blurry image I have of the club I’m taken to.) I wonder if the car will come for me this year, given that my Birnbaum article has been out since 2014… The (Strong) Likelihood Principle, whether or not it is named, remains at the heart of many of the criticisms of Neyman-Pearson (N-P) statistics (and cognate methods). Yet as Birnbaum insisted, the “confidence concept” is the “one rock in a shifting scene” of statistical foundations, insofar as there’s interest in controlling the frequency of erroneous interpretations of data. (See my rejoinder.) Birnbaum bemoaned the lack of an explicit evidential interpretation of N-P methods. Maybe in 2017? Anyway, it’s 6 hours later here, so I’m about to leave for that spot in the road… If I’m picked up, I’ll add an update at the end.


You know how in that (not-so) recent Woody Allen movie, “Midnight in Paris,” the main character (I forget who plays him; I saw it on a plane) is a writer finishing a novel, and he steps into a cab that mysteriously picks him up at midnight and transports him back in time, where he gets to run his work by such famous authors as Hemingway and Virginia Woolf? He is impressed when his work earns their approval, and he comes back each night in the same mysterious cab… Well, imagine an error statistical philosopher is picked up in a mysterious taxi at midnight (New Year’s Eve 2011, 2012, 2013, 2014, 2015, 2016) and is taken back fifty years and, lo and behold, finds herself in the company of Allan Birnbaum.[i] There are a couple of brief (12/31/14 & 15) updates at the end.

ERROR STATISTICIAN: It’s wonderful to meet you, Professor Birnbaum; I’ve always been extremely impressed with the important impact your work has had on philosophical foundations of statistics. I happen to be writing on your famous argument about the likelihood principle (LP). (whispers: I can’t believe this!)

BIRNBAUM: Ultimately you know I rejected the LP as failing to control the error probabilities needed for my Confidence concept.

Midnight With Birnbaum (Happy New Year)

Just as in the past 4 years since I’ve been blogging, I revisit that spot in the road at 11 p.m., just outside the Elbar Room, get into a strange-looking taxi, and head to “Midnight With Birnbaum”. (The pic on the left is the only blurry image I have of the club I’m taken to.) I wonder if the car will come for me this year, given that my Birnbaum article has been out since 2014… The (Strong) Likelihood Principle, whether or not it is named, remains at the heart of many of the criticisms of Neyman-Pearson (N-P) statistics (and cognate methods). Yet as Birnbaum insisted, the “confidence concept” is the “one rock in a shifting scene” of statistical foundations, insofar as there’s interest in controlling the frequency of erroneous interpretations of data. (See my rejoinder.) Birnbaum bemoaned the lack of an explicit evidential interpretation of N-P methods. Maybe in 2016? Anyway, it’s 6 hours later here, so I’m about to leave for that spot in the road…


You know how in that (not-so) recent Woody Allen movie, “Midnight in Paris,” the main character (I forget who plays him; I saw it on a plane) is a writer finishing a novel, and he steps into a cab that mysteriously picks him up at midnight and transports him back in time, where he gets to run his work by such famous authors as Hemingway and Virginia Woolf? He is impressed when his work earns their approval, and he comes back each night in the same mysterious cab… Well, imagine an error statistical philosopher is picked up in a mysterious taxi at midnight (New Year’s Eve 2011, 2012, 2013, 2014, 2015) and is taken back fifty years and, lo and behold, finds herself in the company of Allan Birnbaum.[i] There are a couple of brief (12/31/14 & 15) updates at the end.

ERROR STATISTICIAN: It’s wonderful to meet you, Professor Birnbaum; I’ve always been extremely impressed with the important impact your work has had on philosophical foundations of statistics. I happen to be writing on your famous argument about the likelihood principle (LP). (whispers: I can’t believe this!)

BIRNBAUM: Ultimately you know I rejected the LP as failing to control the error probabilities needed for my Confidence concept.

ERROR STATISTICIAN: Yes, but I actually don’t think your argument shows that the LP follows from such frequentist concepts as sufficiency (S) and the weak conditionality principle (WCP).[ii] Sorry… I know it’s famous…

BIRNBAUM: Well, I shall happily invite you to take any case that violates the LP and allow me to demonstrate that the frequentist is led to inconsistency, provided she also wishes to adhere to the WCP and sufficiency (although less than S is needed).

ERROR STATISTICIAN: Well, I happen to be a frequentist (error statistical) philosopher; I recently (2006) found a hole in your proof, er… well, I hope we can discuss it.

BIRNBAUM: Well, well, well: I’ll bet you a bottle of Elba Grease champagne that I can demonstrate it!

“Intentions” is the new code word for “error probabilities”: Allan Birnbaum’s Birthday

Today is Allan Birnbaum’s birthday. Birnbaum’s (1962) classic “On the Foundations of Statistical Inference,” in Breakthroughs in Statistics (Volume I, 1993), concerns a principle that remains at the heart of today’s controversies in statistics, even if it isn’t obvious at first: the Likelihood Principle (LP) (also called the strong likelihood principle, SLP, to distinguish it from the weak LP [1]). According to the LP/SLP, given the statistical model, the information from the data is fully contained in the likelihood ratio. Thus, properties of the sampling distribution of the test statistic vanish (as I put it in my slides from my last post)! But error probabilities are all properties of the sampling distribution. Thus, embracing the LP (SLP) blocks our error statistician’s direct ways of taking into account “biasing selection effects” (slide #10).

Intentions is a New Code Word: Where, then, is all the information regarding your trying and trying again, stopping when the data look good, cherry picking, barn hunting and data dredging? For likelihoodists and other probabilists who hold the LP/SLP, it is ephemeral information locked in your head reflecting your “intentions”! “Intentions” is a code word for “error probabilities” in foundational discussions, as in “who would want to take intentions into account?” (Replace “intentions” (or “the researcher’s intentions”) with “error probabilities” (or “the method’s error probabilities”) and you get a more accurate picture.) Keep this deciphering tool firmly in mind as you read criticisms of methods that take error probabilities into account[2]. For error statisticians, this information reflects real and crucial properties of your inference procedure.

Midnight With Birnbaum (Happy New Year)

Just as in the past 3 years since I’ve been blogging, I revisit that spot in the road at 11 p.m.*, just outside the Elbar Room, get into a strange-looking taxi, and head to “Midnight With Birnbaum”. I wonder if they’ll come for me this year, given that my Birnbaum article is out… This is what the place I am taken to looks like. [It’s 6 hours later here, so I’m about to leave…]


You know how in that (not-so) recent movie, “Midnight in Paris,” the main character (I forget who plays him; I saw it on a plane) is a writer finishing a novel, and he steps into a cab that mysteriously picks him up at midnight and transports him back in time, where he gets to run his work by such famous authors as Hemingway and Virginia Woolf? He is impressed when his work earns their approval, and he comes back each night in the same mysterious cab… Well, imagine an error statistical philosopher is picked up in a mysterious taxi at midnight (New Year’s Eve 2011, 2012, 2013, 2014) and is taken back fifty years and, lo and behold, finds herself in the company of Allan Birnbaum.[i] There are a couple of brief (12/31/14) updates at the end.

ERROR STATISTICIAN: It’s wonderful to meet you, Professor Birnbaum; I’ve always been extremely impressed with the important impact your work has had on philosophical foundations of statistics. I happen to be writing on your famous argument about the likelihood principle (LP). (whispers: I can’t believe this!)

BIRNBAUM: Ultimately you know I rejected the LP as failing to control the error probabilities needed for my Confidence concept.

ERROR STATISTICIAN: Yes, but I actually don’t think your argument shows that the LP follows from such frequentist concepts as sufficiency (S) and the weak conditionality principle (WCP).[ii] Sorry… I know it’s famous…

BIRNBAUM: Well, I shall happily invite you to take any case that violates the LP and allow me to demonstrate that the frequentist is led to inconsistency, provided she also wishes to adhere to the WCP and sufficiency (although less than S is needed).

ERROR STATISTICIAN: Well, I happen to be a frequentist (error statistical) philosopher; I recently (2006) found a hole in your proof, er… well, I hope we can discuss it.

BIRNBAUM: Well, well, well: I’ll bet you a bottle of Elba Grease champagne that I can demonstrate it!

Statistical Science: The Likelihood Principle issue is out…!

Abbreviated Table of Contents:


Here are some items for your Saturday-Sunday reading.


Link to complete discussion:

Mayo, Deborah G. On the Birnbaum Argument for the Strong Likelihood Principle (with discussion & rejoinder). Statistical Science 29 (2014), no. 2, 227-266.

Links to individual papers:

Mayo, Deborah G. On the Birnbaum Argument for the Strong Likelihood Principle. Statistical Science 29 (2014), no. 2, 227-239.

Dawid, A. P. Discussion of “On the Birnbaum Argument for the Strong Likelihood Principle”. Statistical Science 29 (2014), no. 2, 240-241.

Evans, Michael. Discussion of “On the Birnbaum Argument for the Strong Likelihood Principle”. Statistical Science 29 (2014), no. 2, 242-246.

Martin, Ryan; Liu, Chuanhai. Discussion: Foundations of Statistical Inference, Revisited. Statistical Science 29 (2014), no. 2, 247-251.

Fraser, D. A. S. Discussion: On Arguments Concerning Statistical Principles. Statistical Science 29 (2014), no. 2, 252-253.

Hannig, Jan. Discussion of “On the Birnbaum Argument for the Strong Likelihood Principle”. Statistical Science 29 (2014), no. 2, 254-258.

Bjørnstad, Jan F. Discussion of “On the Birnbaum Argument for the Strong Likelihood Principle”. Statistical Science 29 (2014), no. 2, 259-260.

Mayo, Deborah G. Rejoinder: “On the Birnbaum Argument for the Strong Likelihood Principle”. Statistical Science 29 (2014), no. 2, 261-266.

Abstract: An essential component of inference based on familiar frequentist notions, such as p-values, significance and confidence levels, is the relevant sampling distribution. This feature results in violations of a principle known as the strong likelihood principle (SLP), the focus of this paper. In particular, if outcomes $x^*$ and $y^*$ from experiments $E_1$ and $E_2$ (both with unknown parameter $\theta$) have different probability models $f_1(\cdot)$, $f_2(\cdot)$, then even though $f_1(x^*;\theta) = c\,f_2(y^*;\theta)$ for all $\theta$, outcomes $x^*$ and $y^*$ may have different implications for an inference about $\theta$. Although such violations stem from considering outcomes other than the one observed, we argue, this does not require us to consider experiments other than the one performed to produce the data. David Cox [Ann. Math. Statist. 29 (1958) 357–372] proposes the Weak Conditionality Principle (WCP) to justify restricting the space of relevant repetitions. The WCP says that once it is known which $E_i$ produced the measurement, the assessment should be in terms of the properties of $E_i$. The surprising upshot of Allan Birnbaum’s [J. Amer. Statist. Assoc. 57 (1962) 269–306] argument is that the SLP appears to follow from applying the WCP in the case of mixtures, and so uncontroversial a principle as sufficiency (SP). But this would preclude the use of sampling distributions. The goal of this article is to provide a new clarification and critique of Birnbaum’s argument. Although his argument purports that [(WCP and SP) entails SLP], we show how data may violate the SLP while holding both the WCP and SP. Such cases also refute [WCP entails SLP].
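What the WCP asks for can be seen in a Cox-style two-instrument mixture. The Python sketch below is a minimal illustration under stated assumptions, not a reconstruction of the paper’s argument: hypothetical instruments with σ = 1 and σ = 10, a fair coin choosing between them, and a single cutoff c on |x| tuned so that the unconditional type I error of testing μ = 0 is 0.05. The constants and helper names are invented for the example.

```python
from math import erfc, sqrt

SIGMA_PRECISE, SIGMA_IMPRECISE = 1.0, 10.0  # hypothetical instrument precisions
P_PRECISE = 0.5                             # a fair coin decides which instrument is used

def two_sided_tail(z):
    """P(|Z| > z) for a standard Normal Z."""
    return erfc(z / sqrt(2))

def overall_size(c):
    """Unconditional type I error of 'reject H0: mu = 0 when |x| > c',
    averaged over the coin toss that selects the instrument."""
    return (P_PRECISE * two_sided_tail(c / SIGMA_PRECISE)
            + (1 - P_PRECISE) * two_sided_tail(c / SIGMA_IMPRECISE))

# Bisection for the single cutoff c with overall size 0.05
# (overall_size decreases as c grows).
lo, hi = 0.0, 100.0
for _ in range(200):
    mid = (lo + hi) / 2
    if overall_size(mid) > 0.05:
        lo = mid
    else:
        hi = mid
c = (lo + hi) / 2

# Conditional error rates, given which instrument was actually used
size_if_precise = two_sided_tail(c / SIGMA_PRECISE)      # essentially 0
size_if_imprecise = two_sided_tail(c / SIGMA_IMPRECISE)  # about 0.10
print(f"c = {c:.3f}, overall = {overall_size(c):.3f}")
print(f"given precise: {size_if_precise:.2e}, given imprecise: {size_if_imprecise:.3f}")
```

The unconditional 0.05 averages a test that almost never errs with one that errs about 10% of the time. The WCP directs us to report the error rate of the instrument that actually produced the measurement (for instance, a cutoff of 1.96σ for that instrument). Birnbaum’s argument turns on combining this conditioning with sufficiency; the sketch does not model that step.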

Key words: Birnbaumization, likelihood principle (weak and strong), sampling theory, sufficiency, weak conditionality

Regular readers of this blog know that the topic of the “Strong Likelihood Principle (SLP)” has come up quite frequently. Numerous informal discussions of earlier attempts to clarify where Birnbaum’s argument for the SLP goes wrong may be found on this blog. [SEE PARTIAL LIST BELOW.[i]] These mostly stem from my initial paper Mayo (2010) [ii]. I’m grateful for the feedback.

In the months since this paper was accepted for publication, I’ve been asked, from time to time, to reflect informally on the overall journey: (1) Why was/is the Birnbaum argument so convincing for so long? (Are there points being overlooked, even now?) (2) What would Birnbaum have thought? (3) What is the likely upshot for the future of statistical foundations (if any)?

I’ll try to share some responses over the next week. (Naturally, additional questions are welcome.)

[i] A quick take on the argument may be found in the appendix to: “A Statistical Scientist Meets a Philosopher of Science: A conversation between David Cox and Deborah Mayo (as recorded, June 2011)”

- Midnight with Birnbaum (Happy New Year).

- New Version: On the Birnbaum argument for the SLP: Slides for my JSM talk.

- Don’t Birnbaumize that experiment my friend*–updated reblog.

- Allan Birnbaum, Philosophical Error Statistician: 27 May 1923 – 1 July 1976.

- LSE seminar

- A. Birnbaum: Statistical Methods in Scientific Inference

- ReBlogging the Likelihood Principle #2: Solitary Fishing: SLP Violations

- Putting the brakes on the breakthrough: An informal look at the argument for the Likelihood Principle.

- Forthcoming paper on the strong likelihood principle.

UPhils and responses

- U-PHIL: Gandenberger & Hennig : Blogging Birnbaum’s Proof

- U-Phil: Mayo’s response to Hennig and Gandenberger

- Mark Chang (now) gets it right about circularity

- U-Phil: Ton o’ Bricks

- Blogging (flogging?) the SLP: Response to Reply- Xi’an Robert

- U-Phil: J. A. Miller: Blogging the SLP

[ii] Mayo, D. G. (2010). “An Error in the Argument from Conditionality and Sufficiency to the Likelihood Principle” in Error and Inference: Recent Exchanges on Experimental Reasoning, Reliability and the Objectivity and Rationality of Science (D. Mayo and A. Spanos, eds.), Cambridge: Cambridge University Press: 305-14.

Allan Birnbaum, Philosophical Error Statistician: 27 May 1923 – 1 July 1976

Today is Allan Birnbaum’s Birthday. Birnbaum’s (1962) classic “On the Foundations of Statistical Inference” is in Breakthroughs in Statistics (Volume I, 1993). I’ve a hunch that Birnbaum would have liked my rejoinder to discussants of my forthcoming paper (Statistical Science): Bjornstad, Dawid, Evans, Fraser, Hannig, and Martin and Liu. I hadn’t realized until recently that all of this is up under “future papers” here [1]. You can find the rejoinder: STS1404-004RA0-2. That takes away some of the surprise of having it all come out at once (and in final form). For those unfamiliar with the argument, at the end of this entry are slides from a recent, entirely informal, talk that I never posted, as well as some links from this blog. Happy Birthday, Birnbaum!

Putting the brakes on the breakthrough: An informal look at the argument for the Likelihood Principle

On Friday, May 2, 2014, I will attempt to present my critical analysis of the Birnbaum argument for the (strong) Likelihood Principle in a way that is accessible to a general philosophy audience (flyer below). Can it be done? I don’t know yet; this is a first. It will consist of:

- Example 1: Trying and Trying Again: Optional stopping

- Example 2: Two instruments with different precisions [you shouldn’t get credit (or blame) for something you didn’t do]

- The Breakthrough: Birnbaumization

- Imaginary dialogue with Allan Birnbaum
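Example 2 alludes to the two-instrument case Cox (1958) used to motivate the WCP. The Python sketch below assumes illustrative numbers that do not come from the talk: a fair coin chooses between an instrument with σ = 1 and one with σ = 10, we test μ = 0 against μ > 0 from a single measurement, and one common cutoff c is chosen so the mixture as a whole has size 0.05. It shows how the unconditional error probability can misdescribe the instrument actually used.

```python
from math import erf, sqrt

def upper_tail(z):
    """P(Z > z) for a standard normal Z."""
    return 0.5 * (1 - erf(z / sqrt(2)))

# Assumed setup (illustrative, not from the talk): a fair coin picks the
# precise instrument (sigma = 1) or the imprecise one (sigma = 10).
SIGMAS = (1.0, 10.0)

def unconditional_alpha(c, sigmas=SIGMAS):
    """Type I error of 'reject if x > c', averaged over the coin flip."""
    return sum(upper_tail(c / s) for s in sigmas) / len(sigmas)

def cutoff_for(alpha, sigmas=SIGMAS, lo=0.0, hi=100.0):
    """Bisection for the common cutoff c giving unconditional size alpha."""
    for _ in range(200):
        mid = (lo + hi) / 2
        if unconditional_alpha(mid, sigmas) > alpha:
            lo = mid  # too many rejections: move the cutoff up
        else:
            hi = mid
    return (lo + hi) / 2

c = cutoff_for(0.05)
# Error probability conditional on which instrument was actually used (the WCP view).
conditional = [upper_tail(c / s) for s in SIGMAS]
print(c, conditional)
```

Under these assumptions the cutoff comes out near 12.8: the conditional error probability is essentially zero for the precise instrument and about 0.10 for the imprecise one, even though the mixture as a whole has size 0.05. The WCP says to report the error probability of the instrument that actually produced the measurement.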

The full paper is here. My discussion draws on several pieces that readers can explore further by searching this blog (e.g., under SLP, brakes, Birnbaum, and optional stopping). I will post slides afterwards.

Midnight With Birnbaum (Happy New Year)

Just as in the past 2 years since I’ve been blogging, I revisit that spot in the road, get into a strange-looking taxi, and head to “Midnight With Birnbaum”. There are a couple of brief (12/31/13) updates at the end.


You know how in that (not-so) recent movie, “Midnight in Paris,” the main character (I forget who plays it, I saw it on a plane) is a writer finishing a novel, and he steps into a cab that mysteriously picks him up at midnight and transports him back in time, where he gets to run his work by such famous authors as Hemingway and Virginia Woolf? He is impressed when his work earns their approval and he comes back each night in the same mysterious cab…Well, imagine an error statistical philosopher is picked up in a mysterious taxi at midnight (New Year’s Eve 2011, 2012, 2013) and is taken back fifty years and, lo and behold, finds herself in the company of Allan Birnbaum.[i]

ERROR STATISTICIAN: It’s wonderful to meet you Professor Birnbaum; I’ve always been extremely impressed with the important impact your work has had on philosophical foundations of statistics. I happen to be writing on your famous argument about the likelihood principle (LP). (whispers: I can’t believe this!)

BIRNBAUM: Ultimately you know I rejected the LP as failing to control the error probabilities needed for my Confidence concept.

ERROR STATISTICIAN: Yes, but I actually don’t think your argument shows that the LP follows from such frequentist concepts as sufficiency S and the weak conditionality principle WCP.[ii] Sorry,…I know it’s famous…

BIRNBAUM: Well, I shall happily invite you to take any case that violates the LP and allow me to demonstrate that the frequentist is led to inconsistency, provided she also wishes to adhere to the WCP and sufficiency (although less than S is needed).

ERROR STATISTICIAN: Well I happen to be a frequentist (error statistical) philosopher; I have recently (2006) found a hole in your proof,..er…well I hope we can discuss it.

BIRNBAUM: Well, well, well: I’ll bet you a bottle of Elba Grease champagne that I can demonstrate it!

ERROR STATISTICAL PHILOSOPHER: It is a great drink, I must admit that: I love lemons.

BIRNBAUM: OK. (A waiter brings a bottle, they each pour a glass and resume talking). Whoever wins this little argument pays for this whole bottle of vintage Ebar or Elbow or whatever it is Grease.

ERROR STATISTICAL PHILOSOPHER: I really don’t mind paying for the bottle.

BIRNBAUM: Good, you will have to. Take any LP violation. Let x’ be a 2-standard-deviation difference from the null (asserting μ = 0) in testing a normal mean from the fixed sample size experiment E’, say n = 100; and let x” be a 2-standard-deviation difference from an optional stopping experiment E”, which happens to stop at 100. Do you agree that:

(0) For a frequentist, outcome x’ from E’ (fixed sample size) is NOT evidentially equivalent to x” from E” (optional stopping that stops at n)

ERROR STATISTICAL PHILOSOPHER: Yes, that’s a clear case where we reject the strong LP, and it makes perfect sense to distinguish their corresponding p-values (which we can write as p’ and p”, respectively). The searching in the optional stopping experiment makes the p-value quite a bit higher than with the fixed sample size. For n = 100, data x’ yields p’ ≈ .05, while p” is ≈ .3. Clearly, p’ is not equal to p”; I don’t see how you can make them equal.
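The contrast between p’ and p” can be checked by simulation. The Python sketch below assumes one particular stopping rule (after each standard normal observation, stop as soon as |√n x̄| ≥ 2, with at most 100 observations) and estimates, under the null, how often that rule ever produces a 2-standard-deviation result; the fixed-sample figure is the exact two-sided tail area. The rule, the seed, and the number of simulations are assumptions for illustration, so the estimate need not match the dialogue’s ≈ .3 exactly.

```python
import math
import random

def stops_by(n_max, z_crit, rng):
    """One optional-stopping run under H0 (mu = 0, sigma = 1).
    Observe one value at a time; stop as soon as |sqrt(n) * xbar| >= z_crit.
    Returns True if the rule stops (declares a 'significant' result) by n_max."""
    total = 0.0
    for n in range(1, n_max + 1):
        total += rng.gauss(0.0, 1.0)
        if abs(total) / math.sqrt(n) >= z_crit:  # sqrt(n) * xbar = total / sqrt(n)
            return True
    return False

def optional_stopping_error(n_max=100, z_crit=2.0, sims=10_000, seed=1):
    """Monte Carlo estimate of P(rule ever reaches |z| >= z_crit; H0), i.e. p''."""
    rng = random.Random(seed)
    hits = sum(stops_by(n_max, z_crit, rng) for _ in range(sims))
    return hits / sims

def fixed_sample_error(z_crit=2.0):
    """Two-sided P(|Z| >= z_crit) for the fixed-n test, i.e. p'."""
    return math.erfc(z_crit / math.sqrt(2))

print(fixed_sample_error())       # nominal p' for a 2-SD result
print(optional_stopping_error())  # simulated p'' under the assumed rule
```

The fixed-sample value is about .0455, while the optional-stopping estimate comes out several times larger; that inflation is what the error statistician means by saying the searching raises p”.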

Forthcoming paper on the strong likelihood principle

My paper, “On the Birnbaum Argument for the Strong Likelihood Principle” has been accepted by Statistical Science. The latest version is here. (It differs from all versions posted anywhere). If you spot any typos, please let me know (error@vt.edu). If you can’t open this link, please write to me and I’ll send it directly. As always, comments and queries are welcome.


I appreciate the considerable feedback on the SLP I have received on this blog. Interested readers may search this blog for quite a lot of discussion of the SLP (e.g., here and here), including links to the central papers, “U-Phils” (commentaries) by others (e.g., here, here, and here), and amusing notes (e.g., Don’t Birnbaumize that experiment my friend, and Midnight with Birnbaum), and more.

Abstract: An essential component of inference based on familiar frequentist notions, such as p-values, significance and confidence levels, is the relevant sampling distribution. This feature results in violations of a principle known as the strong likelihood principle (SLP), the focus of this paper. In particular, if outcomes x∗ and y∗ from experiments E1 and E2 (both with unknown parameter θ), have different probability models f1( . ), f2( . ), then even though f1(x∗; θ) = cf2(y∗; θ) for all θ, outcomes x∗ and y∗ may have different implications for an inference about θ. Although such violations stem from considering outcomes other than the one observed, we argue, this does not require us to consider experiments other than the one performed to produce the data. David Cox (1958) proposes the Weak Conditionality Principle (WCP) to justify restricting the space of relevant repetitions. The WCP says that once it is known which Ei produced the measurement, the assessment should be in terms of the properties of Ei. The surprising upshot of Allan Birnbaum’s (1962) argument is that the SLP appears to follow from applying the WCP in the case of mixtures, and so uncontroversial a principle as sufficiency (SP). But this would preclude the use of sampling distributions. The goal of this article is to provide a new clarification and critique of Birnbaum’s argument. Although his argument purports that [(WCP and SP), entails SLP], we show how data may violate the SLP while holding both the WCP and SP. Such cases also refute [WCP entails SLP].

Key words: Birnbaumization, likelihood principle (weak and strong), sampling theory, sufficiency, weak conditionality

Gandenberger: How to Do Philosophy That Matters (guest post)

Greg Gandenberger


Philosopher of Science

University of Pittsburgh

gandenberger.org

Genuine philosophical problems are always rooted in urgent problems outside philosophy,

and they die if these roots decay

Karl Popper (1963, 72)

My concern in this post is how we philosophers can use our skills to do work that matters to people both inside and outside of philosophy.

Philosophers are highly skilled at conceptual analysis, in which one takes an interesting but unclear concept and attempts to state precisely when it applies and when it doesn’t.

What is the point of this activity? In many cases, this question has no satisfactory answer. Conceptual analysis becomes an end in itself, and philosophical debates become fruitless arguments about words. The pleasure we philosophers take in such arguments hardly warrants scarce government and university resources. It does provide good training in critical thinking, but so do many other activities that are also immediately useful, such as doing science and programming computers.

Conceptual analysis does not have to be pointless. It is often prompted by a real-world problem. In Plato’s Euthyphro, for instance, the character Euthyphro thought that piety required him to prosecute his father for murder. His family thought on the contrary that for a son to prosecute his own father was the height of impiety. In this situation, the question “what is piety?” took on great urgency. It also had great urgency for Socrates, who was awaiting trial for corrupting the youth of Athens.

In general, conceptual analysis often begins as a response to some question about how we ought to regulate our beliefs or actions. It can be a fruitful activity as long as the questions that prompted it are kept in view. It tends to degenerate into merely verbal disputes when it becomes an end in itself.

The kind of goal-oriented view of conceptual analysis I aim to articulate and promote is not teleosemantics: it is a view about how philosophy should be done rather than a theory of meaning. It is consistent with Carnap’s notion of explication (one of the desiderata of which is fruitfulness) (Carnap 1963, 5), but in practice Carnapian explication seems to devolve into idle word games just as easily as conceptual analysis. Our overriding goal should not be fidelity to intuitions, precision, or systematicity, but usefulness.

How I Became Suspicious of Conceptual Analysis

When I began working on proofs of the Likelihood Principle, I assumed that following my intuitions about the concept of “evidential equivalence” would lead to insights about how science should be done. Birnbaum’s proof showed me that my intuitions entail the Likelihood Principle, which frequentist methods violate. Voila! Scientists shouldn’t use frequentist methods. All that remained to be done was to fortify Birnbaum’s proof, as I do in “A New Proof of the Likelihood Principle,” by defending it against objections and buttressing it with an alternative proof. [Editor: For a number of related materials on this blog see Mayo’s JSM presentation, and note [i].]

After working on this topic for some time, I realized that I was making simplistic assumptions about the relationship between conceptual intuitions and methodological norms. At most, a proof of the Likelihood Principle can show you that frequentist methods run contrary to your intuitions about evidential equivalence. Even if those intuitions are true, it does not follow immediately that scientists should not use frequentist methods. The ultimate aim of science, presumably, is not to respect evidential equivalence but (roughly) to learn about the world and make it better. The demand that scientists use methods that respect evidential equivalence is warranted only insofar as it is conducive to achieving those ends. Birnbaum’s proof says nothing about that issue.

In general, a conceptual analysis, even of a normatively freighted term like “evidence,” is never enough by itself to justify a normative claim. The questions that ultimately matter are not about “what we mean” when we use particular words and phrases, but rather about what our aims are and how we can best achieve them.

How to Do Conceptual Analysis Teleologically

This is not to say that my work on the Likelihood Principle or conceptual analysis in general is without value. But it is nothing more than a kind of careful lexicography. This kind of work is potentially useful for clarifying normative claims with the aim of assessing and possibly implementing them. To do work that matters, philosophers engaged in conceptual analysis need to take enough interest in the assessment and implementation stages to do their conceptual analysis with the relevant normative claims in mind.

So what does this kind of teleological (goal-oriented) conceptual analysis look like?

It can involve personally following through on the process of assessing and implementing the relevant norms. For example, philosophers at Carnegie Mellon University working on causation have not only provided a kind of analysis of the concept of causation but also developed algorithms for causal discovery, proved theorems about those algorithms, and applied those algorithms to contemporary scientific problems (see e.g. Spirtes et al. 2000).

I have great respect for this work. But doing conceptual analysis teleologically does not have to mean going so far outside the traditional bounds of philosophy. A perfect example is James Woodward’s related work on causal explanation, which he describes as follows (2003, 7-8, original emphasis):

My project…makes recommendations about what one ought to mean by various causal and explanatory claims, rather than just attempting to describe how we use those claims. It recognizes that causal and explanatory claims sometimes are confused, unclear, and ambiguous and suggests how those limitations might be addressed…. we introduce concepts…and characterize them in certain ways…because we want to do things with them…. Concepts can be well or badly designed for such purposes, and we can evaluate them accordingly.

Woodward keeps his eye on what the notion of causation is for, namely distinguishing between relationships that do and relationships that do not remain invariant under interventions. This distinction is enormously important because only relationships that remain invariant under interventions provide “handles” we can use to change the world.
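Woodward’s distinction can be illustrated with a toy simulation. The Python sketch assumes a hypothetical linear model in which X causes Y (Y = 2X + noise); `do_x` and `do_y` are illustrative stand-ins for interventions that set a variable directly, cutting it off from its usual causes. Nothing here is taken from Woodward or from the post; it only shows what “invariant under interventions” amounts to in the simplest case.

```python
import random
import statistics

def sample(n, rng, do_x=None, do_y=None):
    """Draw n cases from the hypothetical model X ~ N(0, 1), Y = 2X + N(0, 1).
    Passing do_x (or do_y) replaces that variable's mechanism with an intervention."""
    xs, ys = [], []
    for _ in range(n):
        x = rng.gauss(0, 1) if do_x is None else do_x()
        y = (2 * x + rng.gauss(0, 1)) if do_y is None else do_y()
        xs.append(x)
        ys.append(y)
    return xs, ys

def slope(u, v):
    """Least-squares slope from regressing v on u."""
    mu, mv = statistics.fmean(u), statistics.fmean(v)
    num = sum((a - mu) * (b - mv) for a, b in zip(u, v))
    den = sum((a - mu) ** 2 for a in u)
    return num / den

rng = random.Random(0)
obs_x, obs_y = sample(20_000, rng)                                    # no intervention
int_x, int_y = sample(20_000, rng, do_x=lambda: rng.uniform(-3, 3))   # set X directly
doy_x, doy_y = sample(20_000, rng, do_y=lambda: rng.uniform(-3, 3))   # set Y directly

print(slope(obs_x, obs_y), slope(int_x, int_y))  # both close to 2: invariant
print(slope(obs_y, obs_x), slope(doy_y, doy_x))  # about 0.4, then about 0: not invariant
```

Only the first relationship is a “handle” in Woodward’s sense: intervening on X changes Y as the observational slope predicts, while intervening on Y leaves X untouched even though X and Y were correlated.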

Here are some lessons about teleological conceptual analysis that we can take from Woodward’s work. (I’m sure this list could be expanded.)

- Teleological conceptual analysis puts us in charge. In his wonderful presidential address at the 2012 meeting of the Philosophy of Science Association, Woodward ended a litany of metaphysical arguments against regarding mental events as causes by asking “Who’s in charge here?” There is no ideal form of Causation to which we must answer. We are free to decide to use “causation” and related words in the ways that best serve our interests.

- Teleological conceptual analysis can be revisionary. If ordinary usage is not optimal, we can change it.

- The product of a teleological conceptual analysis need not be unique. Some philosophers reject Woodward’s account because they regard causation as a process rather than as a relationship among variables. But why do we need to choose? There could just be two different notions of causation. Woodward’s account captures one notion that is very important in science and everyday life. If it captures all of the causal notions that are important, then so much the better. But this kind of comprehensiveness is not essential.

- Teleological conceptual analysis can be non-reductive. Woodward characterizes causal relations as (roughly) correlation relations that are invariant under certain kinds of interventions. But the notion of an intervention is itself causal. Woodward’s account is not circular because it characterizes what it means for a causal relationship to hold between two variables in terms of different causal processes involving different sets of variables. But it is non-reductive in the sense that it does not allow us to replace causal claims with equivalent non-causal claims (as, e.g., counterfactual, regularity, probabilistic, and process theories purport to do). This fact is a problem if one’s primary concern is to reduce one’s ultimate metaphysical commitments, but it is not necessarily a problem if one’s primary concern is to improve our ability to assess and use causal claims.

Conclusion

Philosophers rarely succeed in capturing all of our intuitions about an important informal concept. Even if they did succeed, they would have more work to do in justifying any norms that invoke that concept. Conceptual analysis can be a first step toward doing philosophy that matters, but it needs to be undertaken with the relevant normative claims in mind.

Question: What are your best examples of philosophy that matters? What can we learn from them?

Citations

- Birnbaum, Allan. “On the Foundations of Statistical Inference.” Journal of the American Statistical Association 57.298 (1962): 269-306.

- Carnap, Rudolf. Logical Foundations of Probability. U of Chicago Press, 1963.

- Gandenberger, Greg. “A New Proof of the Likelihood Principle.” The British Journal for the Philosophy of Science (forthcoming).

- Plato. Euthyphro. http://classics.mit.edu/Plato/euthyfro.html.

- Popper, Karl. Conjectures and Refutations. London: Routledge & Kegan Paul, 1963.

- Spirtes, Peter, Clark Glymour, and Richard Scheines. Causation, Prediction, and Search. Vol. 81. The MIT Press, 2000.

- Woodward, James. Making Things Happen: A Theory of Causal Explanation. Oxford University Press, 2003.

[i] Earlier posts are here and here. Some U-Phils are here, here, and here. For some amusing notes, see, e.g., “Don’t Birnbaumize that experiment my friend” and “Midnight with Birnbaum.”

Some related papers:

- Mayo, D. G. (2012). “Statistical Science Meets Philosophy of Science Part 2: Shallow versus Deep Explorations”, Rationality, Markets, and Morals (RMM) 3, Special Topic: Statistical Science and Philosophy of Science, 71–107.

- Mayo, D. G. (2011) “Statistical Science and Philosophy of Science: Where Do/Should They Meet in 2011 (and beyond).” Rationality, Markets and Morals (RMM) 2, Special Topic: Statistical Science and Philosophy of Science, 79–102.

- Mayo, D. G. (2010). “An Error in the Argument from Conditionality and Sufficiency to the Likelihood Principle” in Error and Inference: Recent Exchanges on Experimental Reasoning, Reliability and the Objectivity and Rationality of Science (D. Mayo and A. Spanos, eds.), Cambridge: Cambridge University Press: 305-14.

- Cox, D. R. and Mayo, D. G. (2010). “Objectivity and Conditionality in Frequentist Inference” in Error and Inference: Recent Exchanges on Experimental Reasoning, Reliability and the Objectivity and Rationality of Science (D. Mayo and A. Spanos, eds.), Cambridge: Cambridge University Press: 276-304.