An essential component of inference based on familiar frequentist notions (p-values, significance and confidence levels) is the relevant sampling distribution (hence the term sampling theory, or my preferred term, error statistics, since error probabilities are obtained from the sampling distribution). This feature results in violations of a principle known as the strong likelihood principle (SLP). To state it roughly, the SLP asserts that all the evidential import in the data (for parametric inference within a model) resides in the likelihoods. If accepted, it would render error probabilities irrelevant post data.

SLP (We often drop the “strong” and just call it the LP. The “weak” LP just boils down to sufficiency.)

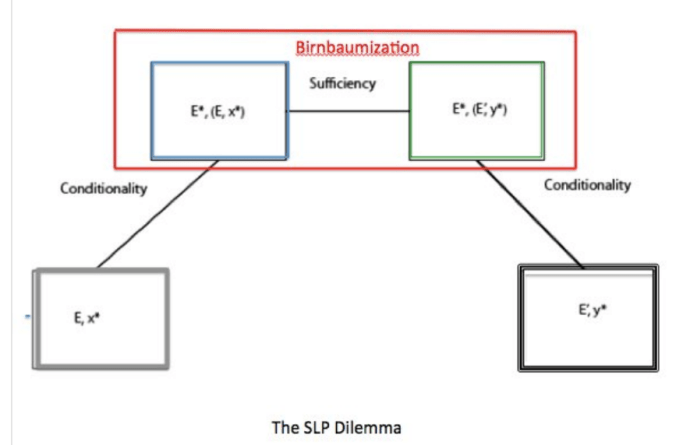

For any two experiments E1 and E2 with different probability models f1, f2, but with the same unknown parameter θ, if outcomes x* and y* (from E1 and E2 respectively) determine the same (i.e., proportional) likelihood function ($f_1(x^*;\theta) = c\,f_2(y^*;\theta)$ for all θ, for some positive constant c), then x* and y* are inferentially equivalent (for an inference about θ).

(What differentiates the weak and the strong LP is that the weak refers to a single experiment.)

Violation of SLP:

An SLP violation occurs whenever outcomes x* and y* from experiments E1 and E2, with different probability models f1, f2 but the same unknown parameter θ, satisfy $f_1(x^*;\theta) = c\,f_2(y^*;\theta)$ for all θ, and yet have different implications for an inference about θ.

For an example of an SLP violation, E1 might be sampling from a Normal distribution with a fixed sample size n, and E2 the corresponding experiment that uses an optional stopping rule: keep sampling until you obtain a result 2 standard deviations away from a null hypothesis that θ = 0 (that is, a sample mean at least 2σ/√n from 0, where for simplicity the standard deviation σ is known). When you do, stop and reject the point null (in 2-sided testing).
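A minimal Python sketch of why the two likelihoods agree, assuming σ = 1 and the 2-standard-deviation boundary described above (all function names here are hypothetical, not from any library). Under E2, the density of an observed path is the product of the normal densities times an indicator that the rule first fires at the observed n; because that indicator depends only on the data, never on θ, the two likelihood functions coincide, with c = 1.

```python
import math
import random

K = 2.0      # stop when the sample mean is K standard deviations (K*sigma/sqrt(n)) from 0
SIGMA = 1.0  # known standard deviation (assumed)

def normal_loglik(xs, theta, sigma=SIGMA):
    """Log-likelihood of i.i.d. N(theta, sigma^2) observations xs."""
    return sum(-0.5 * math.log(2 * math.pi * sigma ** 2)
               - (x - theta) ** 2 / (2 * sigma ** 2) for x in xs)

def first_stop(xs, k=K, sigma=SIGMA):
    """First n with |mean of xs[:n]| >= k*sigma/sqrt(n); None if never."""
    total = 0.0
    for n, x in enumerate(xs, start=1):
        total += x
        if abs(total / n) >= k * sigma / math.sqrt(n):
            return n
    return None

def loglik_fixed(xs, theta):
    """E1: the sample size n = len(xs) was fixed in advance."""
    return normal_loglik(xs, theta)

def loglik_optional_stopping(xs, theta):
    """E2: normal density times the indicator that the rule first fires
    at n = len(xs). The indicator involves the data only, not theta."""
    if first_stop(xs) != len(xs):
        return float("-inf")  # this path cannot be an outcome of E2
    return normal_loglik(xs, theta)

def sample_stopped_path(theta, rng, max_n=1000):
    """Draw observations until the rule fires; retry if max_n is reached."""
    while True:
        xs, total = [], 0.0
        for n in range(1, max_n + 1):
            x = rng.gauss(theta, SIGMA)
            xs.append(x)
            total += x
            if abs(total / n) >= K * SIGMA / math.sqrt(n):
                return xs

rng = random.Random(1)
xs = sample_stopped_path(theta=0.0, rng=rng)  # data generated with the null true
print("stopped at n =", len(xs))
for theta in (-1.0, -0.5, 0.0, 0.5, 1.0):
    # log f1 - log f2 is the same constant (0) for every theta
    print(theta, loglik_fixed(xs, theta) - loglik_optional_stopping(xs, theta))
```

Whatever the seed, the difference printed is identical across θ, which is exactly the SLP's antecedent.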

The SLP tells us (in relation to the optional stopping rule) that once you have observed a 2-standard-deviation result, there should be no evidential difference between its having arisen from experiment E1, where n was fixed, say, at 100, and experiment E2, where the stopping rule happens to stop at n = 100. For the error statistician, by contrast, there is a difference, and this constitutes a violation of the SLP.
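Where the error statistician locates the difference can be sketched with a small simulation. It assumes σ = 1 and, as a hypothetical simplification, caps E2 at N = 100 observations; without a cap, the rule eventually fires with probability 1 even when θ = 0. E1's two-sided error probability for a 2-standard-deviation result is computed exactly, while E2's probability of stopping and rejecting a true null by n = 100 is estimated by Monte Carlo (the names and the seed are illustrative choices).

```python
import math
import random

K = 2.0   # boundary: |z| >= 2, i.e. sample mean 2 standard deviations from 0
N = 100   # hypothetical cap on E2's sample size (also E1's fixed n)

def fixed_n_error(k=K):
    """E1: P(|Z| >= k) when theta = 0, computed exactly."""
    return math.erfc(k / math.sqrt(2))

def optional_stopping_error(n_max=N, k=K, reps=10_000, seed=0):
    """E2: Monte Carlo estimate of P(rule fires by n_max | theta = 0)."""
    rng = random.Random(seed)
    rejections = 0
    for _ in range(reps):
        total = 0.0
        for n in range(1, n_max + 1):
            total += rng.gauss(0.0, 1.0)        # sigma = 1 assumed known
            if abs(total) / math.sqrt(n) >= k:  # z = mean / (1 / sqrt(n))
                rejections += 1
                break
    return rejections / reps

e1 = fixed_n_error()
e2 = optional_stopping_error()
print(f"E1 (fixed n = {N}): P(reject | theta = 0) = {e1:.4f}")
print(f"E2 (stop by n = {N}): P(reject | theta = 0) ~ {e2:.3f}")
```

The first figure is about 0.046; the estimate for E2 comes out several times larger, even though a path stopping at n = 100 has the same likelihood function under both experiments.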

———————-

Now for the surprising part. Remember the 60-year-old chestnut from my last post, where a coin is flipped to decide which of two experiments to perform? David Cox (1958) proposes something called the Weak Conditionality Principle (WCP) to restrict the space of relevant repetitions for frequentist inference. The WCP says that once it is known which Ei produced the measurement, the assessment should be in terms of the properties of the particular Ei. Nothing could be more obvious.
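The point of the chestnut can be made concrete with a short sketch. Assume (hypothetically) that the coin chooses between a precise instrument with σ = 1 and an imprecise one with σ = 10, and that we report the interval x ± c, with c chosen so that coverage is 95% averaged over the coin flip. The code finds c by bisection, then computes the coverage conditional on each instrument; the σ values and function names are illustrative assumptions.

```python
import math

# Hypothetical instruments: a fair coin picks sigma = 1 or sigma = 10.
SIGMAS = (1.0, 10.0)

def coverage(c, sigma):
    """P(|X - theta| <= c) when X ~ N(theta, sigma^2)."""
    return math.erf(c / (sigma * math.sqrt(2)))

def mixture_coverage(c):
    """Unconditional coverage of x +/- c, averaging over the coin flip."""
    return sum(0.5 * coverage(c, s) for s in SIGMAS)

def half_width_for(target=0.95, lo=0.0, hi=100.0, iters=200):
    """Bisection for the c whose unconditional coverage equals target."""
    for _ in range(iters):
        mid = (lo + hi) / 2
        if mixture_coverage(mid) < target:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2

c = half_width_for()
print(f"c = {c:.3f}")
print(f"unconditional coverage:     {mixture_coverage(c):.3f}")
print(f"given precise instrument:   {coverage(c, 1.0):.6f}")
print(f"given imprecise instrument: {coverage(c, 10.0):.3f}")
```

The interval is 95% "on average", yet essentially 100% when the precise instrument was used and 90% when the imprecise one was. The WCP says to report the latter, conditional figures: those of the Ei actually performed.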

The surprising upshot of Allan Birnbaum’s (1962) argument is that the SLP appears to follow from applying the WCP in the case of mixtures, together with so uncontroversial a principle as sufficiency (SP). But this would preclude the use of sampling distributions. J. Savage calls Birnbaum’s argument “a landmark in statistics” (see [i]).

Although his argument purports to show that [(WCP and SP) entails SLP], I show how data may violate the SLP while both the WCP and SP hold. Such cases directly refute [WCP entails SLP].

In his argument, Birnbaum introduces an informal, and rather vague, notion of the “evidence (or evidential meaning) of an outcome z from experiment E”, which he writes Ev(E,z).

In my formulation of the argument, I introduce a new symbol to represent a function from a given experiment-outcome pair (E,z) to a generic inference implication. It (hopefully) lets us be clearer than Ev does.

$(E,z) \Rightarrow \mathrm{Infr}_E(z)$ is to be read “the inference implication from outcome z in experiment E” (according to whatever inference type/school is being discussed).
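For readers who want the steps in symbols, here is a compact rendering of Birnbaum's argument in the Infr notation. It follows a common reconstruction rather than Birnbaum's own notation; the mixture $E_B$ and the statistic $T_B$ are labels introduced here for illustration. Take E1, E2 and outcomes x*, y* with $f_1(x^*;\theta) = c\,f_2(y^*;\theta)$ for all θ, and let $E_B$ flip a fair coin to choose E1 or E2, recording $(E_i, z)$:

$$
\begin{aligned}
&T_B(E_i, z) =
\begin{cases}
(E_1, x^*) & \text{if } (E_i, z) \in \{(E_1, x^*),\,(E_2, y^*)\},\\
(E_i, z) & \text{otherwise;}
\end{cases}\\[4pt]
&\text{(SP, as } T_B \text{ is sufficient in } E_B\text{):}\quad \mathrm{Infr}_{E_B}(E_1, x^*) = \mathrm{Infr}_{E_B}(E_2, y^*);\\[4pt]
&\text{(WCP):}\quad \mathrm{Infr}_{E_B}(E_1, x^*) = \mathrm{Infr}_{E_1}(x^*), \qquad \mathrm{Infr}_{E_B}(E_2, y^*) = \mathrm{Infr}_{E_2}(y^*);\\[4pt]
&\text{hence:}\quad \mathrm{Infr}_{E_1}(x^*) = \mathrm{Infr}_{E_2}(y^*), \text{ which is the SLP.}
\end{aligned}
$$

$T_B$ is sufficient because, given $T_B = (E_1, x^*)$, the probability that the outcome was $(E_1, x^*)$ rather than $(E_2, y^*)$ is $c/(c+1)$, free of θ. The question pressed in this post is whether the SP line and the WCP line can both be applied to an SLP-violating pair.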

An outline of my argument is in the slides for a talk below:

Binge reading the Likelihood Principle.

If you’re keen to binge-read the SLP (a way to break the holiday/winter break doldrums), I’ve pasted most of the early historical sources before the slides. The argument is simple; showing what’s wrong with it took a long time. My earliest treatment, via counterexample, is in Mayo (2010). A deeper argument is in Mayo (2014) in Statistical Science.[ii] An intermediate paper, Mayo (2013), corresponds to the slides below, which were presented at the JSM. Interested readers may search this blog for quite a lot of discussion of the SLP, including “U-Phils” (discussions by readers) (e.g., here, and here), and amusing notes (e.g., Don’t Birnbaumize that experiment my friend, and Midnight with Birnbaum).

You may not wish to engage in what looks to be (and is) a rather convoluted logical argument. That’s fine, but just remember that when someone says “it’s been proved mathematically” that error probabilities are irrelevant to evidence post data, you can say, “I read somewhere that this has been disproved”.

—–

[i] Savage on Birnbaum: “This paper is a landmark in statistics. . . . I, myself, like other Bayesian statisticians, have been convinced of the truth of the likelihood principle for a long time. Its consequences for statistics are very great. . . . [T]his paper is really momentous in the history of statistics. It would be hard to point to even a handful of comparable events. …once the likelihood principle is widely recognized, people will not long stop at that halfway house but will go forward and accept the implications of personalistic probability for statistics” (Savage 1962, 307-308).

The argument purports to follow from principles frequentist error statisticians accept.

[ii] The link includes comments on my paper by Bjornstad, Dawid, Evans, Fraser, Hannig, and Martin and Liu, and my rejoinder.

Birnbaum Papers:

- Birnbaum, A. (1962), “On the Foundations of Statistical Inference”, Journal of the American Statistical Association 57(298), 269-306.

- Savage, L. J., Barnard, G., Cornfield, J., Bross, I., Box, G., Good, I., Lindley, D., Clunies-Ross, C., Pratt, J., Levene, H., Goldman, T., Dempster, A., Kempthorne, O., and Birnbaum, A. (1962), “Discussion on Birnbaum’s On the Foundations of Statistical Inference”, Journal of the American Statistical Association 57(298), 307-326.

- Birnbaum, A. (1970), “Statistical Methods in Scientific Inference” (letter to the editor), Nature 225, 1033.

- Birnbaum, A. (1972), “More on Concepts of Statistical Evidence”, Journal of the American Statistical Association 67(340), 858-861.

Note to Reader: If you look at the “discussion”, you can already see Birnbaum backtracking a bit, in response to Pratt’s comments.

Some additional early discussion papers:

Durbin:

- Durbin, J. (1970), “On Birnbaum’s Theorem on the Relation Between Sufficiency, Conditionality and Likelihood”, Journal of the American Statistical Association, Vol. 65, No. 329 (Mar., 1970), pp. 395-398.

- Savage, L. J., (1970), “Comments on a Weakened Principle of Conditionality”, Journal of the American Statistical Association, Vol. 65, No. 329 (Mar., 1970), pp. 399-401.

- Birnbaum, A. (1970), “On Durbin’s Modified Principle of Conditionality”, Journal of the American Statistical Association, Vol. 65, No. 329 (Mar., 1970), pp. 402-403.

There’s also a good discussion in Cox and Hinkley (1974).

Evans, Fraser, and Monette:

- Evans, M., Fraser, D.A., and Monette, G., (1986), “On Principles and Arguments to Likelihood.” The Canadian Journal of Statistics 14: 181-199.

Kalbfleisch:

- Kalbfleisch, J. D. (1975), “Sufficiency and Conditionality”, Biometrika, Vol. 62, No. 2 (Aug., 1975), pp. 251-259.

- Barnard, G. A., (1975), “Comments on Paper by J. D. Kalbfleisch”, Biometrika, Vol. 62, No. 2 (Aug., 1975), pp. 260-261.

- Barndorff-Nielsen, O. (1975), “Comments on Paper by J. D. Kalbfleisch”, Biometrika, Vol. 62, No. 2 (Aug., 1975), pp. 261-262.

- Birnbaum, A. (1975), “Comments on Paper by J. D. Kalbfleisch”, Biometrika, Vol. 62, No. 2 (Aug., 1975), pp. 262-264.

- Kalbfleisch, J. D. (1975), “Reply to Comments”, Biometrika, Vol. 62, No. 2 (Aug., 1975), p. 268.

My discussions:

- Mayo, D. G. (2010). “An Error in the Argument from Conditionality and Sufficiency to the Likelihood Principle”, in Error and Inference: Recent Exchanges on Experimental Reasoning, Reliability and the Objectivity and Rationality of Science (D. Mayo and A. Spanos, eds.), Cambridge: Cambridge University Press: 305-14.

- Mayo, D. G. (2013) “Presented Version: On the Birnbaum Argument for the Strong Likelihood Principle”, in JSM Proceedings, Section on Bayesian Statistical Science. Alexandria, VA: American Statistical Association: 440-453.

- Mayo, D. G. (2014). “On the Birnbaum Argument for the Strong Likelihood Principle” (paper with discussion and Mayo rejoinder), Statistical Science 29(2), pp. 227-239, 261-266.

Some content on this page was disabled on September 23, 2022 as a result of a DMCA takedown notice from Ithaka.

I’ve added a diagram, obtained from Christian Robert’s blog, from when we discussed this puzzle some years ago. In a nutshell, if you’re inside the red box, you can’t be outside the red box. The “proof” has contradictory premises. Is anybody out there following me?
