After Jon Williamson’s talk, Objective Bayesianism from a Philosophical Perspective, at the PhilStat forum on May 22, I raised some general “casualties” encountered by objective, non-subjective or default Bayesian accounts, not necessarily Williamson’s. I am pasting those remarks below, followed by some additional remarks and the video of his responses to my main kvetches.

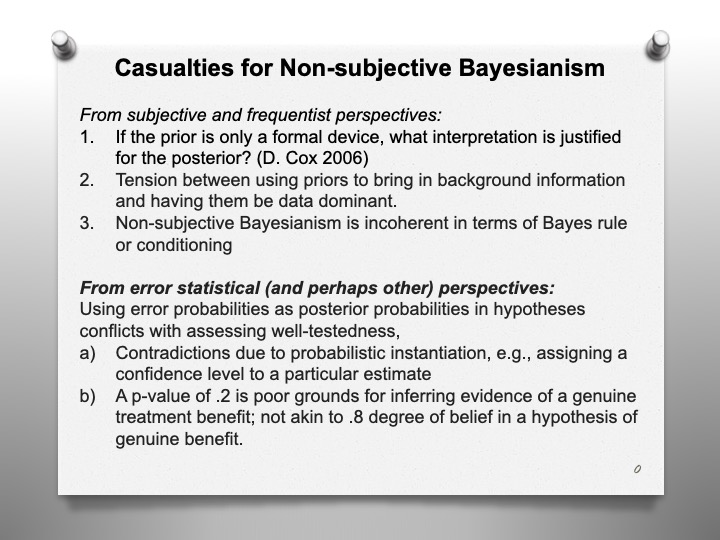

To consider some casualties: some object that the Bayesian picture of a coherent way to represent and update beliefs goes by the board for the non-subjective or default Bayesian.

The non-subjective priors are not supposed to be considered expressions of uncertainty, ignorance, or degree of belief. They may not even be probabilities, being improper, that is, not integrating to one. As David Cox asks: “[…] as an adequate summary of information?” (2006a, p. 774)

A second casualty is that prior probabilities are often touted as a way for Bayesians to bring background information into the analysis, but this pulls in the opposite direction from the goal of the default prior, which aims to maximize the contribution of just the data, not background. Trying to describe your beliefs is different from trying to make the data dominant. Users applying a computer package with default priors might think they’re putting in something uninformative or safe. Not necessarily.

The goal of truly “uninformative” priors was abandoned around 20 years ago: what is uninformative under one parameterization can be informative under another.
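The parameterization point can be made concrete with a small sketch. It assumes, purely for illustration, a flat prior on a probability p and a reparameterization phi = p² (neither is taken from the talk). The flat prior on p turns out to be far from flat on phi.

```python
import math

def prior_prob_phi_below(c: float) -> float:
    """P(phi < c) implied by p ~ Uniform(0, 1), where phi = p**2 (illustrative choice)."""
    # The event phi < c is the same event as p < sqrt(c).
    return math.sqrt(c)

c = 0.25
induced = prior_prob_phi_below(c)  # what the flat prior on p implies for phi
flat_on_phi = c                    # what a flat prior on phi itself would say

print(f"P(phi < {c}) under a flat prior on p:   {induced:.2f}")
print(f"P(phi < {c}) under a flat prior on phi: {flat_on_phi:.2f}")
```

The two "uninformative" choices disagree by a factor of two about the same event, so being flat in one parameterization amounts to holding an opinion in the other.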

A third casualty, especially from the subjective Bayesian perspective, is that non-subjective Bayesianism is incoherent in terms of Bayes’ rule or conditioning, as Jon knows. One reason for incoherence is that the default prior on the same hypothesis can vary according to the type of experiment performed. This violates the likelihood principle (LP), which strict Bayesian conditioning obeys, to mention a term we’ve spoken of before in this forum.
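A standard textbook illustration of a default prior that depends on the experiment (not necessarily the example intended in these remarks) is the Jeffreys prior for a success probability p. Under binomial sampling, with the number of trials fixed, it is proportional to p^(-1/2)(1-p)^(-1/2). Under negative binomial sampling, where one samples until a fixed number of successes, it is proportional to p^(-1)(1-p)^(-1/2). The sketch below uses hypothetical counts to show that the same data then yield different posteriors, even though the likelihoods are proportional.

```python
def jeffreys_posterior_means(s: int, n: int):
    """Posterior means of p given s successes in n trials under two sampling plans.

    Binomial (n fixed in advance):
        Jeffreys prior ∝ p^(-1/2) (1-p)^(-1/2)  ->  posterior Beta(s + 1/2, n - s + 1/2)
    Negative binomial (stop at the s-th success):
        Jeffreys prior ∝ p^(-1) (1-p)^(-1/2)    ->  posterior Beta(s, n - s + 1/2)
    The mean of a Beta(a, b) distribution is a / (a + b).
    """
    binomial_mean = (s + 0.5) / (n + 1.0)
    neg_binomial_mean = s / (n + 0.5)
    return binomial_mean, neg_binomial_mean

# Hypothetical data: 3 successes observed in 12 trials.
binom_mean, negbin_mean = jeffreys_posterior_means(s=3, n=12)
print(f"binomial plan:          {binom_mean:.4f}")
print(f"negative binomial plan: {negbin_mean:.4f}")
```

Because the likelihood is proportional to p^s (1-p)^(n-s) under both plans, the LP would have the two inferences agree; the difference comes entirely from the experiment-dependent prior.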

When there’s a conflict with Bayes’ rule, or in the face of improper posteriors (that don’t sum to 1), the default Bayesian reassigns priors, starting over again, as it were. The very idea that inference is a matter of continually updating by Bayesian conditioning goes by the board, at least in those cases.

There are also casualties that emerge from the perspective of the frequentist error statistician.

Yes, the non-subjective Bayesian may allow the error probability measure to match a posterior. But do we want that?

One way of matching allows assigning a confidence level to the particular inferred estimate. But save for special cases, this leads to contradictions, as with Fisher’s attempt at fiducial intervals. To a frequentist it’s a fallacy to instantiate the probability this way. (We might, for example, have two non-overlapping CIs, both at level .95.)
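The parenthetical about non-overlapping intervals can be sketched numerically. The sample means, the known standard deviation, and the sample size below are hypothetical, chosen only to produce two disjoint .95 intervals for the same normal mean.

```python
from statistics import NormalDist

def normal_mean_ci(xbar: float, sigma: float, n: int, level: float = 0.95):
    """Two-sided confidence interval for a normal mean with known sigma."""
    z = NormalDist().inv_cdf(0.5 + level / 2)  # about 1.96 for level 0.95
    half_width = z * sigma / n ** 0.5
    return (xbar - half_width, xbar + half_width)

# Two hypothetical samples drawn to estimate the same mean.
ci_a = normal_mean_ci(xbar=0.0, sigma=1.0, n=25)
ci_b = normal_mean_ci(xbar=1.0, sigma=1.0, n=25)

disjoint = ci_a[1] < ci_b[0]
print(ci_a, ci_b, "disjoint:", disjoint)

# Reading each level as the probability that the mean lies in that particular
# interval would give two mutually exclusive events a total probability of 1.9.
instantiated_total = 0.95 + 0.95
```

Each procedure covers the true mean in 95% of repetitions, which is a coherent claim; it is the instantiation to both particular intervals at once that cannot be a probability assignment.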

Nor would the error statistician want a p-value of .2 in testing a null hypothesis of no benefit of a treatment, say, to be taken as a .8 degree of plausibility in the treatment’s benefits, or to say they’d be as ready to bet on the alternative as they would on the occurrence of an event with probability 0.8. A severe tester would regard the claim of a genuine positive benefit as poorly tested by a result with a significance level of .2.

In her view, the error probability can be seen to apply to the particular inference by telling us how well or poorly tested the claim is.

The error statistician operates without an exhaustive set of models, theories, languages and predicates. She will split off a single piece, question or model, with its error properties assessed and controlled.

Having said all that, Williamson has his own ingenious system, and I’m interested to consider how it rules on some of today’s controversies.

Jon is free to react to any of this, or perhaps these points will arise later in conversation.

New remarks by Mayo: Williamson, in his response (below), agrees with my caveats relating to non-subjective Bayesianism, but avers that they only give evidence in support of his (non-standard) approach. Start with casualty #3. Williamson bites the bullet here, because he has long rejected what many Bayesians consider a great asset: the view that learning from data is all about Bayesian updating (applications of Bayes’ rule or conditionalization). However, the example he used in this presentation is different from the ones usually used in showing temporal incoherence, and I’ll raise some questions about it in a follow-up post on this blog. As for casualty #1, I can’t say whether his Bayesian posteriors provide appropriate degrees of rational belief, as I’m not sure how to attain them.

One example he gave was moving from “k% of observed A’s have been B’s” to a degree of belief of k% that a randomly selected A will be a B. This is essentially Carnap’s “straight rule” of induction. But the randomness assumption (if I’m right that he uses it) makes the assessment a model-based one, whereas the kind of Carnapian-style inductive logic that Williamson wants to use, I think, does not use models, since otherwise there is a problem with taking it to solve problems of induction. (He will correct me if I’m wrong.) The other example he gives takes confidence levels as rational degrees of belief on particular estimates. But this takes us to my casualty regarding contradictions resulting from such probabilistic instantiations. Perhaps he wants to argue that assigning the confidence level to the particular instance is OK for the Bernoulli parameter p, the probability of success on a given trial. (This may be akin to the approach of Roger Rosenkrantz who, like Williamson, is a Jaynesian.) But this requires an appeal to a model and not merely a first-order language. And I don’t see how to avoid the reference class problem, except maybe to say that whatever reference class you’re interested in is fine for applying the straight rule. But this would be in tension with the main rationale of the project being to solve induction.[i]

J. Williamson response to Casualties video

J. Williamson’s “Objective Bayesianism from a Philosophical Perspective” Slides.

His full talk is in this Presentation Video.

Please share your comments and questions in the comments to this blog.

[i] For how the error statistical philosopher recommends we solve the problem of induction now, see pp 107-115 of Statistical Inference as Severe Testing: How to Get Beyond the Statistics Wars (CUP 2018): https://errorstatistics.com/wp-content/uploads/2019/09/ex2-tii.pdf

Very interesting – my main question/concern is how would one know if the Bayesian net at the end of the talk started to fail? I can accept that you would use a decision rule to manage each node (choose the confidence level).

But if one of these CIs were to fail an audit for some reason, is there any way to see that in the data? Is there something like a sampling distribution for the output of the net under good conditions? Is there a way to falsify the system?


I remember that when I attended the Bayesian & Statistics conference organised by Howson and Urbach at the LSE in the early 1990s, the statisticians seemed to get on better with each other than the philosophers did. My memory is that Colin Howson objected to the ecumenical spirit that was abroad, saying that two pure positions were being discussed and they could not both be right.

I think that the position of most of the statisticians present would have been that both could, however, be wrong and probably were. In practice statisticians seem to find that statistical inference for any genuine applied problem soon gets very dirty. Consider, for instance, the problem of estimating the effect of a treatment in a clinical trial. Should we estimate it overall or separately for men and women? It might seem that, since we will never treat a patient of average sex, the latter is more appropriate but, in fact, it is not always better and, if adopted, leads to a problem of infinite regress: we shall know, for example, not only the patient’s sex but also their age, height, weight, etc. This raises issues that have to be addressed in both Bayesian and frequentist frameworks. Basically, a bias-variance trade-off is involved. It can be handled by using informative priors in the Bayesian approach or by an appeal to mean square error in the frequentist approach. Both of these have their difficulties.

It may be that the inferential cats that philosophers discuss have very different colouring to those that statisticians do, but all that statisticians tend to care about is “do they catch mice?”

Thanks very much for your thoughts on this, Deborah.

I think you are right that this approach to induction is not what Carnap envisaged: he wanted an account in which everything was built in to the logic, whereas my approach appeals directly to statistical inference. I don’t think Carnap was successful, however. His theory of inductive logic faces some intractable problems (see e.g., chapter 4 of my “Lectures on inductive logic”) and in any case, choice of his key parameter, lambda, needs to be made at least partly on empirical grounds, and so his approach is not purely logical.

I also agree that the reference class problem needs to be tackled by this sort of approach. I don’t think there is a fully general, off-the-shelf solution to this problem. As Stephen pointed out, estimates of a frequency in a narrower reference class tend to be based on less data, and so an interval estimate will be wider. I think that the question of which estimate to use is partly one of personal judgement, dependent on the uses to which it is put (and the agent’s utilities attached to the outcomes of these uses). Hence the need for a thoroughly Bayesian account, which can factor in these utilities and can leave room for personal judgement. As I mentioned in the talk, I’m not the sort of objective Bayesian who thinks that inferences are always uniquely determined by evidence.
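The trade-off between a broad and a narrow reference class can be seen in a rough sketch. The counts are hypothetical, and the simple Wald interval for a proportion is an assumption made for brevity, not a method endorsed anywhere in this discussion.

```python
from statistics import NormalDist

def wald_interval(successes: int, n: int, level: float = 0.95):
    """Approximate (Wald) confidence interval for an observed frequency."""
    p_hat = successes / n
    z = NormalDist().inv_cdf(0.5 + level / 2)
    half_width = z * (p_hat * (1 - p_hat) / n) ** 0.5
    return (p_hat - half_width, p_hat + half_width)

# Hypothetical counts with the same observed frequency of 0.30.
broad = wald_interval(successes=300, n=1000)  # broad reference class
narrow = wald_interval(successes=15, n=50)    # narrower class, less data

broad_width = broad[1] - broad[0]
narrow_width = narrow[1] - narrow[0]
print(f"broad class width:  {broad_width:.3f}")
print(f"narrow class width: {narrow_width:.3f}")
```

The narrower class is more relevant to the individual case but gives a much wider interval, which is the bias-variance tension described in the comments above.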