I was part of something called “a brains blog roundtable” on the business of p-values earlier this week–I’m glad to see philosophers getting involved.

Next week I’ll be in a session that I think is intended to explain what’s right about P-values at an ASA Symposium on Statistical Inference: “A World Beyond p < .05”.

Our session, “What are the best uses for P-values?”, I take it, will discuss the value of frequentist (error statistical) testing more generally, as is appropriate. (Aside from me, it includes Yoav Benjamini and David Robinson.)

One of the more baffling things about today’s statistics wars is their tendency to readily undermine themselves. One example is how they lead to relinquishing the strongest criticisms against findings that have been based on fishing expeditions. In the interest of promoting a statistical account that downplays error probabilities (e.g., Bayes factors), it becomes difficult to lambast a researcher for practices that violate error probabilities (cherry-picking, multiple testing, trying and trying again, post-data selection effects). You can’t condemn a researcher for engaging in practices on grounds that they violate error probabilities if you reject error probabilities. The direct criticism has been lost. The criticism is redirected to finding the cherry-picked hypothesis improbable. But now the cherry picker is free to discount the criticism as something a Bayesian can always do to counter a statistically significant finding–and they do this very effectively![1] Moreover, cherry-picked hypotheses are often believable; that’s what makes things like post-data subgroups so seductive. Finally, in an adequate account, the improbability of a claim must be distinguished from its having been poorly tested. (You need to be able to say things like, “it’s plausible, but that’s a lousy test of it.”)
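To make concrete how cherry-picking alters error probabilities, here is a rough numerical sketch. It is only an illustration under stated assumptions: the k tests are independent, every null hypothesis is true, and each p-value is uniform on [0, 1]. The function names are made up for this example. It computes the chance that the smallest of k p-values falls below the nominal .05 level, both by formula and by simulation.

```python
import random


def familywise_error_rate(k, alpha=0.05):
    """Chance that at least one of k independent true-null tests gives p < alpha."""
    return 1 - (1 - alpha) ** k


def simulate_cherry_picking(k, alpha=0.05, trials=20000, seed=0):
    """Estimate how often a cherry picker can report a 'significant' result.

    Assumption: under a true null, each p-value is an independent
    draw from the uniform distribution on [0, 1].
    """
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        # The cherry picker runs k tests and reports only the best one.
        smallest_p = min(rng.random() for _ in range(k))
        if smallest_p < alpha:
            hits += 1
    return hits / trials


if __name__ == "__main__":
    for k in (1, 5, 20):
        print(k, round(familywise_error_rate(k), 3), simulate_cherry_picking(k))
```

With 20 tries, the chance of at least one nominally significant result is roughly .64 rather than .05, even though nothing is going on. That is the sense in which the reported p-value no longer reflects the test's actual capability to rule out a spurious finding.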

Now I realize error probabilities are often criticized as relevant only for long-run error control, but that’s a mistake. Ask yourself: what bothers you when cherry pickers selectively report favorable findings, and then claim to have good evidence of an effect? You’re not concerned that making a habit out of this would yield poor long-run performance–even though it would. What bothers you, and rightly so, is that they haven’t done a good job of ruling out spurious findings in the case at hand. You need a principle to explain this epistemological standpoint–something frequentists have only hinted at. To state it informally:

I haven’t been given evidence for a claim C by dint of a method that had little if any capability to reveal specific flaws in C, even if they are present.

It’s a minimal requirement for evidence; I call it the severity requirement, but it doesn’t matter what it’s called. The stronger form says:

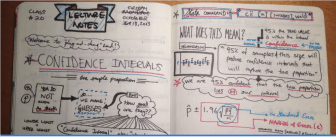

Data provide evidence for C only to the extent C has been subjected to and passes a reasonably severe test–one that probably would have found flaws in C, if present.

To many, “a world beyond p <.05” suggests a world without error statistical testing. So if you’re to use such a test in the best way, you’ll need to say why. Why use a statistical test of significance for your context and problem rather than some other tool? What type of context would ever make statistical (significance) testing just the ticket? Since the right use of these methods cannot be divorced from responding to expected critical challenges, I’ve decided to focus my remarks on giving those responses. However, I’m still working on it. Feel free to share thoughts.

Anyone who follows this blog knows I’ve been speaking about these things for donkey’s years–search terms of interest on this blog. I’ve also just completed a book, Statistical Inference as Severe Testing: How to Get Beyond the Statistics Wars (CUP 2018).

[1] The inference to denying the weight of the finding lacks severity; it can readily be launched by simply giving high enough prior weight to the null hypothesis.

Mayo, D. (2018). Statistical Inference as Severe Testing: How to Get Beyond the Statistics Wars. Cambridge: Cambridge University Press.

Statisticians, editors and referees have done a poor job of calling out multiple testing and multiple modeling junk. P-values work if done within the context of hard science. Anyone who would like to move to something else needs to explain where supervision will come from.

The confusions about statistical reasoning continue to shock me, especially after Cox and so many others straightened these things out years ago. For example, we hear that p-values reject null hypotheses on grounds of improbable data! That’s false. Any data set, described in sufficient detail, will be overwhelmingly improbable. Bayesians seek priors; error statisticians use tail areas and error probabilities. The reasoning is statistical modus tollens, the only falsification you’ll get outside of “all swans are white” in a finite population of swans. Were H0 a reasonable description of the process, then with very high probability the test would not produce results as statistically significant as these.
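The contrast between "improbable data" and a tail area can be seen in a toy case. This is only a sketch with an assumed setup (n fair-coin tosses, H0: the coin is fair, a one-sided test on the number of heads); the function names are invented for illustration.

```python
from math import comb


def exact_sequence_probability(n):
    """Probability under H0 of any one fully specified sequence of n tosses."""
    return 0.5 ** n


def upper_tail_p_value(heads, n):
    """P(at least `heads` heads in n tosses) under H0: the coin is fair."""
    return sum(comb(n, j) for j in range(heads, n + 1)) / 2 ** n


if __name__ == "__main__":
    n = 100
    # Every particular sequence is equally, and overwhelmingly, improbable.
    print(exact_sequence_probability(n))
    # The tail area is what separates unremarkable from surprising outcomes.
    print(upper_tail_p_value(50, n))
    print(upper_tail_p_value(65, n))
```

Every specific sequence of 100 tosses has the same minuscule probability under H0, so sheer improbability of the data cannot be the ground for rejection. The tail area, by contrast, is large for 50 heads and small for 65, and it is that difference the test relies on.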

Of course Fisher required more than an isolated result:

“[W]e need, not an isolated record, but a reliable method of procedure. In relation to the test of significance, we may say that a phenomenon is experimentally demonstrable when we know how to conduct an experiment which will rarely fail to give us a statistically significant result.” (Fisher 1947, p. 14)

It’s highly probable you’d not keep finding all of these well-calibrated scales showing my weight gain, were the readings all artifacts of several well-calibrated scales (which reliably weigh objects of known weight). My weight gain is genuine, unfortunately.

Anyone who rules out this type of reasoning is ruling out falsification in science–the basis for discovering real effects. Of course, identifying their cause is a distinct step.

As for multiple testing, cherry-picking and the like, the favorite methods do not pick up on them. If you condition on the data, you cannot pick up on what alters the sample space. And even default Bayesians who find themselves forced to violate the Likelihood Principle (LP) do it grudgingly, and still do not obtain assessments of what has been well tested. (No posteriors provide this.)

So here I am at the “world beyond” we know not what. Everything starts at 7:30 am, but I’m not an early person. Shall I drag myself up to hear Goodman and Ioannidis? Who made them kings of the world anyway?