Ship StatInfAsSt

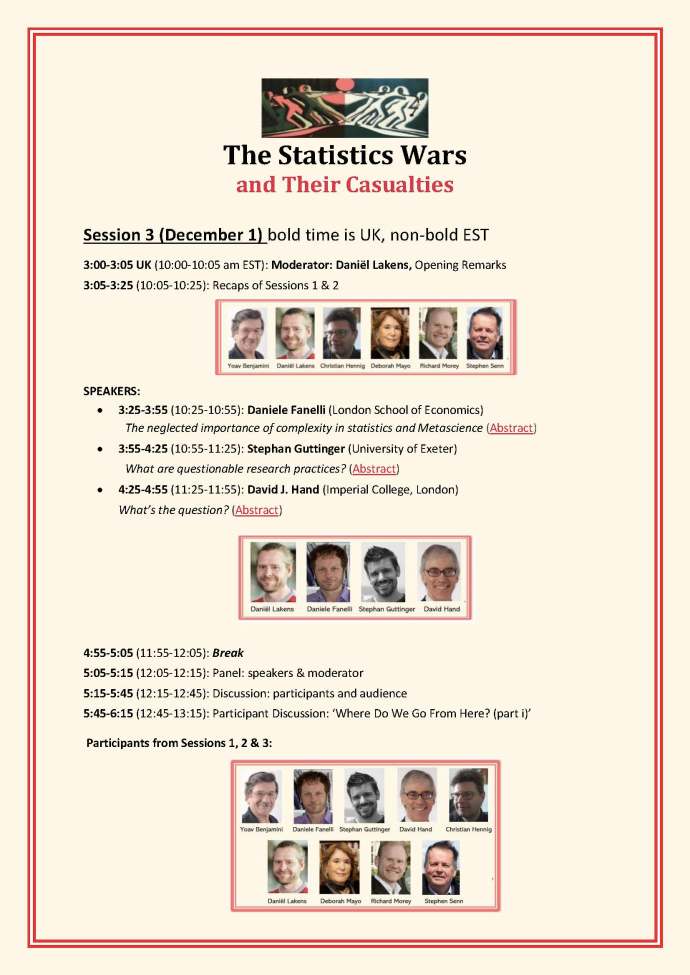

This fall (Oct-Jan), we’re embarking on a leisurely cruise through the highlights of Statistical Inference as Severe Testing [SIST]: How to Get Beyond the Statistics Wars (CUP 2018), following the 5 seminars I led for a 2020 London School of Economics (LSE) Graduate Research Seminar. That seminar had to be run online due to Covid (as were the workshops that followed). Unlike last fall, this time I will include some Zoom meetings on the material, as well as new papers and topics of interest to attendees. On this relaxed (self-paced) journey, excursions that were covered in a week will be spread out over a month [i], and I’ll be posting abbreviated excerpts on this blog. Look for the posts marked with the picture of the ship StatInfAsSt. [ii]

I will be giving an online talk on Friday, Feb 2, 4:30-5:45 NYC time, at a conference you can watch on Zoom this week (Jan 30-Feb 2): "Is Philosophy Useful for Science, and/or Vice Versa?" It’s taking place in person and online at Chapman University. My talk is: