FURTHER UPDATED: New course for Spring 2014: Thurs 3:30-6:15 (Randolph 209)

Phil 6334: Philosophy of Statistical Inference and Modeling (syllabus, first installment)

D. Mayo and A. Spanos

Contact: error@vt.edu

This new course, to be jointly taught by Professors D. Mayo (Philosophy) and A. Spanos (Economics), will provide an in-depth introduction to graduate-level research in the philosophy of inductive-statistical inference and probabilistic methods of evidence (a branch of formal epistemology). We explore philosophical problems of confirmation and induction, the philosophy and history of frequentist and Bayesian approaches, and key foundational controversies surrounding tools of statistical data analytics, modeling and hypothesis testing in the natural and social sciences, and in evidence-based policy.

We now have some tentative topics and dates:

| Session | Date | Topic |
| --- | --- | --- |
| 1 | 1/23 | Introduction to the Course: 4 waves of controversy in the philosophy of statistics |
| 2 | 1/30 | How to tell what’s true about statistical inference: Probabilism, performance and probativeness |
| 3 | 2/6 | Induction and Confirmation: Formal Epistemology |
| 4 | 2/13 | Induction, falsification, severe tests: Popper and Beyond |
| 5 | 2/20 | Statistical models and estimation: the Basics |
| 6 | 2/27 | Fundamentals of significance tests and severe testing |
| 7 | 3/6 | Five sigma and the Higgs Boson discovery: Is it “bad science”? |
| | | SPRING BREAK: Statistical Exercises While Sunning |
| 8 | 3/20 | Fraudbusting and Scapegoating: Replicability and big data: are most scientific results false? |
| 9 | 3/27 | How can we test the assumptions of statistical models? All models are false; no methods are objective: Philosophical problems of misspecification testing: Spanos method |
| 10 | 4/3 | Fundamentals of Statistical Testing: Family Feuds and 70 years of controversy |
| 11 | 4/10 | Error Statistical Philosophy: Highly Probable vs Highly Probed. Some howlers of testing |
| 12 | 4/17 | What ever happened to Bayesian Philosophical Foundations? Dutch books etc. Fundamentals of Bayesian statistics |
| 13 | 4/24 | Bayesian-frequentist reconciliations, unifications, and O-Bayesians |
| 14 | 5/1 | Overview: Answering the critics: Should statistical philosophy be divorced from methodology? |
| (15) | TBA | Topic to be chosen (Resampling statistics and new journal policies? Likelihood principle) |

Interested in attending? E.R.R.O.R.S.* can fund travel (presumably driving) and provide accommodation for Thursday night in a conference lodge in Blacksburg for a few people for all (or part) of the semester. If interested, write ASAP for details (with a brief description of your interest and background) to error@vt.edu. Several people asked about long-distance hook-ups: we will try to provide some sessions by Skype, and will post each of the seminar items here (also check the Phil6334 page on this blog).

A sample of questions we consider*:

- What makes an inquiry scientific? objective? When are we warranted in generalizing from data?

- What is the “traditional problem of induction”? Is it really insoluble? Does it matter in practice?

- What is the role of probability in uncertain inference? (to assign degrees of confirmation or belief? to characterize the reliability of test procedures?) 3P’s: Probabilism, performance and probativeness

- What is probability? Random variables? Estimates? What is the relevance of long-run error probabilities for inductive inference in science?

- What did Popper really say about severe testing, induction, falsification? Is it time for a new definition of pseudoscience?

- Confirmation and falsification: Carnap and Popper, paradoxes of confirmation; contemporary formal epistemology

- What is the current state of play in the “statistical wars”, e.g., between frequentists, likelihoodists, and (subjective vs. “non-subjective”) Bayesians?

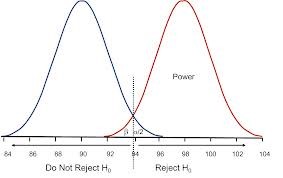

- How should one specify and interpret p-values, type I and II errors, confidence levels? Can one tell the truth (and avoid fallacies) with statistics? Do the “reformers” themselves need reform?

- Is it unscientific (ad hoc, degenerating) to use the same data both in constructing and testing hypotheses? When and why?

- Is it possible to test assumptions of statistical models without circularity?

- Is the new research on “replicability” well-founded, or an erroneous use of screening statistics for long-run performance?

- Should randomized studies be the “gold standard” for “evidence-based” science and policy?

- What’s the problem with big data? Cherry-picking, data mining, multiple testing

- The many faces of Bayesian statistics: Can there be uninformative prior probabilities? (No) Principles of indifference over the years

- Statistical fraudbusting: psychology, economics, evidence-based policy

- Applied controversies (selected): Higgs experiments, climate modeling, social psychology, econometric modeling, development economics

D. Mayo (books):

How to Tell What’s True About Statistical Inference (Cambridge, in progress).

Error and the Growth of Experimental Knowledge, Chicago: University of Chicago Press, 1996. (Winner of the 1998 Lakatos Prize).

Acceptable Evidence: Science and Values in Risk Management, co-edited with Rachelle Hollander, New York: Oxford University Press, 1994.

Aris Spanos (books):

Probability Theory and Statistical Inference, Cambridge, 1999.

Statistical Foundations of Econometric Modeling, Cambridge, 1986.

Joint (books): Error and Inference: Recent Exchanges on Experimental Reasoning, Reliability and the Objectivity and Rationality of Science, D. Mayo & A. Spanos (eds.), Cambridge: Cambridge University Press, 2010. [Intro, Background & Chapter 1. (The book includes both papers and exchanges between Mayo and A. Chalmers, A. Musgrave, P. Achinstein, J. Worrall, C. Glymour, A. Spanos, and joint papers with Mayo and Sir David Cox)].

“The many faces of Bayesian statistics: Can there be uninformative prior probabilities? (No) Principles of indifference over the years”

I am interested in your thoughts on this aspect. I have been thinking the “nil” null hypothesis is just the principle of indifference sneaking back in.

Grapling: I don’t see how; it isn’t a prior.

Principle of indifference: All possibilities are equal if nothing else is known

Nil Null Hypothesis: All groups are equal if nothing else is known.

Is there any chance of having lecture notes posted online? Traveling and Skype are both out for me, but I’d be interested in following along casually through the notes (and I’m sure I’m not the only one).

Justin: Yes, we will make the notes available and as much of the discussion as possible.