Yesterday was statistician George Barnard’s 105th birthday. To acknowledge it, I reblog an exchange between Barnard, Savage (and others) on likelihood vs probability. The exchange is from pp 79-84 (of what I call) “The Savage Forum” (Savage, 1962).[i] A portion appears on p. 420 of my Statistical Inference as Severe Testing: How to Get Beyond the Statistics Wars (2018, CUP). Six other posts on Barnard are linked below, including 2 guest posts (Senn, Spanos), a play (pertaining to our first meeting), and a letter Barnard wrote to me in 1999.

Barnard

G.A. Barnard: The “catch-all” factor: probability vs likelihood

With continued acknowledgement of Barnard’s birthday on Friday, Sept. 23, I reblog an exchange on catchall probabilities from “The Savage Forum” (pp 79-84, Savage, 1962) with some new remarks.[i]

BARNARD:…Professor Savage, as I understand him, said earlier that a difference between likelihoods and probabilities was that probabilities would normalize because they integrate to one, whereas likelihoods will not. Now probabilities integrate to one only if all possibilities are taken into account. This requires in its application to the probability of hypotheses that we should be in a position to enumerate all possible hypotheses which might explain a given set of data. Now I think it is just not true that we ever can enumerate all possible hypotheses. … If this is so we ought to allow that in addition to the hypotheses that we really consider we should allow something that we had not thought of yet, and of course as soon as we do this we lose the normalizing factor of the probability, and from that point of view probability has no advantage over likelihood. This is my general point, that I think while I agree with a lot of the technical points, I would prefer that this is talked about in terms of likelihood rather than probability. I should like to ask what Professor Savage thinks about that, whether he thinks that the necessity to enumerate hypotheses exhaustively, is important.

G.A. Barnard’s 101st Birthday: The Bayesian “catch-all” factor: probability vs likelihood

Today is George Barnard’s 101st birthday. In honor of this, I reblog an exchange between Barnard, Savage (and others) on likelihood vs probability. The exchange is from pp 79-84 (of what I call) “The Savage Forum” (Savage, 1962).[i] Six other posts on Barnard are linked below: 2 are guest posts (Senn, Spanos); the other 4 include a play (pertaining to our first meeting), and a letter he wrote to me.

♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠

BARNARD:…Professor Savage, as I understand him, said earlier that a difference between likelihoods and probabilities was that probabilities would normalize because they integrate to one, whereas likelihoods will not. Now probabilities integrate to one only if all possibilities are taken into account. This requires in its application to the probability of hypotheses that we should be in a position to enumerate all possible hypotheses which might explain a given set of data. Now I think it is just not true that we ever can enumerate all possible hypotheses. … If this is so we ought to allow that in addition to the hypotheses that we really consider we should allow something that we had not thought of yet, and of course as soon as we do this we lose the normalizing factor of the probability, and from that point of view probability has no advantage over likelihood. This is my general point, that I think while I agree with a lot of the technical points, I would prefer that this is talked about in terms of likelihood rather than probability. I should like to ask what Professor Savage thinks about that, whether he thinks that the necessity to enumerate hypotheses exhaustively, is important.

G.A. Barnard: The “catch-all” factor: probability vs likelihood

From “The Savage Forum” (pp 79-84, Savage, 1962)[i]

BARNARD:…Professor Savage, as I understand him, said earlier that a difference between likelihoods and probabilities was that probabilities would normalize because they integrate to one, whereas likelihoods will not. Now probabilities integrate to one only if all possibilities are taken into account. This requires in its application to the probability of hypotheses that we should be in a position to enumerate all possible hypotheses which might explain a given set of data. Now I think it is just not true that we ever can enumerate all possible hypotheses. … If this is so we ought to allow that in addition to the hypotheses that we really consider we should allow something that we had not thought of yet, and of course as soon as we do this we lose the normalizing factor of the probability, and from that point of view probability has no advantage over likelihood. This is my general point, that I think while I agree with a lot of the technical points, I would prefer that this is talked about in terms of likelihood rather than probability. I should like to ask what Professor Savage thinks about that, whether he thinks that the necessity to enumerate hypotheses exhaustively, is important.

SAVAGE: Surely, as you say, we cannot always enumerate hypotheses so completely as we like to think. The list can, however, always be completed by tacking on a catch-all ‘something else’. In principle, a person will have probabilities given ‘something else’ just as he has probabilities given other hypotheses. In practice, the probability of a specified datum given ‘something else’ is likely to be particularly vague–an unpleasant reality. The probability of ‘something else’ is also meaningful of course, and usually, though perhaps poorly defined, it is definitely very small. Looking at things this way, I do not find probabilities unnormalizable, certainly not altogether unnormalizable.

George Barnard: 100th birthday: “We need more complexity” (and coherence) in statistical education

The answer to the question of my last post is George Barnard, and today is his 100th birthday*. The paragraphs stem from a 1981 conference in honor of his 65th birthday, published in his 1985 monograph: “A Coherent View of Statistical Inference” (Statistics, Technical Report Series, University of Waterloo). Happy Birthday George!

[I]t seems to be useful for statisticians generally to engage in retrospection at this time, because there seems now to exist an opportunity for a convergence of view on the central core of our subject. Unless such an opportunity is taken there is a danger that the powerful central stream of development of our subject may break up into smaller and smaller rivulets which may run away and disappear into the sand.

I shall be concerned with the foundations of the subject. But in case it should be thought that this means I am not here strongly concerned with practical applications, let me say right away that confusion about the foundations of the subject is responsible, in my opinion, for much of the misuse of the statistics that one meets in fields of application such as medicine, psychology, sociology, economics, and so forth. It is also responsible for the lack of use of sound statistics in the more developed areas of science and engineering. While the foundations have an interest of their own, and can, in a limited way, serve as a basis for extending statistical methods to new problems, their study is primarily justified by the need to present a coherent view of the subject when teaching it to others. One of the points I shall try to make is, that we have created difficulties for ourselves by trying to oversimplify the subject for presentation to others. It would surely have been astonishing if all the complexities of such a subtle concept as probability in its application to scientific inference could be represented in terms of only three concepts––estimates, confidence intervals, and tests of hypotheses. Yet one would get the impression that this was possible from many textbooks purporting to expound the subject. We need more complexity; and this should win us greater recognition from scientists in developed areas, who already appreciate that inference is a complex business while at the same time it should deter those working in less developed areas from thinking that all they need is a suite of computer programs.

Statistical Theater of the Absurd: “Stat on a Hot Tin Roof”

Memory lane: Did you ever consider how some of the colorful exchanges among better-known names in statistical foundations could be the basis for high literary drama in the form of one-act plays (even if appreciated by only 3-7 people in the world)? (Think of the expressionist exchange between Bohr and Heisenberg in Michael Frayn’s play Copenhagen, except here there would be no attempt at all to popularize—only published quotes and closely remembered conversations would be included, with no attempt to create a “story line”.) Somehow I didn’t think so. But rereading some of Savage’s high-flown praise of Birnbaum’s “breakthrough” argument (for the Likelihood Principle) today, I was swept into a “(statistical) theater of the absurd” mindset. (Update Aug. 2015 [ii])

The first one came to me in autumn 2008 while I was giving a series of seminars on philosophy of statistics at the LSE. Modeled on a disappointing (to me) performance of The Woman in Black, “A Funny Thing Happened at the [1959] Savage Forum” relates Savage’s horror at George Barnard’s announcement of having rejected the Likelihood Principle!

The current piece also features George Barnard. It recalls our first meeting in London in 1986. I’d sent him a draft of my paper on E.S. Pearson’s statistical philosophy, “Why Pearson Rejected the Neyman-Pearson Theory of Statistics” (later adapted as chapter 11 of EGEK) to see whether I’d gotten Pearson right. Since Tuesday (Aug 11) is Pearson’s birthday, I’m reblogging this. Barnard had traveled quite a ways, from Colchester, I think. It was June and hot, and we were up on some kind of a semi-enclosed rooftop. Barnard was sitting across from me looking rather bemused.

The curtain opens with Barnard and Mayo on the roof, lit by a spot mid-stage. He’s drinking (hot) tea; she, a Diet Coke. The dialogue (is what I recall from the time[i]):

Barnard: I read your paper. I think it is quite good. Did you know that it was I who told Fisher that Neyman-Pearson statistics had turned his significance tests into little more than acceptance procedures?

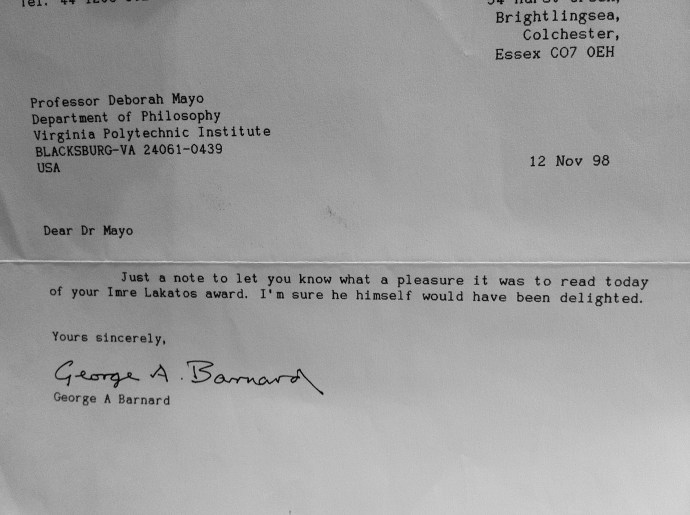

Letter from George (Barnard)

Memory Lane: (2 yrs) Sept. 30, 2012. George Barnard sent me this note on hearing of my Lakatos Prize. He was to have been at my Lakatos dinner at the LSE (March 17, 1999)—winners are permitted to invite ~2-3 guests*—but he called me at the LSE at the last minute to say he was too ill to come to London. Instead we had a long talk on the phone the next day, which I can discuss at some point. The main topics were likelihoods and error probabilities. (I have 3 other Barnard letters, with real content, that I might dig out some time.)

*My others were Donald Gillies and Hasok Chang

G.A. Barnard: The Bayesian “catch-all” factor: probability vs likelihood

Today is George Barnard’s birthday. In honor of this, I have typed in an exchange between Barnard, Savage (and others) on an important issue that we’d never gotten around to discussing explicitly (on likelihood vs probability). Please share your thoughts.

The exchange is from pp 79-84 (of what I call) “The Savage Forum” (Savage, 1962)[i]

♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠♠

BARNARD:…Professor Savage, as I understand him, said earlier that a difference between likelihoods and probabilities was that probabilities would normalize because they integrate to one, whereas likelihoods will not. Now probabilities integrate to one only if all possibilities are taken into account. This requires in its application to the probability of hypotheses that we should be in a position to enumerate all possible hypotheses which might explain a given set of data. Now I think it is just not true that we ever can enumerate all possible hypotheses. … If this is so we ought to allow that in addition to the hypotheses that we really consider we should allow something that we had not thought of yet, and of course as soon as we do this we lose the normalizing factor of the probability, and from that point of view probability has no advantage over likelihood. This is my general point, that I think while I agree with a lot of the technical points, I would prefer that this is talked about in terms of likelihood rather than probability. I should like to ask what Professor Savage thinks about that, whether he thinks that the necessity to enumerate hypotheses exhaustively, is important.

SAVAGE: Surely, as you say, we cannot always enumerate hypotheses so completely as we like to think. The list can, however, always be completed by tacking on a catch-all ‘something else’. In principle, a person will have probabilities given ‘something else’ just as he has probabilities given other hypotheses. In practice, the probability of a specified datum given ‘something else’ is likely to be particularly vague–an unpleasant reality. The probability of ‘something else’ is also meaningful of course, and usually, though perhaps poorly defined, it is definitely very small. Looking at things this way, I do not find probabilities unnormalizable, certainly not altogether unnormalizable.

Whether probability has an advantage over likelihood seems to me like the question whether volts have an advantage over amperes. The meaninglessness of a norm for likelihood is for me a symptom of the great difference between likelihood and probability. Since you question that symptom, I shall mention one or two others. …

On the more general aspect of the enumeration of all possible hypotheses, I certainly agree that the danger of losing serendipity by binding oneself to an over-rigid model is one against which we cannot be too alert. We must not pretend to have enumerated all the hypotheses in some simple and artificial enumeration that actually excludes some of them. The list can however be completed, as I have said, by adding a general ‘something else’ hypothesis, and this will be quite workable, provided you can tell yourself in good faith that ‘something else’ is rather improbable. The ‘something else’ hypothesis does not seem to make it any more meaningful to use likelihood for probability than to use volts for amperes.

Let us consider an example. Off hand, one might think it quite an acceptable scientific question to ask, ‘What is the melting point of californium?’ Such a question is, in effect, a list of alternatives that pretends to be exhaustive. But, even specifying which isotope of californium is referred to and the pressure at which the melting point is wanted, there are alternatives that the question tends to hide. It is possible that californium sublimates without melting or that it behaves like glass. Who dare say what other alternatives might obtain? An attempt to measure the melting point of californium might, if we are serendipitous, lead to more or less evidence that the concept of melting point is not directly applicable to it. Whether this happens or not, Bayes’s theorem will yield a posterior probability distribution for the melting point given that there really is one, based on the corresponding prior conditional probability and on the likelihood of the observed reading of the thermometer as a function of each possible melting point. Neither the prior probability that there is no melting point, nor the likelihood for the observed reading as a function of hypotheses alternative to that of the existence of a melting point enter the calculation. The distinction between likelihood and probability seems clear in this problem, as in any other.
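As a gloss on Savage’s californium example, here is the calculation in symbols. The notation is mine and does not appear in the Forum: M stands for ‘californium has a melting point’, θ for the value of that melting point, and x for the observed thermometer reading.

```latex
% Conditional posterior for the melting point, given that there is one (M)
\[
p(\theta \mid x, M) \;=\;
\frac{p(x \mid \theta, M)\, p(\theta \mid M)}
     {\int p(x \mid \theta', M)\, p(\theta' \mid M)\, d\theta'}
\]

% Unconditional posterior that there is a melting point at all
\[
P(M \mid x) \;=\;
\frac{P(x \mid M)\, P(M)}
     {P(x \mid M)\, P(M) + P(x \mid \neg M)\,\bigl(1 - P(M)\bigr)},
\qquad
P(x \mid M) = \int p(x \mid \theta, M)\, p(\theta \mid M)\, d\theta
\]
```

Neither P(M) nor P(x | ¬M) appears in the first expression, which is the sense in which Savage says they do not enter the calculation. Both appear in the second, which is the quantity one would need in order to say how probable it is that there is a melting point in the first place.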

BARNARD: Professor Savage says in effect, ‘add at the bottom of list H1, H2, … “something else”’. But what is the probability that a penny comes up heads given the hypothesis ‘something else’. We do not know. What one requires for this purpose is not just that there should be some hypotheses, but that they should enable you to compute probabilities for the data, and that requires very well defined hypotheses. For the purpose of applications, I do not think it is enough to consider only the conditional posterior distributions mentioned by Professor Savage.
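Barnard’s penny can be put in numbers. What follows is only a minimal sketch under assumptions of my own: two named hypotheses about the chance of heads (0.5 and 0.7), a single toss that lands heads, and made-up priors with 0.10 reserved for ‘something else’. None of these figures, and none of the function names, come from the Forum. The sketch tries several guesses for the probability of heads given ‘something else’, which is exactly the number Barnard says we do not know.

```python
def posterior(priors, likelihoods):
    """Bayes's theorem over a list of hypotheses assumed to be exhaustive."""
    joint = [p * l for p, l in zip(priors, likelihoods)]
    total = sum(joint)  # the normalizing factor
    return [j / total for j in joint]


# Hypothetical numbers, chosen only for illustration.
PRIOR_NAMED = [0.45, 0.45]   # priors for H1: P(heads) = 0.5 and H2: P(heads) = 0.7
PRIOR_CATCHALL = 0.10        # prior for Savage's 'something else'
LIK_NAMED = [0.5, 0.7]       # P(heads | H1), P(heads | H2)


def with_catchall(lik_catchall):
    """Posterior over (H1, H2, catch-all) for a guessed P(heads | catch-all)."""
    return posterior(PRIOR_NAMED + [PRIOR_CATCHALL], LIK_NAMED + [lik_catchall])


if __name__ == "__main__":
    for guess in (0.0, 0.5, 1.0):
        post = with_catchall(guess)
        print(
            f"P(heads | something else) = {guess:.1f}: "
            f"P(H1 | heads) = {post[0]:.3f}, "
            f"P(H1 | heads) / P(H2 | heads) = {post[0] / post[1]:.3f}"
        )
    # Savage's conditional posterior: condition on 'not something else'.
    print("given H1 or H2:", posterior(PRIOR_NAMED, LIK_NAMED))
```

With these assumed numbers, the posterior probability of H1 shifts with each guess about the catch-all, whereas the ratio of the two named posteriors stays at 5/7, which here is just the likelihood ratio because the two named priors are equal. The last line is the conditional posterior Savage has in mind: it needs no catch-all likelihood, but it is silent on how probable ‘something else’ is.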

Statistical Theater of the Absurd: “Stat on a Hot Tin Roof”

Memory lane: Did you ever consider how some of the colorful exchanges among better-known names in statistical foundations could be the basis for high literary drama in the form of one-act plays (even if appreciated by only 3-7 people in the world)? (Think of the expressionist exchange between Bohr and Heisenberg in Michael Frayn’s play Copenhagen, except here there would be no attempt at all to popularize—only published quotes and closely remembered conversations would be included, with no attempt to create a “story line”.) Somehow I didn’t think so. But rereading some of Savage’s high-flown praise of Birnbaum’s “breakthrough” argument (for the Likelihood Principle) today, I was swept into a “(statistical) theater of the absurd” mindset.

The first one came to me in autumn 2008 while I was giving a series of seminars on philosophy of statistics at the LSE. Modeled on a disappointing (to me) performance of The Woman in Black, “A Funny Thing Happened at the [1959] Savage Forum” relates Savage’s horror at George Barnard’s announcement of having rejected the Likelihood Principle!

The current piece also features George Barnard and since Monday (9/23) is Barnard’s birthday, I’m digging it out of “rejected posts” to reblog it. It recalls our first meeting in London in 1986. I’d sent him a draft of my paper “Why Pearson Rejected the Neyman-Pearson Theory of Statistics” (later adapted as chapter 11 of EGEK) to see whether I’d gotten Pearson right. He’d traveled quite a ways, from Colchester, I think. It was June and hot, and we were up on some kind of a semi-enclosed rooftop. Barnard was sitting across from me looking rather bemused.

The curtain opens with Barnard and Mayo on the roof, lit by a spot mid-stage. He’s drinking (hot) tea; she, a Diet Coke. The dialogue (is what I recall from the time[i]):

Barnard: I read your paper. I think it is quite good. Did you know that it was I who told Fisher that Neyman-Pearson statistics had turned his significance tests into little more than acceptance procedures?

Mayo: Thank you so much for reading my paper. I recall a reference to you in Pearson’s response to Fisher, but I didn’t know the full extent.

Barnard: I was the one who told Fisher that Neyman was largely to blame. He shouldn’t be too hard on Egon. His statistical philosophy, you are aware, was different from Neyman’s.

Mayo: That’s interesting. I did quote Pearson, at the end of his response to Fisher, as saying that inductive behavior was “Neyman’s field, not mine”. I didn’t know your role in his laying the blame on Neyman!

Fade to black. The lights go up on Fisher, stage left, flashing back some 30 years earlier . . . .

Fisher: Now, acceptance procedures are of great importance in the modern world. When a large concern like the Royal Navy receives material from an engineering firm it is, I suppose, subjected to sufficiently careful inspection and testing to reduce the frequency of the acceptance of faulty or defective consignments. . . . I am casting no contempt on acceptance procedures, and I am thankful, whenever I travel by air, that the high level of precision and reliability required can really be achieved by such means. But the logical differences between such an operation and the work of scientific discovery by physical or biological experimentation seem to me so wide that the analogy between them is not helpful . . . . [Advocates of behavioristic statistics are like]

Russians [who] are made familiar with the ideal that research in pure science can and should be geared to technological performance, in the comprehensive organized effort of a five-year plan for the nation. . . .

In the U.S. also the great importance of organized technology has I think made it easy to confuse the process appropriate for drawing correct conclusions, with those aimed rather at, let us say, speeding production, or saving money. (Fisher 1955, 69-70)

Fade to black. The lights go up on Egon Pearson stage right (who looks like he does in my sketch [frontispiece] from EGEK 1996, a bit like a young C. S. Peirce):

Pearson: There was no sudden descent upon British soil of Russian ideas regarding the function of science in relation to technology and to five-year plans. . . . Indeed, to dispel the picture of the Russian technological bogey, I might recall how certain early ideas came into my head as I sat on a gate overlooking an experimental blackcurrant plot . . . . To the best of my ability I was searching for a way of expressing in mathematical terms what appeared to me to be the requirements of the scientist in applying statistical tests to his data. (Pearson 1955, 204)

Fade to black. The spotlight returns to Barnard and Mayo, but brighter. It looks as if it’s gotten hotter. Barnard wipes his brow with a white handkerchief. Mayo drinks her Diet Coke.

Barnard (ever so slightly angry): You have made one blunder in your paper. Fisher would never have made that remark about Russia.

There is a tense silence.

Mayo: But—it was a quote.

End of Act 1.

Given this was pre-internet, we couldn’t go to the source then and there, so we agreed to search for the paper in the library. Well, you get the idea. Maybe I could call the piece “Stat on a Hot Tin Roof.”

If you go see it, don’t say I didn’t warn you.

I’ve gotten various new speculations over the years as to why he had this reaction to the mention of Russia (check discussions in earlier posts with this play). Feel free to share yours. Some new (to me) information on Barnard is in George Box’s recent autobiography.

[i] We had also discussed this many years later, in 1999.

Statistical Theater of the Absurd: “Stat on a Hot Tin Roof”

Memory lane: Did you ever consider how some of the colorful exchanges among better-known names in statistical foundations could be the basis for high literary drama in the form of one-act plays (even if appreciated by only 3-7 people in the world)? (Think of the expressionist exchange between Bohr and Heisenberg in Michael Frayn’s play Copenhagen, except here there would be no attempt at all to popularize—only published quotes and closely remembered conversations would be included, with no attempt to create a “story line”.) Somehow I didn’t think so. But rereading some of Savage’s high-flown praise of Birnbaum’s “breakthrough” argument (for the Likelihood Principle) today, I was swept into a “(statistical) theater of the absurd” mindset.

The first one came to me in autumn 2008 while I was giving a series of seminars on philosophy of statistics at the LSE. Modeled on a disappointing (to me) performance of The Woman in Black, “A Funny Thing Happened at the [1959] Savage Forum” relates Savage’s horror at George Barnard’s announcement of having rejected the Likelihood Principle!

The current piece taking shape also features George Barnard and since tomorrow (9/23) is his birthday, I’m digging it out of “rejected posts”. It recalls our first meeting in London in 1986. I’d sent him a draft of my paper “Why Pearson Rejected the Neyman-Pearson Theory of Statistics” (later adapted as chapter 11 of EGEK) to see whether I’d gotten Pearson right. He’d traveled quite a ways, from Colchester, I think. It was June and hot, and we were up on some kind of a semi-enclosed rooftop. Barnard was sitting across from me looking rather bemused.

The curtain opens with Barnard and Mayo on the roof, lit by a spot mid-stage. He’s drinking (hot) tea; she, a Diet Coke. The dialogue (is what I recall from the time[i]):

Barnard: I read your paper. I think it is quite good. Did you know that it was I who told Fisher that Neyman-Pearson statistics had turned his significance tests into little more than acceptance procedures?

Mayo: Thank you so much for reading my paper. I recall a reference to you in Pearson’s response to Fisher, but I didn’t know the full extent.

Barnard: I was the one who told Fisher that Neyman was largely to blame. He shouldn’t be too hard on Egon. His statistical philosophy, you are aware, was different from Neyman’s.
