Third Stop

Readers: With this third stop we’ve covered Tour 1 of Excursion 1. My slides from the first LSE meeting in 2020, which dealt with elements of Excursion 1, can be found at the end of this post. There’s also a video giving an overall intro to SIST, Excursion 1. It’s worth considering how much things seem to have changed in just the past few years. Or have they? What would the view from the hot-air balloon look like now? Share your thoughts in the comments.

ZOOM: I propose a Zoom meeting for

Sunday, November 16 at 11 am or Friday, November 21 at 11 am, New York time. (An equal # prefer Fri & Sun.) The link will be available to those who register/registered with Dr. Miller*.

The Current State of Play in Statistical Foundations: A View From a Hot-Air Balloon (1.3)

How can a discipline, central to science and to critical thinking, have two methodologies, two logics, two approaches that frequently give substantively different answers to the same problems? … Is complacency in the face of contradiction acceptable for a central discipline of science? (Donald Fraser 2011, p. 329)

We [statisticians] are not blameless … we have not made a concerted professional effort to provide the scientific world with a unified testing methodology. (J. Berger 2003, p. 4)

A seminal controversy in statistical inference is whether error probabilities associated with an inference method are evidentially relevant once the data are in hand. Frequentist error statisticians say yes; Bayesians say no. A “no” answer goes hand in hand with holding the Likelihood Principle (LP), also called the strong LP, which follows from inference by Bayes’ theorem. A “yes” answer violates the LP. Error probabilities drop out under the LP because the LP implies that all the evidence from the data is contained in the likelihood ratios (at least for inference within a statistical model). For the error statistician, likelihood ratios are merely measures of comparative fit, and omit crucial information about their reliability. A dramatic illustration of this disagreement involves optional stopping, and it’s the one to which Roderick Little turns in the chapter “Do you like the likelihood principle?” in

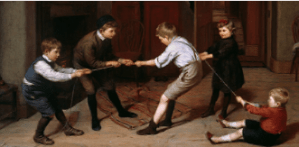
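To make the optional stopping disagreement concrete, here is a minimal simulation sketch, not anything taken from Little’s chapter or from SIST. The setup is my own assumption for illustration: normal data with known standard deviation 1, a two-sided test of a zero mean with the nominal 1.96 cutoff, and a cap of 100 observations. The function names are hypothetical. It compares how often a true null is rejected when the sample size is fixed in advance with how often it is rejected when one checks after every observation and stops at the first nominally significant result.

```python
import math
import random

Z_CRIT = 1.96  # nominal two-sided 0.05 cutoff (assumed for illustration)

def fixed_n_rejects(n, rng):
    """One test at a fixed sample size n, data drawn under H0: mu = 0."""
    total = sum(rng.gauss(0.0, 1.0) for _ in range(n))
    return abs(total / math.sqrt(n)) >= Z_CRIT

def optional_stopping_rejects(n_max, rng):
    """Check z after every observation; stop at the first nominal rejection."""
    total = 0.0
    for n in range(1, n_max + 1):
        total += rng.gauss(0.0, 1.0)
        if abs(total / math.sqrt(n)) >= Z_CRIT:
            return True
    return False

def rejection_rate(trial, n, n_sims=2000, seed=0):
    """Fraction of simulated studies that reject the (true) null."""
    rng = random.Random(seed)
    return sum(trial(n, rng) for _ in range(n_sims)) / n_sims

fixed_rate = rejection_rate(fixed_n_rejects, 100)
optional_rate = rejection_rate(optional_stopping_rejects, 100)
print(f"fixed n = 100:      {fixed_rate:.3f}")
print(f"optional stopping:  {optional_rate:.3f}")
```

Under these assumptions the fixed-sample rate should sit near the nominal 0.05, while the try-and-try-again rate comes out well above it. Yet for any given stopping point the likelihood function for the mean depends on the data only through the sample size and sample mean, so it is the same whichever stopping rule was in play. That is why the LP holder treats the stopping rule as irrelevant to the evidence and the error statistician does not.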


Around a year ago, Professor Rod Little asked me if I’d mind being on the cover of a book he was finishing along with Fisher, Neyman and some others (can you identify the others?). Mind? The book is