Yes, my April 1 post was an April Fool’s post, written entirely, and surprisingly, by ChatGPT, who was in on the gag. This post is not, although it concerns another kind of “leak”. It’s a reblog of a post from 4 years ago about “the mysteries of the mine”, which captivated me during the pandemic. I was reminded of the saga when I came across a New York Times article last month co-written by Ralph Baric. Baric, the mastermind of an important reverse engineering technique to modify the capacity of viruses to infect humans, is now warning us that “Virus Research Should raise the Alarm”. What alarms him is that the same kind of bat virus research, by the same people, at the same Wuhan lab, is still being conducted at inadequate (BSL-2) safety levels. But let’s go back to a mysterious event in an abandoned mine in China in 2012.

*************************************************************** Continue reading

science communication

4 years ago: Falsifying claims of trust in bat coronavirus research: mysteries of the mine (i)-(iv)

Falsifying claims of trust in bat coronavirus research: mysteries of the mine (i)-(iv)

Have you ever wondered if people read Master’s (or even Ph.D) theses a decade out? Whether or not you have, I think you will be intrigued to learn the story of why an obscure Master’s thesis from 2012, translated from Chinese in 2020, is now an integral key for unravelling the puzzle of the global controversy about the mechanism and origins of Covid-19. The Master’s thesis by a doctor, Li Xu [1], “The Analysis of 6 Patients with Severe Pneumonia Caused by Unknown Viruses”, describes 6 patients he helped to treat after they entered a hospital in 2012, one after the other, suffering from an atypical pneumonia from cleaning up after bats in an abandoned copper mine in China. Given the keen interest in finding the origin of the 2002–2003 severe acute respiratory syndrome (SARS) outbreak, Li wrote: “This makes the research of the bats in the mine where the six miners worked and later suffered from severe pneumonia caused by unknown virus a significant research topic”. He and the other doctors treating the mine cleaners hypothesized that their diseases were caused by a SARS-like coronavirus from having been in close proximity to the bats in the mine. Continue reading

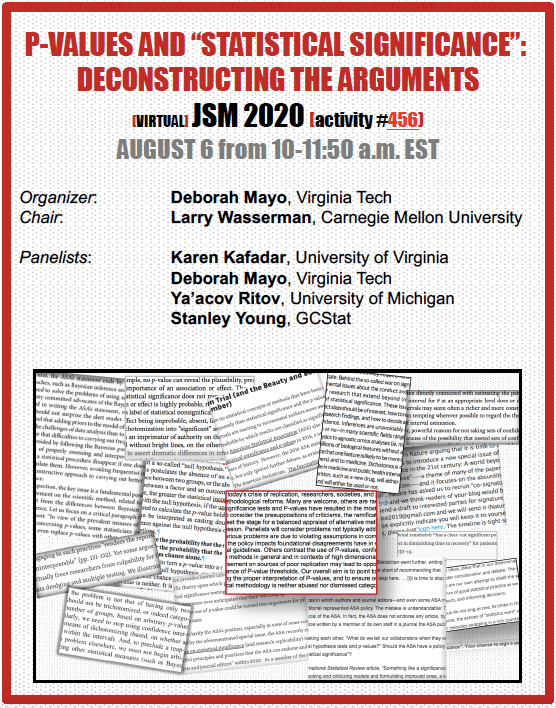

August 6: JSM 2020 Panel on P-values & “Statistical Significance”

July 30 PRACTICE VIDEO for JSM talk (All materials for Practice JSM session here)

JSM 2020 Panel Flyer (PDF)

JSM online program w/panel abstract & information:

A small amendment to Nuzzo’s tips for communicating p-values

I’ve been asked if I agree with Regina Nuzzo’s recent note on p-values [i]. I don’t want to be nit-picky, but one very small addition to Nuzzo’s helpful tips for communicating statistical significance can make it a great deal more helpful. Here’s my friendly amendment. She writes: Continue reading

The ASA Document on P-Values: One Year On

I’m surprised it’s a year already since posting my published comments on the ASA Document on P-Values. Since then, there have been a slew of papers rehearsing the well-worn fallacies of tests (a tad bit more than the usual rate). Doubtless, the P-value Pow Wow raised people’s consciousnesses. I’m interested in hearing reader reactions/experiences in connection with the P-Value project (positive and negative) over the past year. (Use the comments, share links to papers; and/or send me something slightly longer for a possible guest post.)

Some people sent me a diagram from a talk by Stephen Senn (on “P-values and the art of herding cats”). He presents an array of different cat commentators, and for some reason Mayo cat is in the middle but way over on the left side, near the wall. I never got the key to interpretation. My contribution is below:

“Don’t Throw Out The Error Control Baby With the Bad Statistics Bathwater”

D. Mayo*[1]

The American Statistical Association is to be credited with opening up a discussion into p-values; now an examination of the foundations of other key statistical concepts is needed. Continue reading

“So you banned p-values, how’s that working out for you?” D. Lakens exposes the consequences of a puzzling “ban” on statistical inference

I came across an excellent post on a blog kept by Daniel Lakens: “So you banned p-values, how’s that working out for you?” He refers to the journal that recently banned significance tests, confidence intervals, and a vague assortment of other statistical methods, on the grounds that all such statistical inference tools are “invalid” since they don’t provide posterior probabilities of some sort (see my post). The editors’ charge of “invalidity” could only hold water if these error statistical methods purport to provide posteriors based on priors, which is false. The entire methodology is based on methods in which probabilities arise to qualify the method’s capabilities to detect and avoid erroneous interpretations of data [0]. The logic is of the falsification variety found throughout science. Lakens, an experimental psychologist, does a great job delineating some of the untoward consequences of their inferential ban. I insert some remarks in black. Continue reading

Don’t throw out the error control baby with the bad statistics bathwater

My invited comments on the ASA Document on P-values*

The American Statistical Association is to be credited with opening up a discussion into p-values; now an examination of the foundations of other key statistical concepts is needed.

Statistical significance tests are a small part of a rich set of “techniques for systematically appraising and bounding the probabilities (under respective hypotheses) of seriously misleading interpretations of data” (Birnbaum 1970, p. 1033). These may be called error statistical methods (or sampling theory). The error statistical methodology supplies what Birnbaum called the “one rock in a shifting scene” (ibid.) in statistical thinking and practice. Misinterpretations and abuses of tests, warned against by the very founders of the tools, shouldn’t be the basis for supplanting them with methods unable or less able to assess, control, and alert us to erroneous interpretations of data. Continue reading

“What does it say about our national commitment to research integrity?”

There’s an important guest editorial by Keith Baggerly and C.K. Gunsalus in today’s issue of the Cancer Letter: “Penalty Too Light” on the Duke U. (Potti/Nevins) cancer trial fraud*. Here are some excerpts.

publication date: Nov 13, 2015

What does it say about our national commitment to research integrity that the Department of Health and Human Services’ Office of Research Integrity has concluded that a five-year ban on federal research funding for one individual researcher is a sufficient response to a case involving millions of taxpayer dollars, completely fabricated data, and hundreds to thousands of patients in invasive clinical trials?

This week, ORI released a notice of “final action” in the case of Anil Potti, M.D. The ORI found that Dr. Potti engaged in several instances of research misconduct and banned him from receiving federal funding for five years.

(See my previous post.)

The principles involved are important and the facts complicated. This was not just a matter of research integrity. This was also a case involving direct patient care and millions of dollars in federal and other funding. The duration and extent of deception were extreme. The case catalyzed an Institute of Medicine review of genomics in clinical trials and attracted national media attention.

If there are no further conclusions coming from ORI and if there are no other investigations under way—despite the importance of the issues involved and the five years that have elapsed since research misconduct investigation began, we do not know—a strong argument can be made that neither justice nor the research community have been served by this outcome. Continue reading

Are scientists really ready for ‘retraction offsets’ to advance ‘aggregate reproducibility’? (let alone ‘precautionary withdrawals’)

Given recent evidence of the irreproducibility of a surprising number of published scientific findings, the White House’s Office of Science and Technology Policy (OSTP) sought ideas for “leveraging its role as a significant funder of scientific research to most effectively address the problem”, and announced funding for projects to “reset the self-corrective process of scientific inquiry”. (first noted in this post.)

I was sent some information this morning with a rather long description of the project that received the top government award thus far (and it’s in the millions). I haven’t had time to read the proposal*, which I’ll link to shortly, but for a clear and quick description, you can read the excerpt of an interview of the OSTP representative by the editor of the Newsletter for Innovation in Science Journals (Working Group), Jim Stein, who took the lead in writing the author check list for Nature.

Stein’s queries are in burgundy, OSTP’s are in blue. Occasional comments from me are in black, which I’ll update once I study the fine print of the proposal itself. Continue reading

2015 Saturday Night Brainstorming and Task Forces: (4th draft)

Saturday Night Brainstorming: The TFSI on NHST–part reblog from here and here, with a substantial 2015 update!

Each year leaders of the movement to “reform” statistical methodology in psychology, social science, and other areas of applied statistics get together around this time for a brainstorming session. They review the latest from the Task Force on Statistical Inference (TFSI), propose new regulations they would like to see adopted, not just by the APA publication manual any more, but all science journals! Since it’s Saturday night, let’s listen in on part of an (imaginary) brainstorming session of the New Reformers.

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

Frustrated that the TFSI has still not banned null hypothesis significance testing (NHST)–a fallacious version of statistical significance tests that dares to violate Fisher’s first rule: It’s illicit to move directly from statistical to substantive effects–the New Reformers have created, and very successfully published in, new meta-level research paradigms designed expressly to study (statistically!) a central question: have the carrots and sticks of reward and punishment been successful in decreasing the use of NHST, and promoting instead use of confidence intervals, power calculations, and meta-analysis of effect sizes? Or not?

Most recently, the group has helped successfully launch a variety of “replication and reproducibility projects”. Having discovered how much the reward structure encourages bad statistics and gaming the system, they have cleverly pushed to change the reward structure: Failed replications (from a group chosen by a crowd-sourced band of replicationistas) would not be hidden in those dusty old file drawers, but would be guaranteed to be published without that long, drawn out process of peer review. Do these failed replications indicate the original study was a false positive, or that the replication attempt is a false negative? It’s hard to say.

This year, as is typical, there is a new member who is pitching in to contribute what he hopes are novel ideas for reforming statistical practice. In addition, for the first time, there is a science reporter blogging the meeting for her next freelance “bad statistics” piece for a high impact science journal. Notice that this committee seems only to grow: no one has dropped off in the 3 years I’ve followed them.

^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

Pawl: This meeting will come to order. I am pleased to welcome our new member, Dr. Ian Nydes, adding to the medical strength we have recently built with epidemiologist S.C. In addition, we have a science writer with us today, Jenina Oozo. To familiarize everyone, we begin with a review of old business, and gradually turn to new business.

Franz: It’s so darn frustrating after all these years to see researchers still using NHST methods; some of the newer modeling techniques routinely build on numerous applications of those pesky tests.

Jake: And the premier publication outlets in the social sciences still haven’t mandated the severe reforms sorely needed. Hopefully the new blood, Dr. Ian Nydes, can help us go beyond resurrecting the failed attempts of the past. Continue reading

Significance Levels are Made a Whipping Boy on Climate Change Evidence: Is .05 Too Strict? (Schachtman on Oreskes)

Given the daily thrashing significance tests receive because of how preposterously easy it is claimed to satisfy the .05 significance level requirement, it’s surprising[i] to hear Naomi Oreskes blaming the .05 standard as demanding too high a burden of proof for accepting climate change. “Playing Dumb on Climate Change,” N.Y. Times Sunday Rev. at 2 (Jan. 4, 2015). Is there anything for which significance levels do not serve as convenient whipping boys? Thanks to lawyer Nathan Schachtman for alerting me to her opinion piece today (congratulations to Oreskes!), and to his current blogpost. I haven’t carefully read her article, but one claim jumped out: scientists, she says, “practice a form of self-denial, denying themselves the right to believe anything that has not passed very high intellectual hurdles.” If only! *I add a few remarks at the end. Anyhow, here’s Schachtman’s post:

“Playing Dumb on Statistical Significance”

by Nathan Schachtman

Naomi Oreskes is a professor of the history of science at Harvard University. Her writings on the history of geology are well respected; her writings on climate change tend to be more adversarial, rhetorical, and ad hominem. See, e.g., Naomi Oreskes, Merchants of Doubt: How a Handful of Scientists Obscured the Truth on Issues from Tobacco Smoke to Global Warming (N.Y. 2010). Oreskes’ abuse of the meaning of significance probability for her own rhetorical ends is on display in today’s New York Times. Naomi Oreskes, “Playing Dumb on Climate Change,” N.Y. Times Sunday Rev. at 2 (Jan. 4, 2015).

Oreskes wants her readers to believe that those who are resisting her conclusions about climate change are hiding behind an unreasonably high burden of proof, which follows from the conventional standard of significance in significance probability. In presenting her argument, Oreskes consistently misrepresents the meaning of statistical significance and confidence intervals to be about the overall burden of proof for a scientific claim:

“Typically, scientists apply a 95 percent confidence limit, meaning that they will accept a causal claim only if they can show that the odds of the relationship’s occurring by chance are no more than one in 20. But it also means that if there’s more than even a scant 5 percent possibility that an event occurred by chance, scientists will reject the causal claim. It’s like not gambling in Las Vegas even though you had a nearly 95 percent chance of winning.”

Although the confidence interval is related to the pre-specified Type I error rate, alpha, and so a conventional alpha of 5% does lead to a coefficient of confidence of 95%, Oreskes has misstated the confidence interval to be a burden of proof consisting of a 95% posterior probability. The “relationship” is either true or not; the p-value or confidence interval provides a probability for the sample statistic, or one more extreme, on the assumption that the null hypothesis is correct. The 95% probability of confidence intervals derives from the long-term frequency that 95% of all confidence intervals, based upon samples of the same size, will contain the true parameter of interest.
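The long-run frequency reading of the 95% figure can be checked with a small simulation. What follows is only a sketch under stated assumptions, not anything from Schachtman's post or Oreskes' article: the data are taken to be normal with a known standard deviation, the interval is the textbook z-interval, and the names `ci_covers` and `coverage_rate` are made up for the illustration.

```python
import random
import statistics


def ci_covers(mu, sigma, n, rng, z=1.96):
    """Draw one sample of size n from N(mu, sigma) and report whether the
    95% z-interval around the sample mean contains the true mean mu.
    (Assumes sigma is known; this is an illustrative simplification.)"""
    sample = [rng.gauss(mu, sigma) for _ in range(n)]
    mean = statistics.fmean(sample)
    half_width = z * sigma / n ** 0.5
    return mean - half_width <= mu <= mean + half_width


def coverage_rate(trials=10_000, mu=0.0, sigma=1.0, n=30, seed=0):
    """Fraction of repeated intervals (same sample size each time) that
    contain the true parameter."""
    rng = random.Random(seed)
    hits = sum(ci_covers(mu, sigma, n, rng) for _ in range(trials))
    return hits / trials


if __name__ == "__main__":
    print(f"fraction of intervals containing mu: {coverage_rate():.3f}")
```

The fraction printed should sit close to 0.95. Note what the simulation does and does not show: the 95% attaches to the interval-generating procedure over repeated samples, while any single interval either contains the parameter or it does not. Nothing here yields a 95% posterior probability for a particular causal claim.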

Oreskes is an historian, but her history of statistical significance appears equally ill considered. Here is how she describes the “severe” standard of the 95% confidence interval: Continue reading

Msc. Kvetch: What does it mean for a battle to be “lost by the media”?

1. What does it mean for a debate to be “media driven” or a battle to be “lost by the media”? In my last post, I noted that until a few weeks ago, I’d never heard of a “power morcellator.” Nor had I heard of the AAGL–The American Association of Gynecologic Laparoscopists. In an article “Battle over morcellation lost ‘in the media’”(Nov 26, 2014) Susan London reports on a recent meeting of the AAGL[i]


The media played a major role in determining the fate of uterine morcellation, suggested a study reported at a meeting sponsored by AAGL.

“How did we lose this battle of uterine morcellation? We lost it in the media,” asserted lead investigator Dr. Adrian C. Balica, director of the minimally invasive gynecologic surgery program at the Robert Wood Johnson Medical School in New Brunswick, N.J.

The “investigation” Balica led consisted of collecting Internet search data using something called the Google Adwords Keyword Planner:

Results showed that the average monthly number of Google searches for the term ‘morcellation’ held steady throughout most of 2013 at about 250 per month, reported Dr. Balica. There was, however, a sharp uptick in December 2013 to more than 2,000 per month, and the number continued to rise to a peak of about 18,000 per month in July 2014. A similar pattern was seen for the terms ‘morcellator,’ ‘fibroids in uterus,’ and ‘morcellation of uterine fibroid.’

The “vitals” of the study are summarized at the start of the article:

Key clinical point: Relevant Google searches rose sharply as the debate unfolded.

Major finding: The mean monthly number of searches for “morcellation” rose from about 250 in July 2013 to 18,000 in July 2014.

Data source: An analysis of Google searches for terms related to the power morcellator debate.

Disclosures: Dr. Balica disclosed that he had no relevant conflicts of interest.

2. Here’s my question: Does a high correlation between Google searches and debate-related terms signify that the debate is “media driven”? I suppose you could call it that, but Dr. Balica is clearly suggesting that something not quite kosher, or not fully factual was responsible for losing “this battle of uterine morcellation”, downplaying the substantial data and real events that drove people (like me) to search the terms upon hearing the FDA announcement in November. Continue reading

To Quarantine or not to Quarantine?: Science & Policy in the time of Ebola

Bioethicist Arthur Caplan gives “7 Reasons Ebola Quarantine Is a Bad, Bad Idea”. I’m interested to know what readers think (I claim no expertise in this area.) My occasional comments are in maroon.

“Bioethicist: 7 Reasons Ebola Quarantine Is a Bad, Bad Idea”

In the fight against Ebola some government officials in the U.S. are now managing fear, not the virus. Quarantines have been declared in New York, New Jersey and Illinois. In Connecticut, nine people are in quarantine: two students at Yale; a worker from AmeriCARES; and a West African family.

Many others are or soon will be.

Quarantining those who do not have symptoms is not the way to combat Ebola. In fact it will only make matters worse. Far worse. Why?

- Quarantining people without symptoms makes no scientific sense.

They are not infectious. The only way to get Ebola is to have someone vomit on you, bleed on you, share spit with you, have sex with you or get fecal matter on you when they have a high viral load.

How do we know this?

Because there is data going back to 1975 from outbreaks in the Congo, Uganda, Sudan, Gabon, Ivory Coast, South Africa, not to mention current experience in the United States, Spain and other nations.

The list of “the only way to get Ebola” does not suggest it is so extraordinarily difficult to transmit as to imply the policy “makes no scientific sense”. That there is “data going back to 1975” doesn’t tell us how it was analyzed. They may not be infectious today, but…

- Quarantine is next to impossible to enforce.

If you don’t want to stay in your home or wherever you are supposed to stay for three weeks, then what? Do we shoot you, Taser you, drag you back into your house in a protective suit, or what?

And who is responsible for watching you 24-7? Quarantine relies on the honor system. That essentially is what we count on when we tell people with symptoms to call 911 or the health department.

It does appear that this hasn’t been well thought through yet. NY Governor Cuomo said that “Doctors Without Borders”, the group that sponsors many of the volunteers, already requires volunteers to “decompress” for three weeks upon return from Africa, and they compensate their doctors during this time (see the above link). The state of NY would fill in for those sponsoring groups that do not offer compensation (at least in NY). Is the existing 3 week decompression period already a clue that they want people cleared before they return to work? Continue reading

Some ironies in the ‘replication crisis’ in social psychology (4th and final installment)

There are some ironic twists in the way social psychology is dealing with its “replication crisis”, and they may well threaten even the most sincere efforts to put the field on firmer scientific footing–precisely in those areas that evoked the call for a “daisy chain” of replications. Two articles, one from the Guardian (June 14), and a second from The Chronicle of Higher Education (June 23) lay out the sources of what some are calling “Repligate”. The Guardian article is “Physics Envy: Do ‘hard’ sciences hold the solution to the replication crisis in psychology?”


The article in the Chronicle of Higher Education also gets credit for its title: “Replication Crisis in Psychology Research Turns Ugly and Odd”. I’ll likely write this in installments… (2nd, 3rd, 4th)

^^^^^^^^^^^^^^^

The Guardian article answers yes to the question “Do ‘hard’ sciences hold the solution…“:

Psychology is evolving faster than ever. For decades now, many areas in psychology have relied on what academics call “questionable research practices” – a comfortable euphemism for types of malpractice that distort science but which fall short of the blackest of frauds, fabricating data.

Continue reading

“The medical press must become irrelevant to publication of clinical trials.”

“The medical press must become irrelevant to publication of clinical trials.” So said Stephen Senn at a recent meeting of the Medical Journalists’ Association with the title: “Is the current system of publishing clinical trials fit for purpose?” Senn has thrown a few stones in the direction of medical journals in guest posts on this blog, and in this paper, but it’s the first I heard him go this far. He wasn’t the only one answering the conference question “No!” much to the surprise of medical journalist Jane Feinmann, whose article I am excerpting:


So what happened? Medical journals, the main vehicles for publishing clinical trials today, are after all the ‘gatekeepers of medical evidence’—as they are described in Bad Pharma, Ben Goldacre’s 2012 bestseller. …

… The Alltrials campaign, launched two years ago on the back of Goldacre’s book, has attracted an extraordinary level of support. … Continue reading

What have we learned from the Anil Potti training and test data fireworks? Part 1 (draft 2)

Over 100 patients signed up for the chance to participate in the clinical trials at Duke (2007-10) that promised a custom-tailored cancer treatment spewed out by a cutting-edge prediction model developed by Anil Potti, Joseph Nevins and their team at Duke. Their model purported to predict your probable response to one or another chemotherapy based on microarray analyses of various tumors. While they are now described as “false pioneers” of personalized cancer treatments, it’s not clear what has been learned from the fireworks surrounding the Potti episode overall. Most of the popular focus has been on glaring typographical and data processing errors; at least that’s what I mainly heard about until recently. Although they were quite crucial to the science in this case (surely more so than Potti’s CV padding), what interests me now are the general methodological and logical concerns that rarely make it into the popular press. Continue reading

Scientism and Statisticism: a conference* (i)

A lot of philosophers and scientists seem to be talking about scientism these days–either championing it or worrying about it. What is it? It’s usually a pejorative term describing an unwarranted deference to the so-called scientific method over and above other methods of inquiry. Some push it as a way to combat postmodernism (is that even still around?). Steven Pinker gives scientism a positive spin (and even offers it as a cure for the malaise of the humanities!)[1]. Anyway, I’m to talk at a conference on Scientism (*not statisticism, that’s my word) taking place in NYC May 16-17. It is organized by Massimo Pigliucci (chair of philosophy at CUNY-Lehman), who has written quite a lot on the topic in the past few years. Information can be found here. In thinking about scientism for this conference, however, I was immediately struck by this puzzle: Continue reading


Reliability and Reproducibility: Fraudulent p-values through multiple testing (and other biases): S. Stanley Young (Phil 6334: Day#13)

S. Stanley Young, PhD


Assistant Director for Bioinformatics

National Institute of Statistical Sciences

Research Triangle Park, NC

Here are Dr. Stanley Young’s slides from our April 25 seminar. They contain several tips for unearthing deception by fraudulent p-value reports. Since it’s Saturday night, you might wish to perform an experiment with three 10-sided dice*, recording the results of 100 rolls (3 at a time) on the form on slide 13. An entry, e.g., (0,1,3) becomes an imaginary p-value of .013 associated with the type of tumor, male-female, old-young. You report only hypotheses whose null is rejected at a “p-value” less than .05. Forward your results to me for publication in a peer-reviewed journal.

*Sets of 10-sided dice will be offered as a palindrome prize beginning in May.
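For readers without dice to hand, the experiment can be mimicked in a few lines of code. This is only a sketch of the exercise as described above, not a reproduction of Young's slide 13: the function names are invented for the illustration, and the dice are assumed to be fair with faces 0 through 9.

```python
import random


def roll_pseudo_p(rng):
    """Roll three fair 10-sided dice (faces 0-9, an assumption) and read the
    faces as the digits of an imaginary p-value, e.g. (0, 1, 3) -> 0.013."""
    d1, d2, d3 = (rng.randrange(10) for _ in range(3))
    return (100 * d1 + 10 * d2 + d3) / 1000


def run_experiment(rolls=100, alpha=0.05, seed=0):
    """Return all imaginary p-values and the subset that would be 'reported',
    i.e. those strictly below alpha."""
    rng = random.Random(seed)
    p_values = [roll_pseudo_p(rng) for _ in range(rolls)]
    reported = [p for p in p_values if p < alpha]
    return p_values, reported


if __name__ == "__main__":
    p_values, reported = run_experiment()
    print(f"{len(reported)} of {len(p_values)} pure-noise 'hypotheses' reach p < .05")
```

Since 50 of the 1,000 equally likely outcomes (.000 through .049) fall below .05, about 5 of the 100 rolls are expected to yield a reportable "finding", and the chance of at least one is 1 − 0.95^100, roughly 0.994, even though every null is true by construction. Reporting only those is the selective reporting the slides warn against.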

Phil 6334 Visitor: S. Stanley Young, “Statistics and Scientific Integrity”

We are pleased to announce our guest speaker at Thursday’s seminar (April 24, 2014): “Statistics and Scientific Integrity”:

S. Stanley Young, PhD


Assistant Director for Bioinformatics

National Institute of Statistical Sciences

Research Triangle Park, NC

Author of Resampling-Based Multiple Testing, Westfall and Young (1993) Wiley.

The main readings for the discussion are:

- Young, S. & Karr, A. (2011). Deming, Data and Observational Studies. Signif. 8 (3), 116–120.

- Begley & Ellis (2012) Raise standards for preclinical cancer research. Nature 483: 531-533.

- Ioannidis (2005). Why most published research findings are false. PLoS Med 2(8): e124.

- Peng, R. D., Dominici, F. & Zeger, S. L. (2006). “Reproducible Epidemiologic Research” American Journal of Epidemiology 163 (9), 783-789.

“Murder or Coincidence?” Statistical Error in Court: Richard Gill (TEDx video)

“There was a vain and ambitious hospital director. A bad statistician. ..There were good medics and bad medics, good nurses and bad nurses, good cops and bad cops … Apparently, even some people in the Public Prosecution service found the witch hunt deeply disturbing.”


This is how Richard Gill, statistician at Leiden University, describes a feature film (Lucia de B.) just released about the case of Lucia de Berk, a nurse found guilty of several murders based largely on statistics. Gill is widely known (among other things) for showing the flawed statistical analysis used to convict her, which ultimately led (after Gill’s tireless efforts) to her conviction being revoked. (I hope they translate the film into English.) In a recent e-mail Gill writes:

“The Dutch are going into an orgy of feel-good tear-jerking sentimentality as a movie comes out (the premiere is tonight) about the case. It will be a good movie, actually, but it only tells one side of the story. …When a jumbo jet goes down we find out what went wrong and prevent it from happening again. The Lucia case was a similar disaster. But no one even *knows* what went wrong. It can happen again tomorrow.

I spoke about it a couple of days ago at a TEDx event (Flanders).

You can find some p-values in my slides [“Murder by Numbers”, pasted below the video]. They were important – first in convicting Lucia, later in getting her a fair re-trial.”

Since it’s Saturday night, let’s watch Gill’s TEDx talk, “Statistical Error in court”.

Slides from the Talk: “Murder by Numbers”: