Introduction & Overview

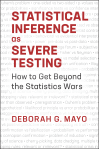

The Meaning of My Title: Statistical Inference as Severe Testing: How to Get Beyond the Statistics Wars* 05/19/18

Blurbs of 16 Tours: Statistical Inference as Severe Testing: How to Get Beyond the Statistics Wars (SIST) 03/05/19

Statistical Inference as Severe Testing: Preface

Excursion 1

EXCERPTS

Tour I Ex1 TI (full proofs)

Excursion 1 Tour I: Beyond Probabilism and Performance: Severity Requirement (1.1) 09/08/18

Excursion 1 Tour I (2nd stop): Probabilism, Performance, and Probativeness (1.2) 09/11/18

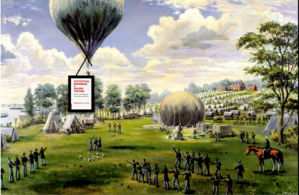

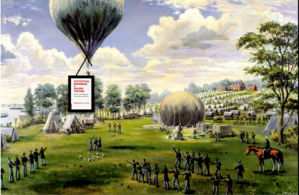

Excursion 1 Tour I (3rd stop): The Current State of Play in Statistical Foundations: A View From a Hot-Air Balloon (1.3) 09/15/18

Tour II Ex1 TII (full proofs)

Excursion 1 Tour II: Error Probing Tools versus Logics of Evidence-Excerpt 04/04/19

Souvenir C: A Severe Tester’s Translation Guide (Excursion 1 Tour II) 11/08/18

MEMENTOS

Tour Guide Mementos (Excursion 1 Tour II of How to Get Beyond the Statistics Wars) 10/29/18

Excursion 2

EXCERPTS

Tour I (full proofs)

Excursion 2: Taboos of Induction and Falsification: Tour I (first stop) 09/29/18

“It should never be true, though it is still often said, that the conclusions are no more accurate than the data on which they are based” (Keepsake by Fisher, 2.1) 10/05/18

Tour II (full proofs)

Excursion 2 Tour II (3rd stop): Falsification, Pseudoscience, Induction (2.3) 10/10/18

Ex 2 TII (Full proofs)

MEMENTOS

Tour Guide Mementos and Quiz 2.1 (Excursion 2 Tour I Induction and Confirmation) 11/14/18

Mementos for Excursion 2 Tour II Falsification, Pseudoscience, Induction 11/17/18

Excursion 3

EXCERPTS

Tour I Ex3 TI (full proofs)

Where are Fisher, Neyman, Pearson in 1919? Opening of Excursion 3 11/30/18

Neyman-Pearson Tests: An Episode in Anglo-Polish Collaboration: Excerpt from Excursion 3 (3.2) 12/01/18

First Look at N-P Methods as Severe Tests: Water plant accident [Exhibit (i) from Excursion 3] 12/04/18

Tour II Ex3 TII (full proofs)

It’s the Methods, Stupid: Excerpt from Excursion 3 Tour II (Mayo 2018, CUP) 12/11/18

60 Years of Cox’s (1958) Chestnut: Excerpt from Excursion 3 Tour II 12/29/18

Tour III Ex3 Tour III (full proofs)

Capability and Severity: Deeper Concepts: Excerpts From Excursion 3 Tour III 12/20/18

MEMENTOS

Memento & Quiz (on SEV): Excursion 3, Tour I 12/08/18

Mementos for “It’s the Methods, Stupid!” Excursion 3 Tour II (3.4-3.6) 12/13/18

Tour Guide Mementos From Excursion 3 Tour III: Capability and Severity: Deeper Concepts 12/26/18

Excursion 4

EXCERPTS

Tour I (Full Excursion 4 Tour I)

Excerpt from Excursion 4 Tour I: The Myth of “The Myth of Objectivity” (Mayo 2018, CUP) 12/26/18

Tour II (Full Excursion 4 Tour II)

Excerpt from Excursion 4 Tour II: 4.4 “Do P-Values Exaggerate the Evidence?” 01/10/19

Tour III (Full proofs of Excursion 4 Tour III)

Tour IV (Full proofs of Excursion 4 Tour IV)

Excerpt from Excursion 4 Tour IV: More Auditing: Objectivity and Model Checking 01/27/19

MEMENTOS

Mementos from Excursion 4: Blurbs of Tours I-IV 01/13/19

Excursion 5

Tour I (full proofs)

(Full) Excerpt: Excursion 5 Tour I — Power: Pre-data and Post-data (from “SIST: How to Get Beyond the Stat Wars”) 04/27/19

Tour II (full proofs)

(Full) Excerpt. Excursion 5 Tour II: How Not to Corrupt Power (Power Taboos, Retro Power, and Shpower) 06/07/19

Tour III (full proofs)

Deconstructing the Fisher-Neyman conflict wearing Fiducial glasses + Excerpt 5.8 from SIST 02/23/19

Excursion 6

Tour I (full proofs) What Ever Happened to Bayesian Foundations?

Tour II (full proofs)

Excerpts: Souvenir Z: Understanding Tribal Warfare + 6.7 Farewell Keepsake from SIST + List of Souvenirs 05/04/19 (full excerpt)

*Statistical Inference as Severe Testing: How to Get Beyond the Statistics Wars (Mayo, CUP 2018).