In the November/December 2025 issue of American Scientist, a group of authors (Ceci, Clark, Jussim and Williams 2025) argue in “Teams of rivals” that “adversarial collaborations offer a rigorous way to resolve opposing scientific findings, inform key sociopolitical issues, and help repair trust in science”. With adversarial collaborations, a term coined by Daniel Kahneman (2003), teams of divergent scholars, interested in uncovering what is the case (rather than endlessly making their case), design appropriately stringent tests to understand, and perhaps even resolve, their disagreements. I am pleased to see that in describing such tests the authors allude to my notion of severe testing (Mayo 2018)*:

Severe testing is the related idea that the scientific community ought to accept a claim only after it surmounts rigorous tests designed to find its flaws, rather than tests optimally designed for confirmation. The strong motivation each side’s members will feel to severely test the other side’s predictions should inspire greater confidence in the collaboration’s eventual conclusions. (Ceci et al., 2025)

1. Why open science isn’t enough

A seminal controversy in statistical inference is whether error probabilities associated with an inference method are evidentially relevant once the data are in hand. Frequentist error statisticians say yes; Bayesians say no. A “no” answer goes hand in hand with holding the Likelihood Principle (LP), which follows from inference by Bayes’ theorem. A “yes” answer violates the LP (also called the strong LP). Error probabilities drop out under the LP because the LP implies that all the evidence from the data is contained in the likelihood ratios (at least for inference within a statistical model). For the error statistician, likelihood ratios are merely measures of comparative fit and omit crucial information about their reliability. A dramatic illustration of this disagreement involves optional stopping, and it’s the one to which Roderick Little turns in the chapter “Do you like the likelihood principle?” in
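To make the optional stopping point concrete, here is a minimal Python sketch. It is not taken from Little's chapter; the function names, the known-variance normal model, the two-sided 1.96 cutoff, and the caps on sample size are all illustrative assumptions. Data are generated with the null hypothesis true, and the experimenter checks the z statistic after every observation, stopping as soon as it looks nominally significant.

```python
import math
import random


def rejects_with_optional_stopping(max_n, z_crit=1.96, rng=random):
    """Draw N(0, 1) observations one at a time with H0: mu = 0 true.

    Return True if |z| reaches z_crit at any look up to max_n
    (hypothetical "try and try again" stopping rule, sigma known = 1).
    """
    total = 0.0
    for n in range(1, max_n + 1):
        total += rng.gauss(0.0, 1.0)
        z = total / math.sqrt(n)  # z statistic for the sample mean
        if abs(z) >= z_crit:
            return True  # stop early and report "significance"
    return False


def type_i_error_rate(max_n, trials=2000, seed=0):
    """Estimate by simulation how often H0 is erroneously rejected."""
    rng = random.Random(seed)
    hits = sum(
        rejects_with_optional_stopping(max_n, rng=rng) for _ in range(trials)
    )
    return hits / trials


if __name__ == "__main__":
    # max_n = 1 is a single fixed-sample test; larger caps allow more looks.
    for max_n in (1, 10, 100, 1000):
        print(max_n, type_i_error_rate(max_n))
```

With a single look, the estimated rejection rate should sit near the nominal 0.05, and it should climb well above that as more looks are allowed. The likelihood function for the data actually observed does not depend on the stopping rule, so an LP holder treats the final result exactly as a fixed-sample one, whereas the error statistician takes the inflated error rate to be evidentially relevant.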

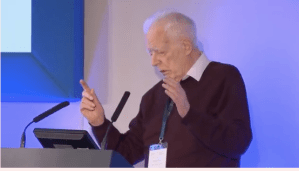

Around a year ago, Professor Rod Little asked me if I’d mind being on the cover of a book he was finishing along with Fisher, Neyman and some others (can you identify the others?). Mind? The book is