
My editorial in Conservation Biology is published (open access): “The Statistics Wars and Intellectual Conflicts of Interest”. Share your comments, here and/or send a separate item (to Error), if you wish, for possible guest posting*. (All readers are invited to a special January 11 Phil Stat Session with Y. Benjamini and D. Hand described here.) Here’s most of the editorial:

The Statistics Wars and Intellectual Conflicts of Interest

How should journal editors react to heated disagreements about statistical significance tests in applied fields, such as conservation science, where statistical inferences often are the basis for controversial policy decisions? They should avoid taking sides. They should also avoid obeisance to calls for author guidelines to reflect a particular statistical philosophy or standpoint. The question is how to prevent the misuse of statistical methods without selectively favoring one side.

The statistical‐significance‐test controversies are well known in conservation science. In a forum revolving around Murtaugh’s (2014) “In Defense of P values,” Murtaugh argues, correctly, that most criticisms of statistical significance tests “stem from misunderstandings or incorrect interpretations, rather than from intrinsic shortcomings of the P value” (p. 611). However, underlying those criticisms, and especially proposed reforms, are often controversial philosophical presuppositions about the proper uses of probability in uncertain inference. Should probability be used to assess a method’s probability of avoiding erroneous interpretations of data (i.e., error probabilities) or to measure comparative degrees of belief or support? Wars between frequentists and Bayesians continue to simmer in calls for reform.

Consider how, in commenting on Murtaugh (2014), Burnham and Anderson (2014 : 627) aver that “P‐values are not proper evidence as they violate the likelihood principle (Royall, 1997).” This presupposes that statistical methods ought to obey the likelihood principle (LP), a long‐standing point of controversy in the statistics wars. The LP says that all the evidence is contained in a ratio of likelihoods (Berger & Wolpert, 1988). Because this is to condition on the particular sample data, there is no consideration of outcomes other than those observed and thus no consideration of error probabilities. One should not write this off because it seems technical: methods that obey the LP fail to directly register gambits that alter their capability to probe error. Whatever one’s view, a criticism based on presupposing the irrelevance of error probabilities is radically different from one that points to misuses of tests for their intended purpose—to assess and control error probabilities.

Error control is nullified by biasing selection effects: cherry‐picking, multiple testing, data dredging, and flexible stopping rules. The resulting (nominal) p values are not legitimate p values. In conservation science and elsewhere, such misuses can result from a publish‐or‐perish mentality and experimenter’s flexibility (Fidler et al., 2017). These led to calls for preregistration of hypotheses and stopping rules–one of the most effective ways to promote replication (Simmons et al., 2012). However, data dredging can also occur with likelihood ratios, Bayes factors, and Bayesian updating, but the direct grounds to criticize inferences as flouting error probability control are lost. This conflicts with a central motivation for using p values as a “first line of defense against being fooled by randomness” (Benjamini, 2016). The introduction of prior probabilities (subjective, default, or empirical)–which may also be data dependent–offers further flexibility.

Signs that one is going beyond merely enforcing proper use of statistical significance tests are that the proposed reform is either the subject of heated controversy or is based on presupposing a philosophy at odds with that of statistical significance testing. It is easy to miss or downplay philosophical presuppositions, especially if one has a strong interest in endorsing the policy upshot: to abandon statistical significance. Having the power to enforce such a policy, however, can create a conflict of interest (COI). Unlike a typical COI, this one is intellectual and could threaten the intended goals of integrity, reproducibility, and transparency in science.

If the reward structure is seducing even researchers who are aware of the pitfalls of capitalizing on selection biases, then one is dealing with a highly susceptible group. For a journal or organization to take sides in these long-standing controversies—or even to appear to do so—encourages groupthink and discourages practitioners from arriving at their own reflective conclusions about methods.

The American Statistical Association (ASA) Board appointed a President’s Task Force on Statistical Significance and Replicability in 2019 that was put in the odd position of needing to “address concerns that a 2019 editorial [by the ASA’s executive director (Wasserstein et al., 2019)] might be mistakenly interpreted as official ASA policy” (Benjamini et al., 2021)—as if the editorial continues the 2016 ASA Statement on p-values (Wasserstein & Lazar, 2016). That policy statement merely warns against well‐known fallacies in using p values. But Wasserstein et al. (2019) claim it “stopped just short of recommending that declarations of ‘statistical significance’ be abandoned” and announce taking that step. They call on practitioners not to use the phrase statistical significance and to avoid p value thresholds. Call this the no‐threshold view. The 2016 statement was largely uncontroversial; the 2019 editorial was anything but. The President’s Task Force should be commended for working to resolve the confusion (Kafadar, 2019). Their report concludes: “P-values are valid statistical measures that provide convenient conventions for communicating the uncertainty inherent in quantitative results” (Benjamini et al., 2021). A disclaimer that Wasserstein et al., 2019 was not ASA policy would have avoided both the confusion and the slight to opposing views within the Association.

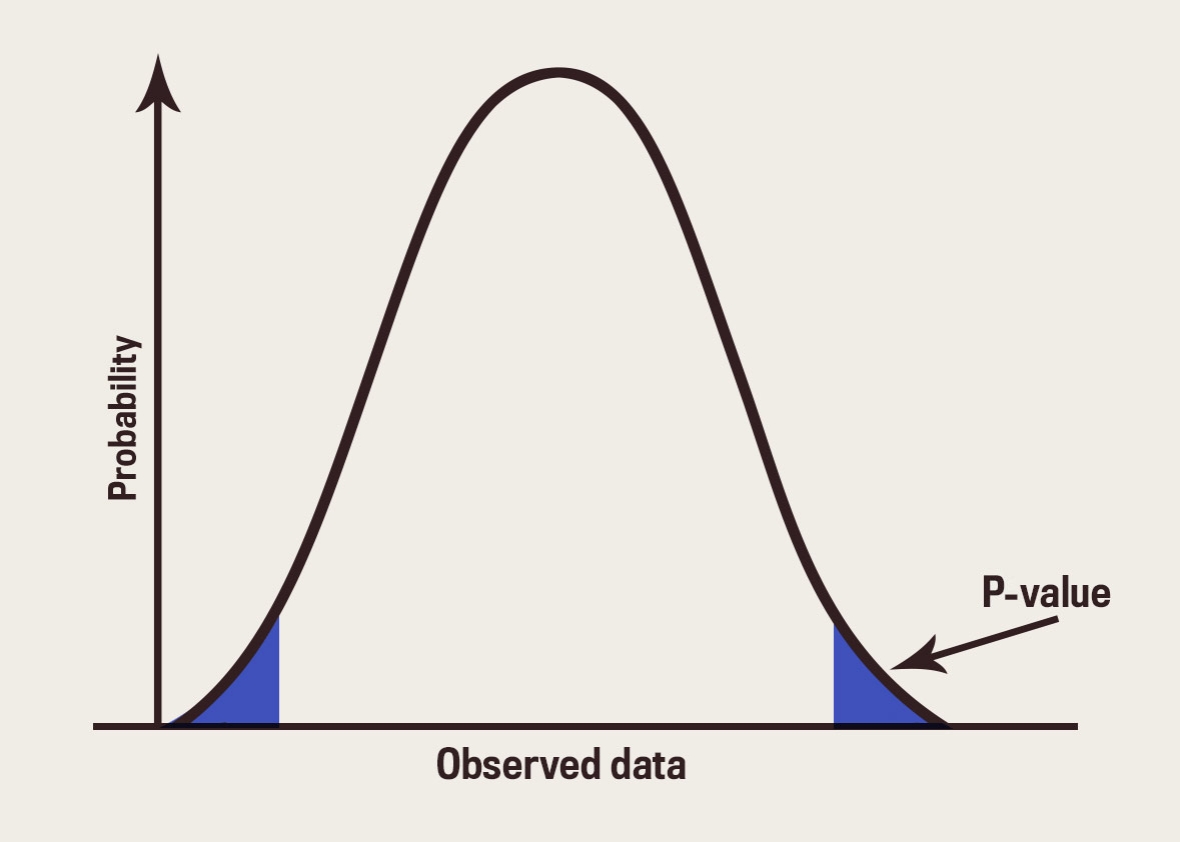

The no‐threshold view has consequences (likely unintended). Statistical significance tests arise “to test the conformity of the particular data under analysis with [a statistical hypothesis] H0 in some respect to be specified” (Mayo & Cox, 2006: 81). There is a function D of the data, the test statistic, such that the larger its value (d), the more inconsistent are the data with H0. The p value is the probability the test would have given rise to a result more discordant from H0 than d is were the results due to background or chance variability (as described in H0). In computing p, hypothesis H0 is assumed merely for drawing out its probabilistic implications. If even larger differences than d are frequently brought about by chance alone (p is not small), the data are not evidence of inconsistency with H0. Requiring a low p value before inferring inconsistency with H0 controls the probability of a type I error (i.e., erroneously finding evidence against H0).
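To make the definition concrete, here is a minimal sketch (not part of the editorial) of a p value for a one-sided test of a normal mean, H0: μ ≤ μ0, assuming a known standard deviation. The function name and all numbers are hypothetical and chosen only for illustration.

```python
from math import sqrt
from statistics import NormalDist

Z = NormalDist()  # standard normal distribution

def one_sided_p_value(xbar, mu0, sigma, n):
    """p value for H0: mu <= mu0 vs H1: mu > mu0 (sigma assumed known)."""
    d = (xbar - mu0) / (sigma / sqrt(n))  # observed value of the test statistic D
    return 1.0 - Z.cdf(d)                 # P(D > d) computed under mu = mu0

# Hypothetical data: sample mean 0.4 from n = 25 observations, sigma = 1.
p = one_sided_p_value(xbar=0.4, mu0=0.0, sigma=1.0, n=25)
print(round(p, 4))
```

Here d = 2, so results more discordant from H0 than the one observed would arise only about 2% of the time by chance variability alone.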

…

Whether interpreting a simple Fisherian or an N‐P test, avoiding fallacies calls for considering one or more discrepancies from the null hypothesis under test. Consider testing a normal mean H0: μ ≤ μ0 versus H1: μ > μ0. If the test would fairly probably have resulted in a smaller p value than observed, if μ = μ1 were true (where μ1 = μ0 + γ, for γ > 0), then the data provide poor evidence that μ exceeds μ1. It would be unwarranted to infer evidence of μ > μ1. Tests do not need to be abandoned when the fallacy is easily avoided by computing p values for one or two additional benchmarks (Burgman, 2005; Hand, 2021; Mayo, 2018; Mayo & Spanos, 2006).
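The benchmark computation can be sketched as follows, again assuming a known standard deviation; the function name and the numbers are illustrative assumptions, not taken from the editorial. For each candidate μ1, it computes the probability that the test would have produced a smaller p value than the one observed if μ = μ1 were true.

```python
from math import sqrt
from statistics import NormalDist

Z = NormalDist()  # standard normal distribution

def prob_smaller_p_value(xbar, mu1, sigma, n):
    """P(sample mean > observed xbar) computed under mu = mu1.

    A larger sample mean means a smaller p value, so this is the
    probability of a smaller p value than observed if mu = mu1.
    """
    se = sigma / sqrt(n)
    return 1.0 - Z.cdf((xbar - mu1) / se)

# Hypothetical significant result: xbar = 0.4, sigma = 1, n = 25 (p is about 0.023).
xbar, sigma, n = 0.4, 1.0, 25
benchmarks = {mu1: prob_smaller_p_value(xbar, mu1, sigma, n) for mu1 in (0.2, 0.4, 0.6)}
for mu1, prob in benchmarks.items():
    print(f"mu1 = {mu1}: P(smaller p value; mu = mu1) = {prob:.3f}")
```

With these made-up numbers the probability is about 0.16 at μ1 = 0.2 but about 0.84 at μ1 = 0.6, so the same significant result that gives reasonable grounds for μ > 0.2 gives poor evidence that μ > 0.6.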

The same is true for avoiding fallacious interpretations of nonsignificant results. These are often of concern in conservation, especially when interpreted as showing that no risks exist. In fact, the test may have had a low probability to detect risks. But nonsignificant results are not uninformative. If the test very probably would have resulted in a more statistically significant result were there a meaningful effect, say μ > μ1 (where μ1 = μ0 + γ, for γ > 0), then the data are evidence that μ < μ1. (This is not to infer μ ≤ μ0.) “Such an assessment is more relevant to specific data than is the notion of power” (Mayo & Cox, 2006: 89). This also matches inferring that μ is less than the upper bound of the corresponding confidence interval (at the associated confidence level) or a severity assessment (Mayo, 2018). Others advance equivalence tests (Lakens, 2017; Wellek, 2017). An N‐P test tells one to specify H0 so that the type I error is the more serious (considering costs); that alone can alleviate problems in the examples critics adduce (H0 would be that the risk exists).
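The link to confidence bounds can be checked numerically. The sketch below is illustrative only: it assumes a normal mean with known standard deviation, and the function names and numbers are hypothetical. It computes the probability of a more significant result under μ = μ1, then shows that the one-sided upper confidence bound at that level is μ1 itself.

```python
from math import sqrt
from statistics import NormalDist

Z = NormalDist()  # standard normal distribution

def prob_more_significant(xbar, mu1, sigma, n):
    """P(sample mean > observed xbar) computed under mu = mu1."""
    return 1.0 - Z.cdf((xbar - mu1) / (sigma / sqrt(n)))

def upper_confidence_bound(xbar, sigma, n, level):
    """One-sided upper confidence bound for mu at the given confidence level."""
    return xbar + Z.inv_cdf(level) * sigma / sqrt(n)

# Hypothetical nonsignificant result: xbar = 0.2, sigma = 1, n = 25 (p is about 0.16).
xbar, sigma, n = 0.2, 1.0, 25
level = prob_more_significant(xbar, mu1=0.6, sigma=sigma, n=n)
bound = upper_confidence_bound(xbar, sigma, n, level)
print(f"P(more significant result; mu = 0.6) = {level:.3f}")
print(f"upper bound at that confidence level = {bound:.3f}")
```

In this example a more significant result would have occurred about 98% of the time were μ as large as 0.6, so the data are evidence that μ < 0.6, even though they do not warrant inferring μ ≤ 0.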

Many think the no‐threshold view merely insists that the attained p value be reported. But leading N‐P theorists already recommend reporting p, which “gives an idea of how strongly the data contradict the hypothesis…[and] enables others to reach a verdict based on the significance level of their choice” (Lehmann & Romano, 2005: 63−64). What the no‐threshold view does, if taken strictly, is preclude testing. If one cannot say ahead of time about any result that it will not be allowed to count in favor of a claim, then one does not test that claim. There is no test or falsification, even of the statistical variety. What is the point of insisting on replication if at no stage can one say the effect failed to replicate? One may argue for approaches other than tests, but it is unwarranted to claim by fiat that tests do not provide evidence. (For a discussion of rival views of evidence in ecology, see Taper & Lele, 2004.)

Many sign on to the no‐threshold view thinking it blocks perverse incentives to data dredge, multiple test, and p hack when confronted with a large, statistically nonsignificant p value. Carefully considered, the reverse seems true. Even without the word significance, researchers could not present a large (nonsignificant) p value as indicating a genuine effect. It would be nonsensical to say that, even though more extreme results would frequently occur by random variability alone, their data are evidence of a genuine effect. The researcher would still need a small p value, which is to operate with a threshold. However, it would be harder to hold data dredgers culpable for reporting a nominally small p value obtained through data dredging. What distinguishes nominal p values from actual ones is that they fail to meet a prespecified error probability threshold.

…

While it is well known that stopping when the data look good inflates the type I error probability, a strict Bayesian is not required to adjust for interim checking because the posterior probability is unaltered. Advocates of Bayesian clinical trials are in a quandary because “The [regulatory] requirement of Type I error control for Bayesian [trials] causes them to lose many of their philosophical advantages, such as compliance with the likelihood principle” (Ryan et al., 2020: 7).
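The inflation from stopping when the data look good is easy to see in a small simulation. This is a rough sketch under assumptions of my own choosing, not taken from the editorial: data are standard normal (so H0: μ ≤ 0 is true), there are five equally spaced looks, and the nominal one-sided 0.05 cutoff is applied at each look. All names and settings are hypothetical.

```python
import math
import random

def run_trial(rng, looks, batch, z_crit):
    """Simulate one study under H0 (mu = 0, sigma = 1).

    Returns (crossed the cutoff at any look, crossed it at the final look).
    """
    total, n = 0.0, 0
    crossed_any = False
    z = 0.0
    for _ in range(looks):
        for _ in range(batch):
            total += rng.gauss(0.0, 1.0)
        n += batch
        z = total / math.sqrt(n)  # z statistic for H0: mu <= 0 with sigma = 1
        if z > z_crit:
            crossed_any = True    # an optional stopper would stop and report here
    return crossed_any, z > z_crit

def simulate(trials=4000, looks=5, batch=20, z_crit=1.645, seed=1):
    rng = random.Random(seed)
    peeking = fixed = 0
    for _ in range(trials):
        any_look, final_look = run_trial(rng, looks, batch, z_crit)
        peeking += any_look
        fixed += final_look
    return peeking / trials, fixed / trials

rate_peeking, rate_fixed = simulate()
print(f"type I error rate, fixed sample size: {rate_fixed:.3f}")
print(f"type I error rate, stop at first crossing: {rate_peeking:.3f}")
```

The fixed-sample rate should land near the nominal 0.05, while the rate with interim peeking is noticeably higher, which is why the reported p value is only nominal unless the stopping rule is taken into account.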

It may be retorted that implausible inferences will indirectly be blocked by appropriate prior degrees of belief (informative priors), but this misses the crucial point. The key function of statistical tests is to constrain the human tendency to selectively favor views they believe in. There are ample forums for debating statistical methodologies. There is no call for executive directors or journal editors to place a thumb on the scale. Whether in dealing with environmental policy advocates, drug lobbyists, or avid calls to expel statistical significance tests, a strong belief in the efficacy of an intervention is distinct from its having been well tested. Applied science will be well served by editorial policies that uphold that distinction.

For the acknowledgments and references, see the full editorial here.

I will cite as many (constructive) readers’ views as I can at the upcoming forum with Yoav Benjamini and David Hand on January 11 on Zoom (see this post). *Authors of articles I put up as guest posts or cite at the Forum will get a free copy of my Statistical Inference as Severe Testing: How to Get Beyond the Statistics Wars (CUP, 2018).