Call for papers

PSX Philosophy of Scientific Experimentation 3 (PSX3)

Friday and Saturday, October 5 and 6, 2012

University of Colorado, Boulder

Keynote Speakers: Professor Eric Cornell, University of Colorado, Nobel Prize (Physics, 2001)

Professor Friedrich Steinle, History of Science, University of Berlin

Experiments play essential roles in science. Philosophers of science have emphasized their role in the testing of theories, but experiments also play other important roles. They are, for example, essential for exploring new phenomenological realms and for discovering new effects and phenomena. Nevertheless, experiments remain an underrepresented topic in mainstream philosophy of science. This conference on the philosophy of scientific experimentation, the third in a series, is intended to give a home to philosophical interests in, and concerns about, experiment. Among the questions to be discussed are the following: How is experimental practice organized: around theories or around something else? How independent is experimentation from theory? Does it have a life of its own? Can experiments help counter the threat posed to the objectivity of science by theory-ladenness, underdetermination, or the Duhem-Quine thesis? What are the important similarities and differences between experiments in different sciences? What experimental strategies do scientists use to make sure that their experiments work correctly? How are phenomena discovered or created in the laboratory? Is experimental knowledge epistemically more secure than observational knowledge? Can experiments give us good reasons for belief in theoretical entities? What role do computer simulations play in the assessment of experimental background? How trustworthy are they? Do they warrant the same kinds of inferences as experimental knowledge? Are they theory by other means?

Submissions on any aspect of experiment and simulation are welcome. They should take the form of an extended abstract (1000 words) submitted through EasyChair: https://www.easychair.org/conferences/?conf=psx3

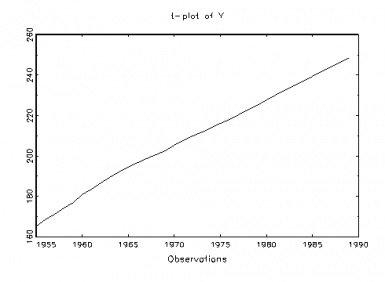

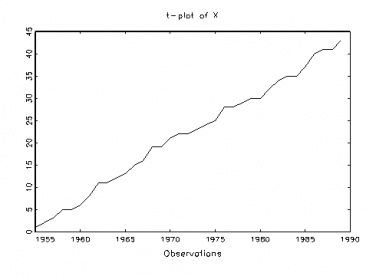

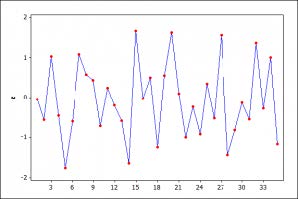

The Nature of the Inferences From Graphical Techniques: What is the status of what we learn from graphs? In this view, graphs suggest which kinds of violations it would be useful to probe, much as a forensic clue (e.g., a footprint or tire track) helps narrow the search for a suspect, or a fault tree the search for a cause. The same discernment can be achieved with a formal analysis (using parametric and nonparametric tests), perhaps more discriminating than even the most trained eye, but the reasoning and the justification are much the same. (The capabilities of these techniques may be checked by simulating data deliberately generated to violate or obey the various assumptions.)
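The simulation check mentioned in the closing parenthetical can be sketched in a few lines. This is a minimal illustration under assumptions of our own, not a procedure from the post: the assumption being probed is independence, the formal check is a nonparametric Wald-Wolfowitz runs test (normal approximation), and the deliberate violation is positive autocorrelation from a Gaussian AR(1) process. The function names `runs_test_pvalue`, `ar1_series`, and `rejection_rate` are hypothetical. Data that obey the assumption should be flagged at roughly the nominal rate, while data built to violate it should be flagged far more often.

```python
import math
import random
import statistics


def runs_test_pvalue(xs):
    """Two-sided Wald-Wolfowitz runs test for randomness about the median.

    Values equal to the median are dropped; uses the normal approximation.
    """
    med = statistics.median(xs)
    signs = [x > med for x in xs if x != med]
    n1 = sum(signs)           # observations above the median
    n2 = len(signs) - n1      # observations below the median
    if n1 == 0 or n2 == 0:
        return 1.0
    runs = 1 + sum(1 for a, b in zip(signs, signs[1:]) if a != b)
    n = n1 + n2
    mean = 2 * n1 * n2 / n + 1
    var = 2 * n1 * n2 * (2 * n1 * n2 - n) / (n * n * (n - 1))
    if var == 0:
        return 1.0
    z = (runs - mean) / math.sqrt(var)
    # 2 * (1 - Phi(|z|)) expressed via the complementary error function
    return math.erfc(abs(z) / math.sqrt(2))


def ar1_series(n, phi, rng):
    """Illustrative generator: x_t = phi * x_{t-1} + e_t; phi = 0 gives i.i.d. noise."""
    x, out = 0.0, []
    for _ in range(n):
        x = phi * x + rng.gauss(0.0, 1.0)
        out.append(x)
    return out


def rejection_rate(phi, n=100, reps=2000, alpha=0.05, seed=0):
    """Fraction of simulated series the runs test flags at level alpha."""
    rng = random.Random(seed)
    hits = sum(runs_test_pvalue(ar1_series(n, phi, rng)) < alpha for _ in range(reps))
    return hits / reps


if __name__ == "__main__":
    print("obeys independence (phi=0.0):", rejection_rate(0.0))
    print("violates independence (phi=0.5):", rejection_rate(0.5))
```

The first rate estimates how often the test raises a false alarm when the assumption holds; the second estimates its power against this particular violation. Swapping in other generators (heavy tails, trends, unequal variances) would probe other assumptions the same way.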