We’re discussing the philosophy of misspecification (m-s) testing in our seminar today, so I’m reblogging this post from Feb. 2012. I’ve linked the 3 follow-ups below. Check the original posts for some good discussion. (Note visitor*)

“This is the kind of cure that kills the patient!”

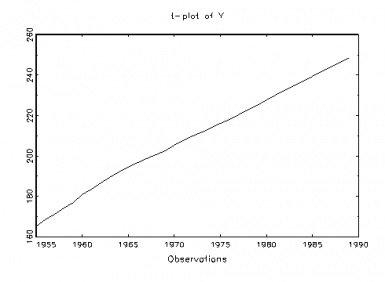

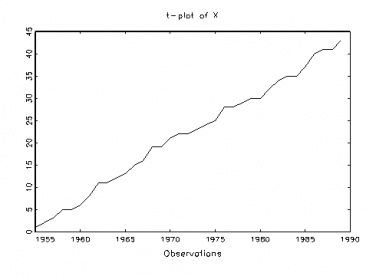

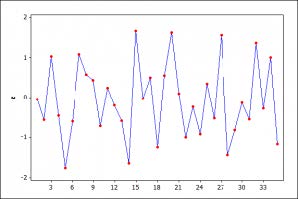

is the line of Aris Spanos that I most remember from when I first heard him talk about testing assumptions of, and respecifying, statistical models in 1999. (The patient, of course, is the statistical model.) On finishing my book, EGEK 1996, I had been keen to fill its central gaps, one of which was fleshing out a crucial piece of the error-statistical framework of learning from error: how to validate the assumptions of statistical models. But the whole problem turned out to be far more philosophically, not to mention technically, challenging than I imagined. I will try (in 3 short posts) to sketch a procedure that I think puts the entire process of model validation on a sound logical footing.